Why might gradient descent fail?

Gradient descent is an algorithm designed to find optimal points, but the points it finds are not necessarily global optima. If the iterates happen to escape the neighborhood of one local optimum, they may converge to another optimal point, but the probability of this is low.
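To make the local-versus-global point concrete, here is a minimal sketch in Python. The function `f(x) = x^4 - 3x^2 + x`, the learning rate, the step count, and the starting points are all assumptions chosen for illustration; they do not come from the original text. The function has a global minimum near x = -1.30 and a shallower local minimum near x = 1.13, so the result depends on where the descent starts.

```python
def f(x):
    # Hypothetical non-convex objective with two minima.
    return x**4 - 3 * x**2 + x


def grad_f(x):
    # Analytic derivative of f.
    return 4 * x**3 - 6 * x + 1


def gradient_descent(grad, x0, lr=0.01, steps=1000):
    # Plain gradient descent with a fixed learning rate.
    x = x0
    for _ in range(steps):
        x = x - lr * grad(x)
    return x


# Same algorithm, two starting points (both chosen arbitrarily).
x_right = gradient_descent(grad_f, x0=2.0)   # settles in the local minimum
x_left = gradient_descent(grad_f, x0=-2.0)   # settles in the global minimum

print("start  2.0 -> x =", round(x_right, 3), " f(x) =", round(f(x_right), 3))
print("start -2.0 -> x =", round(x_left, 3), " f(x) =", round(f(x_left), 3))
```

Started from 2.0, the iterates stop at the nearer, shallower minimum even though a lower value of `f` exists on the other side of the hump.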

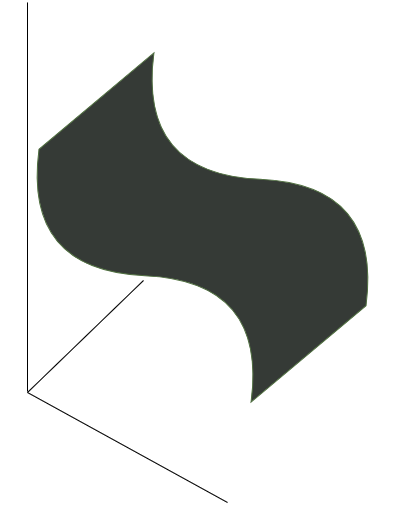

Consider the following "recliner chair" type of function (see the image).

Such a function can be constructed so that there is a range in the middle where the gradient is the zero vector. Gradient descent takes no step wherever the gradient is zero, so it stalls in that range and fails to find the global optimum.
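The flat-region failure can be sketched with a small piecewise function. This is a hypothetical one-dimensional construction, not the function shown in the image: it slopes down from the right, is perfectly flat on the interval [0, 2], and only reaches its global minimum at x = -1. The names `plateau_f`, `plateau_grad`, and `descend`, as well as the learning rate and step count, are assumptions made for this example.

```python
def plateau_f(x):
    # Hypothetical "recliner chair" shape in one dimension.
    if x < 0:
        return (x + 1) ** 2        # bowl containing the global minimum at x = -1
    if x <= 2:
        return 1.0                 # flat seat: the function is constant here
    return (x - 2) ** 2 + 1.0      # backrest sloping down toward the seat


def plateau_grad(x):
    # Derivative of plateau_f on each piece; zero on the flat seat.
    if x < 0:
        return 2 * (x + 1)
    if x <= 2:
        return 0.0
    return 2 * (x - 2)


def descend(grad, x0, lr=0.1, steps=200):
    # Plain gradient descent with a fixed learning rate.
    x = x0
    for _ in range(steps):
        x = x - lr * grad(x)
    return x


x_stuck = descend(plateau_grad, x0=1.0)   # starts on the plateau, never moves
x_edge = descend(plateau_grad, x0=4.0)    # slides down and stalls at the plateau edge

print("start 1.0 -> x =", x_stuck, " f(x) =", plateau_f(x_stuck))
print("start 4.0 -> x =", round(x_edge, 6), " f(x) =", round(plateau_f(x_edge), 6))
print("global minimum value:", plateau_f(-1.0))
```

In both runs the update `lr * grad(x)` shrinks to zero while the objective is still 1.0, well above the value 0.0 attained at x = -1.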

Tags

Data Science
