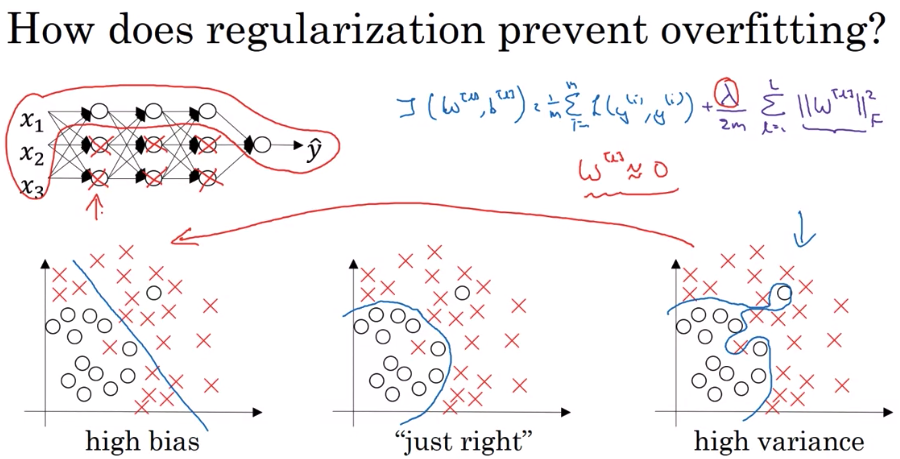

Why does regularization prevent overfitting?

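Adding a penalty term to the cost discourages the weight matrices from becoming too large, but why does that prevent overfitting? If lambda is made very large, the weights are incentivized to stay close to 0. In other words, you are nearly zeroing the impact of many hidden units, so the result behaves like a much simpler network. The hidden units are still there, but they now have a much smaller effect. There is a second way to see it: with a small W, the pre-activation Z is also small, and an activation function such as tanh is roughly linear near 0. If every layer is roughly linear, the whole network computes something close to a linear function, which cannot fit the highly non-linear decision boundaries that overfitting relies on.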
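Both effects can be checked numerically. The sketch below is only an illustration under stated assumptions: it assumes the common L2 form of the penalty, J = loss + (lambda / (2m)) * sum of squared weights, and uses tanh as the activation. The function names `l2_penalized_step` and `max_linear_gap`, the learning rate `lr`, and the example size `m` are hypothetical names chosen for this example, not taken from the original text.

```python
import math

def l2_penalized_step(w, grad, lr, lam, m):
    """One gradient step on J = loss + (lam / (2*m)) * sum(w_i^2).

    Assumed penalty form. It adds (lam / m) * w_i to each gradient
    component, so each step also scales w_i by (1 - lr * lam / m).
    """
    return [wi - lr * (gi + (lam / m) * wi) for wi, gi in zip(w, grad)]

def max_linear_gap(scale, n=101):
    """Largest relative gap |tanh(z) - z| / |z| for z in [-scale, scale]."""
    gaps = []
    for i in range(n):
        # Evenly spaced grid; the midpoint is exactly 0 and is skipped.
        z = scale * (2 * i - (n - 1)) / (n - 1)
        if z != 0.0:
            gaps.append(abs(math.tanh(z) - z) / abs(z))
    return max(gaps)

# Hold the data gradient at zero so only the penalty acts on the weights.
w = [2.0, -3.0]
for _ in range(100):
    w = l2_penalized_step(w, grad=[0.0, 0.0], lr=0.1, lam=5.0, m=10)
shrunk_norm = math.sqrt(sum(wi * wi for wi in w))

small_gap = max_linear_gap(0.1)  # pre-activations close to 0
large_gap = max_linear_gap(3.0)  # pre-activations spread widely

print("weight norm after penalty-only steps:", shrunk_norm)
print("tanh vs. linear, |z| <= 0.1:", small_gap)
print("tanh vs. linear, |z| <= 3.0:", large_gap)
```

The first number shows the penalty alone driving the weights toward 0, and the other two compare how far tanh departs from a straight line when Z is small versus when it is large. In a real network the data gradient is not zero, so the weights settle at a trade-off between fitting the data and staying small rather than collapsing fully.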

Tags

Data Science

Related

Popular Regularization Techniques in Deep Learning

Human Level Performance: Based on the evidence below, which two of the following four options seem the most promising to try?

Local Constancy and Smoothness Priors

Parameter Sharing

Parameter Tying

L1 regularization and L2 regularization

MTL as a Regularizer

Parameter Penalties in Classical Regularization

Regularization Makes Larger Models Safer Against Overfitting