Why Use a Non-Linear Activation Function

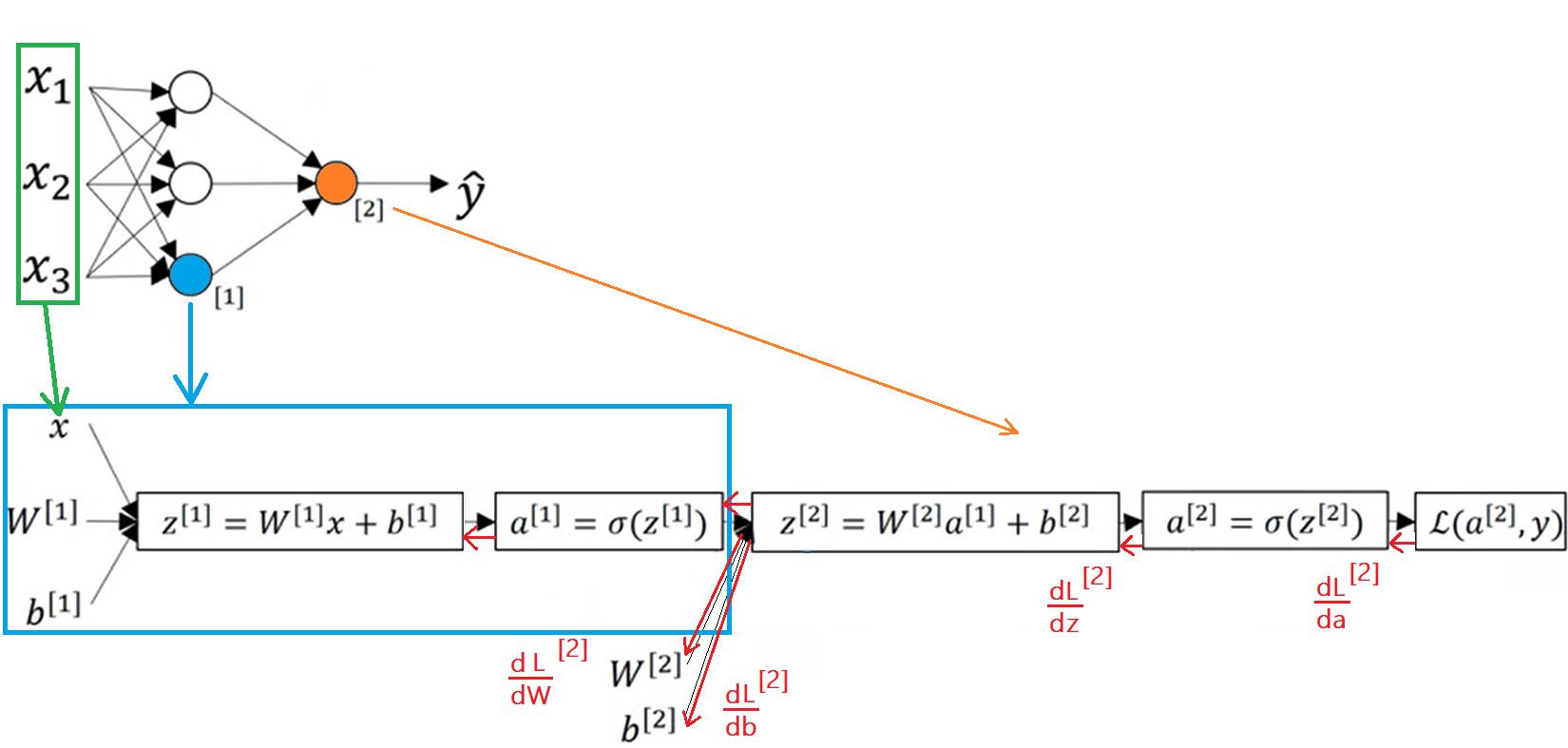

If a neural network uses only linear activation functions, every layer applies a linear (affine) transformation of its input, and a composition of linear transformations is itself linear. However many hidden layers it has, the network therefore collapses to a single linear map of the input: with a linear output it is no more expressive than linear regression, and with a sigmoid output it is no more expressive than standard logistic regression without any hidden layers. Nonlinear activation functions are what allow the network to approximate other, more complex kinds of functions.
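The claim that a network with only linear activations reduces to a single linear transformation can be checked numerically. The following is a minimal sketch in plain Python, assuming a hypothetical 2-3-1 network; the weights `W1`, `b1`, `W2`, `b2` and all helper names are made up for illustration and do not come from the source. It compares the two-layer linear network with the single layer `W = W2·W1`, `b = W2·b1 + b2`, then swaps in a ReLU hidden activation to show that the result is no longer affine.

```python
import math

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def matmul(A, B):
    """Multiply matrix A by matrix B (both given as lists of rows)."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]

def add(u, v):
    """Element-wise sum of two vectors."""
    return [a + b for a, b in zip(u, v)]

# Hypothetical weights for a 2-3-1 network (illustrative values only).
W1 = [[1.0, -2.0], [0.5, 0.0], [-1.0, 3.0]]
b1 = [0.5, -1.0, 2.0]
W2 = [[2.0, -1.0, 0.5]]
b2 = [0.25]

def linear_net(x):
    """Two layers with the identity (linear) activation in the hidden layer."""
    h = add(matvec(W1, x), b1)
    return add(matvec(W2, h), b2)

# Collapse the two linear layers into one: W = W2 W1, b = W2 b1 + b2.
W = matmul(W2, W1)
b = add(matvec(W2, b1), b2)

def collapsed(x):
    """Single linear layer that should match linear_net on every input."""
    return add(matvec(W, x), b)

def relu_net(x):
    """Same weights, but with a ReLU nonlinearity in the hidden layer."""
    h = [max(0.0, z) for z in add(matvec(W1, x), b1)]
    return add(matvec(W2, h), b2)

def is_affine_at(f, x):
    """Necessary condition for an affine f: f(x) + f(-x) == 2 * f(0)."""
    neg_x = [-xi for xi in x]
    zero = [0.0] * len(x)
    return math.isclose(f(x)[0] + f(neg_x)[0], 2 * f(zero)[0])

for x in ([1.0, 1.0], [-3.0, 0.5], [0.0, 2.0]):
    print(x, linear_net(x), collapsed(x), relu_net(x))
```

The symmetry check in `is_affine_at` is only a necessary condition for a function to be affine, so it can prove that the ReLU network is not a single linear layer but cannot by itself prove that a function is one. For the linear network, the agreement with `collapsed` is the actual evidence.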

Updated 2021-03-12

Tags

Data Science