Learn Before

Application of RoPE to Token Embeddings

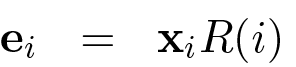

The final embedding for a token at position $i$, denoted $e_i$, is obtained by applying the Rotary Positional Embedding (RoPE) transformation to the token's original embedding $x_i$. This is represented by the function $\mathrm{Ro}(x_i, i\theta)$, where $i$ is the position and $\theta$ represents the rotational frequency parameters. The formula is:

$$e_i = \mathrm{Ro}(x_i, i\theta)$$
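As a minimal sketch of the transformation described above, the NumPy snippet below rotates each consecutive pair of embedding dimensions by an angle equal to the position times a per-pair frequency. The helper names `rope_frequencies` and `apply_rope`, the base of 10000, and the pairing of adjacent dimensions are illustrative assumptions, not details stated in this card.

```python
import numpy as np

def rope_frequencies(d, base=10000.0):
    """Hypothetical helper: one rotation frequency per 2D pair of dimensions.

    Assumes the common choice theta_k = base^(-2k/d) for k = 0 .. d/2 - 1.
    """
    k = np.arange(d // 2)
    return base ** (-2.0 * k / d)

def apply_rope(x, position, theta):
    """Rotate each pair (x[2k], x[2k+1]) by the angle position * theta[k]."""
    x = np.asarray(x, dtype=float)
    angles = position * theta            # one angle per 2D pair
    cos, sin = np.cos(angles), np.sin(angles)
    x_even, x_odd = x[0::2], x[1::2]     # first and second coordinate of each pair
    out = np.empty_like(x)
    out[0::2] = x_even * cos - x_odd * sin
    out[1::2] = x_even * sin + x_odd * cos
    return out

# The same (made-up) token embedding placed at two different positions.
x = np.array([1.0, 0.0, 0.5, -0.5])
theta = rope_frequencies(len(x))
e_4 = apply_rope(x, 4, theta)
e_12 = apply_rope(x, 12, theta)
```

Under these assumptions, `e_4` and `e_12` are different vectors with the same length as `x`, since a rotation changes direction but not norm. At position 0 the rotation angle is zero, so the embedding is returned unchanged.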

Tags

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Ch.3 Prompting - Foundations of Large Language Models

Related

Comparison of Rotary and Sinusoidal Embeddings

Conceptual Illustration of RoPE's Rotational Mechanism

Example of RoPE Capturing Relative Positional Information

Application of RoPE to d-dimensional Embeddings

Application of RoPE to Token Embeddings

RoPE as a Linear Combination of Periodic Functions

Consider two distinct methods for encoding a token's position within a sequence. Method A calculates a unique positional vector and adds it to the token's embedding. Method B applies a rotational transformation to the token's embedding, with the angle of rotation determined by the token's position. Based on these descriptions, which statement best analyzes a fundamental difference in how these two methods integrate positional context?

Positional Information in Vector Transformations

Analyzing Relative Positional Information

Selecting a Positional Strategy for a Long-Context Retrofit

Diagnosing Long-Context Failures Across Positional Schemes

Choosing and Justifying a Positional Retrofit Under Long-Context and Latency Constraints

Long-Context Retrofit Decision: RoPE Base Scaling vs ALiBi vs T5 Relative Bias

Post-Retrofit Regression: Separating Positional-Method Effects from Scaling Choices

Root-Cause Analysis of Long-Context Degradation After a Positional-Encoding Retrofit

You are reviewing a proposal to extend a productio...

You’re reviewing three proposed positional mechani...

Your team is extending a pretrained Transformer fr...

You’re debugging a long-context retrofit of a pret...

Advantage of Rotary over Sinusoidal Embeddings for Long Sequences

Formula for Multiplicative Positional Embeddings

Angle Preservation in Rotary Embeddings

Learn After

Application of RoPE Rotation to a 2D Vector

RoPE Frequency Parameters

Definition of the 2x2 RoPE Rotation Matrix Block

RoPE Parameter Vector Definition

Definition of RoPE Parameter Vector (θ)

A language model encodes token positions by applying a unique, position-dependent rotational transformation to each token's initial embedding. The final, position-aware embedding for a token is the result of this transformation. If the exact same token (e.g., 'model') appears at position 4 and later at position 12 in a sequence, which statement best describes the relationship between their final embeddings, $e_4$ and $e_{12}$?

RoPE 2D Vector Rotation Formula

Formula for RoPE-Encoded Token Embedding

Uniqueness of RoPE-based Embeddings

Debugging a RoPE Implementation