Background (Accelerating Human Learning With Deep Reinforcement Learning)

Here the authors describe three existing probabilistic models of human memory:

Exponential Forgetting Curve - one of the oldest models of human memory. The authors use a parameterization in which the student either forgets an item completely or retains it, so the recall outcome Z is modeled as:

$$Z \sim \text{Bernoulli}\left(\exp\left(-\theta \frac{D}{S}\right)\right)$$

"where θ ∈ R+ is the item difficulty, D ∈ R+ is the time elapsed since the item was last reviewed by the student, and S ∈ R+ is the student’s memory strength for the item." S is set to the number of trials.
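A minimal Python sketch of the exponential forgetting curve may make the formula more concrete. The function names `efc_recall_probability` and `sample_recall` are hypothetical and not taken from the paper, and allowing a delay of exactly zero is an assumption made for convenience.

```python
import math
import random
from typing import Optional


def efc_recall_probability(theta: float, delay: float, strength: float) -> float:
    """Recall probability exp(-theta * D / S) from the formula above.

    theta    -- item difficulty (must be positive)
    delay    -- time D since the last review (assumed non-negative here)
    strength -- memory strength S (must be positive)
    """
    if theta <= 0 or strength <= 0 or delay < 0:
        raise ValueError("theta and strength must be > 0, delay must be >= 0")
    return math.exp(-theta * delay / strength)


def sample_recall(theta: float, delay: float, strength: float,
                  rng: Optional[random.Random] = None) -> bool:
    """Draw one Bernoulli outcome Z: True means the item was recalled."""
    rng = rng or random.Random()
    return rng.random() < efc_recall_probability(theta, delay, strength)


if __name__ == "__main__":
    # Same item and delay, but more trials (larger S) gives a higher recall probability.
    for s in (1, 2, 5):
        print(s, efc_recall_probability(theta=0.5, delay=3.0, strength=s))
```

The probability falls as the delay D grows and rises as the memory strength S grows, which is the behavior the formula is meant to capture.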

Half-Life Regression - unlike the exponential forgetting curve, this model has no explicit difficulty parameter. Instead it uses a feature vector x ∈ X, which contains information about the student's study history, and model parameters θ ∈ Θ.

The features encode the number of attempts, the numbers of correct and incorrect answers, and the item identity. Dropping the item difficulty loses no information, because the difficulty "is absorbed into the memory strength via the coefficients of the item identity indicator features."
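The feature encoding described above can be sketched in Python. The layout of the vector (three counts followed by a one-hot item indicator) and the names `hlr_features` and `linear_score` are assumptions made for illustration. The sketch stops at the linear combination of parameters and features and does not reproduce the paper's recall formula.

```python
from typing import List, Sequence


def hlr_features(n_attempts: int, n_correct: int, n_incorrect: int,
                 item_index: int, n_items: int) -> List[float]:
    """Build a feature vector: three history counts plus a one-hot item identity."""
    if not 0 <= item_index < n_items:
        raise ValueError("item_index out of range")
    one_hot = [0.0] * n_items
    one_hot[item_index] = 1.0
    return [float(n_attempts), float(n_correct), float(n_incorrect)] + one_hot


def linear_score(theta: Sequence[float], x: Sequence[float]) -> float:
    """Dot product of the parameters and the features."""
    if len(theta) != len(x):
        raise ValueError("theta and x must have the same length")
    return sum(t * v for t, v in zip(theta, x))


if __name__ == "__main__":
    # Hypothetical parameters: 3 history coefficients + 4 item-identity coefficients.
    theta = [0.1, 0.2, -0.3, 0.5, -0.5, 0.0, 1.0]
    easy = hlr_features(3, 2, 1, item_index=0, n_items=4)
    hard = hlr_features(3, 2, 1, item_index=1, n_items=4)
    # With identical histories, the scores differ only by the item coefficients.
    print(linear_score(theta, easy) - linear_score(theta, hard))
```

With identical study histories, two items differ only through their identity coefficients, which is one way to read the statement that difficulty is absorbed into those coefficients.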

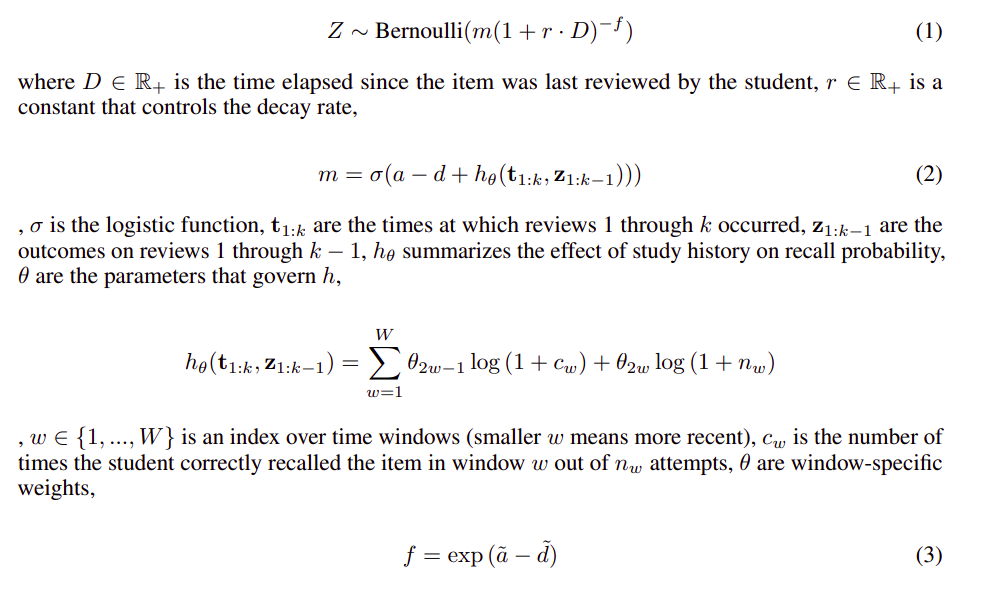

Generalized Power Law - the paper describes this model precisely, and it could not be broken down into smaller parts. It uses a different likelihood for recall, shown in the picture above.

In the last formula in the picture, a and d are the parameters for student ability and item difficulty.
