Batch Normalization

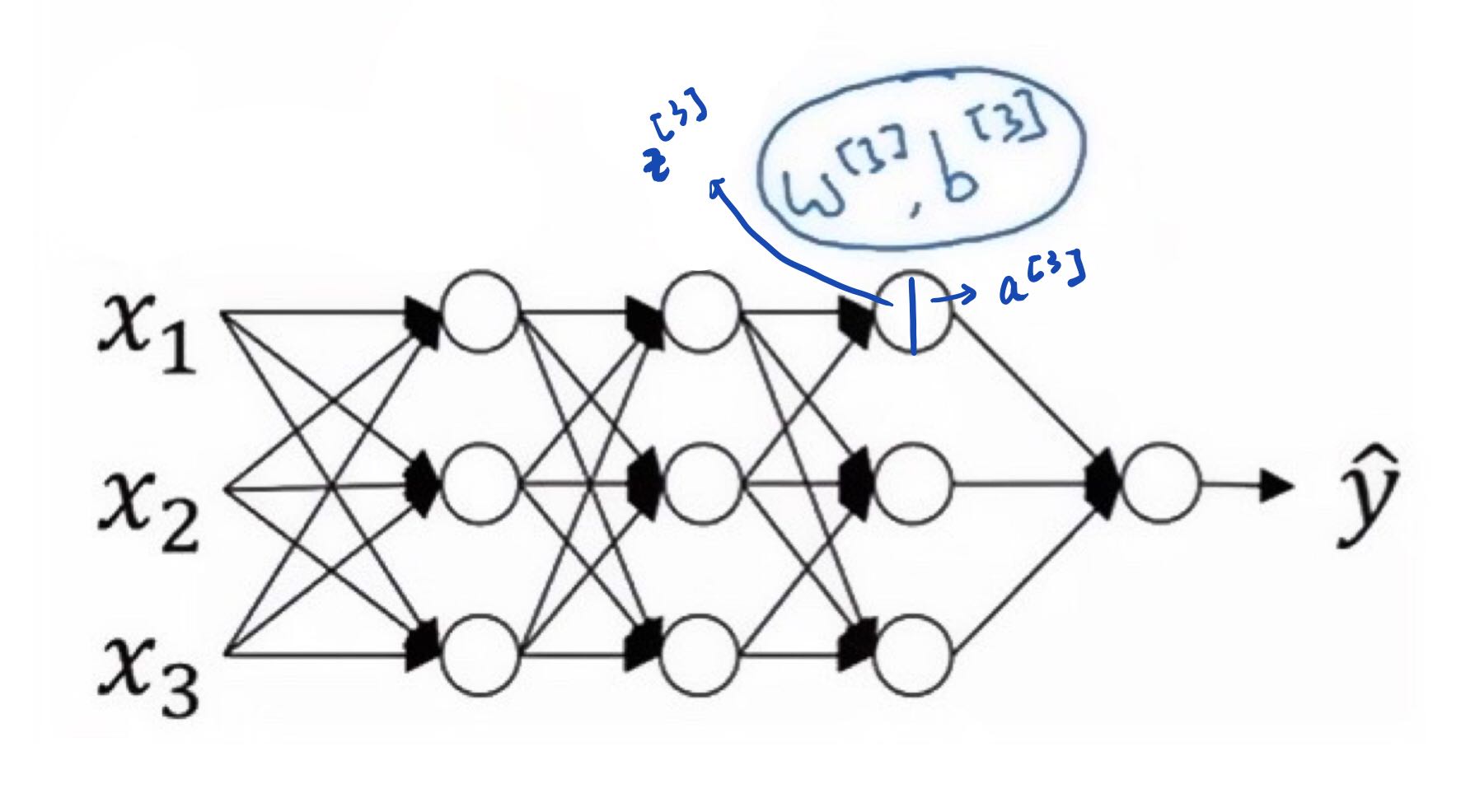

Batch normalization is a technique for accelerating and stabilizing the training of deep neural networks. Mechanistically, it re-centers and rescales intermediate layer activations to a controlled mean and variance, which keeps their distributions from drifting apart across layers and over the course of training. Because these intermediate values stay on a comparable scale, batch normalization permits more aggressive learning rates. The technique was originally motivated by applying the concept of covariate shift to internal layers, but the hypothesis that it works by reducing this so-called internal covariate shift has since been challenged and does not appear to explain its effectiveness. Although batch normalization is intuitively thought to smooth the optimization landscape, the precise mechanism by which it aids training remains an open research question. Despite this theoretical uncertainty, batch normalization has proven indispensable in practice: it is applied in nearly all deployed image classifiers, and the original paper has earned tens of thousands of citations.
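The centering and rescaling step described above can be illustrated with a minimal sketch. It is not a reference implementation. The function name `batch_norm`, the learnable scale `gamma` and shift `beta`, and the stabilizing constant `eps` are assumptions introduced here for illustration. The sketch normalizes a single feature across one mini-batch and covers training-mode behavior only.

```python
import math

def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature across a mini-batch, then scale and shift.

    gamma, beta and eps are illustrative assumptions, not values from the text.
    """
    n = len(batch)
    mean = sum(batch) / n
    # Biased (population) variance of the mini-batch.
    var = sum((v - mean) ** 2 for v in batch) / n
    # Center, rescale to roughly unit variance, then apply scale and shift.
    return [gamma * (v - mean) / math.sqrt(var + eps) + beta for v in batch]

# Hypothetical activations of one unit for a mini-batch of four examples.
activations = [48.0, 52.0, 61.0, 39.0]
normalized = batch_norm(activations)
```

With the default `gamma` and `beta`, the normalized values have a mean of approximately zero and a variance of approximately one, regardless of the scale of the raw activations. Deep learning frameworks typically vectorize this computation over all features and also track running statistics for use at inference time, which this sketch omits.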

Tags

Data Science

D2L

Dive into Deep Learning @ D2L
