Learn Before

Relation

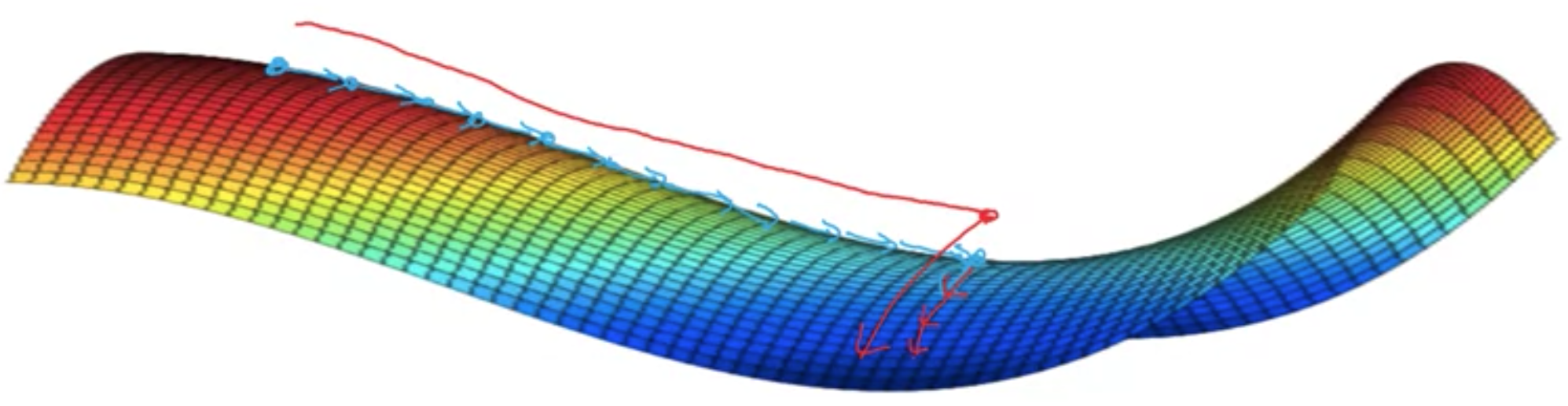

Challenges with Deep Learning Optimizer Algorithms

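- Local optima: in the high-dimensional parameter spaces of deep networks, getting stuck in a poor local optimum is actually unlikely. Most points where the gradient vanishes are saddle points rather than local minima.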

- Cliffs: on the face of an extremely steep cliff structure, the gradient is very large, so a single update step can move the parameters extremely far and overshoot the region being optimized.

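- Inexact gradients: the exact gradient is often unavailable or too expensive to compute, so it is approximated (for example, estimated from a mini-batch), and each update is based on a noisy estimate.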

- Plateaus: where the cost function is nearly flat, the gradient is close to zero, so each update is tiny and learning becomes slow.
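The cliff and plateau cases above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the toy loss `f(w) = w**4`, the learning rate, the starting points, and the helper names `grad`, `clip`, and `step`. Gradient clipping is shown as one common remedy for cliffs; the list above does not prescribe it.

```python
# Toy 1-D loss f(w) = w**4 (hypothetical): steep far from 0, nearly flat near 0.

def grad(w):
    # Analytic gradient of f(w) = w**4.
    return 4.0 * w ** 3

def clip(g, max_norm):
    # Limit the gradient magnitude to max_norm (in 1-D the norm is abs(g)).
    return max(-max_norm, min(max_norm, g))

def step(w, lr, max_norm=None):
    # One plain gradient descent update, optionally with clipping.
    g = grad(w)
    if max_norm is not None:
        g = clip(g, max_norm)
    return w - lr * g

lr = 0.1
w0 = 3.0  # on the steep "cliff" part of the loss

# Without clipping, the huge gradient throws w past the minimum at 0
# and leaves it farther away than where it started.
w_plain = step(w0, lr)

# With clipping, the update is bounded, so w moves a small distance toward 0.
w_clipped = step(w0, lr, max_norm=1.0)

# In the flat region near 0 the gradient is tiny, so the update barely moves w.
w_flat = 0.01
flat_update = lr * grad(w_flat)

print("cliff, no clipping:", w_plain)
print("cliff, clipped:    ", w_clipped)
print("flat-region update:", flat_update)
```

The clipped step trades speed for stability: it makes slower progress on the steep region but cannot jump arbitrarily far in a single update.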

Updated 2026-05-15

Tags

Data Science

Related

Mini-Batch Gradient Descent

Gradient Descent with Momentum

An overview of gradient descent optimization algorithms

Learning Rate Decay

Gradient Descent

Adam (Deep Learning Optimization Algorithm)

RMSprop (Deep Learning Optimization Algorithm)

Nesterov momentum (Deep Learning Optimization Algorithm)

Adam optimization algorithm

Difference between Adam and SGD

Adagrad

Adadelta