Comparison of Prefix Language Modeling and Causal Language Modeling

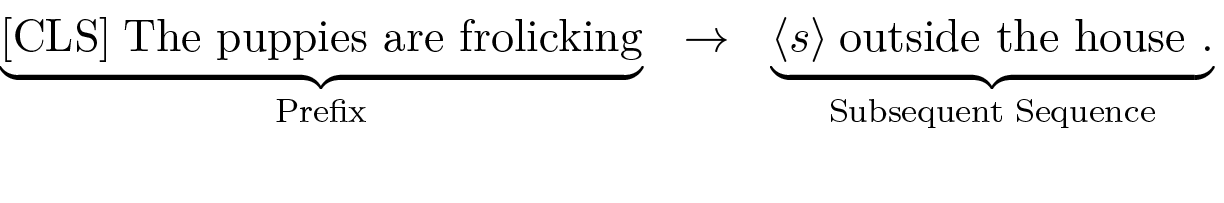

The primary distinction between Prefix Language Modeling (PrefixLM) and Causal Language Modeling (CLM) lies in how the context is processed and where generation begins. In standard CLM, the entire sequence is modeled autoregressively: each token is predicted from all preceding tokens, starting from the first position. PrefixLM instead splits the sequence in two. An encoder first processes an initial prefix non-causally, so every prefix token can attend to every other prefix token at once, and produces a contextual representation. A decoder then conditions on that representation and autoregressively generates only the remainder of the sequence.
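One way to picture the difference is through attention masks. The sketch below is illustrative only and rests on an assumption not stated above: it collapses the encoder and decoder into a single token sequence, so that "non-causal prefix processing" becomes a fully visible block in the mask. The function names `causal_mask` and `prefix_lm_mask` are hypothetical and do not come from any particular library.

```python
import numpy as np


def causal_mask(seq_len: int) -> np.ndarray:
    """Boolean mask where entry [i, j] is True if position i may attend to j.

    Under causal language modeling, position i sees only positions j <= i.
    """
    return np.tril(np.ones((seq_len, seq_len), dtype=bool))


def prefix_lm_mask(seq_len: int, prefix_len: int) -> np.ndarray:
    """Mask for a prefix LM, viewed as one sequence (an assumption of this sketch).

    The first `prefix_len` positions attend to each other in both directions;
    the remaining positions attend causally to the prefix and to earlier
    generated positions.
    """
    if not 0 <= prefix_len <= seq_len:
        raise ValueError("prefix_len must be between 0 and seq_len")
    mask = causal_mask(seq_len)
    # Open up the prefix block so that it is processed non-causally.
    mask[:prefix_len, :prefix_len] = True
    return mask


if __name__ == "__main__":
    # Compare the two masks for a 4-token sequence with a 2-token prefix.
    print(causal_mask(4).astype(int))
    print(prefix_lm_mask(4, 2).astype(int))
```

With a 4-token sequence and a 2-token prefix, the only entry that differs between the two masks is row 0, column 1: the first prefix token may look ahead to the second. Rows for the generated positions are identical in both masks, which matches the description of the continuation being produced autoregressively in either approach.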
