Learn Before

Training Encoder-Decoder Models with Prefix Language Modeling

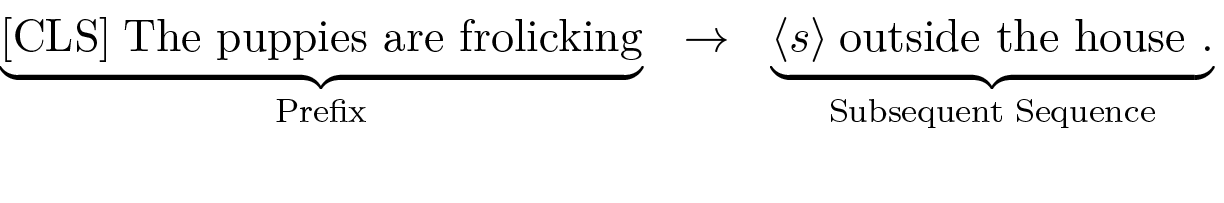

Encoder-decoder models can be trained directly with the Prefix Language Modeling objective. The encoder reads the whole prefix and builds a contextual representation of it, and the decoder is trained to generate the text that follows, conditioned on that representation. The objective suits large-scale pre-training because training examples can be created from unlabeled text alone: any passage can be split into a prefix and a continuation, with no manual annotation required.
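As a rough illustration of how such training examples might be derived from raw text, the sketch below splits a token list at a random point into an encoder input (the prefix) and a decoder target (the continuation). The function name `make_prefix_lm_example`, the `BOS` marker, and the whitespace tokenization are assumptions made for this example only; they are not taken from the course material or from any particular library.

```python
import random

# Hypothetical beginning-of-sequence marker fed to the decoder (an assumption).
BOS = "<bos>"


def make_prefix_lm_example(tokens, rng):
    """Split tokens into (encoder_input, decoder_input, decoder_target)."""
    if len(tokens) < 2:
        raise ValueError("need at least two tokens for a prefix and a continuation")
    # Pick a split point so that both the prefix and the continuation are non-empty.
    split = rng.randint(1, len(tokens) - 1)
    prefix, continuation = tokens[:split], tokens[split:]
    # Teacher forcing: the decoder input is the target shifted right by one
    # position, starting from the BOS marker.
    decoder_input = [BOS] + continuation[:-1]
    return prefix, decoder_input, continuation


# Whitespace splitting stands in for a real tokenizer here.
tokens = "the quick brown fox jumps over the lazy dog".split()
encoder_input, decoder_input, decoder_target = make_prefix_lm_example(
    tokens, random.Random(0)
)
print("encoder input :", encoder_input)
print("decoder input :", decoder_input)
print("decoder target:", decoder_target)
```

In this sketch the encoder would see `encoder_input` all at once, while the decoder is trained to predict each token of `decoder_target` from the preceding tokens in `decoder_input` together with the encoder's output. Because the split point is random, the same document can yield many different training examples.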

References

Reference of Foundations of Large Language Models Course

Tags

Ch.1 Pre-training - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

Comparison of Prefix and Causal Language Modeling

Example of Prefix Language Modeling Input Format

Training Encoder-Decoder Models with Prefix Language Modeling

Consider a model architecture composed of an encoder and a decoder, trained with a self-supervised objective to complete a text sequence given an initial prefix. Which statement best analyzes the distinct processing methods of the encoder and decoder for this task?

Processing a Text Sequence

In a self-supervised text generation task, a model is given an initial sequence of words (a prefix) and trained to produce the words that follow. For an architecture that uses two distinct components to accomplish this, match each component or data piece with its primary role or characteristic.

Example of Prefix Language Modeling

Learn After

A research team aims to pre-train a sequence-to-sequence model for various text generation tasks using a massive, unlabeled text corpus. Their proposed training strategy is as follows: for each document, they will randomly split it into an initial segment and a concluding segment. The model's encoder will process the entire initial segment at once to form a contextual understanding. The model will then be trained to use its decoder to generate the concluding segment, conditioned on the encoder's output. Which of the following statements provides the most accurate evaluation of this strategy for the team's objective?

Comparison of Prefix Language Modeling and Causal Language Modeling

You are preparing a single training example for an encoder-decoder model using a self-supervised objective on a large, unlabeled text document. Arrange the following actions into the correct chronological sequence for one complete training step.

Analyzing a Flawed Pre-training Strategy