Learn Before

Example of Prefix Language Modeling Input Format

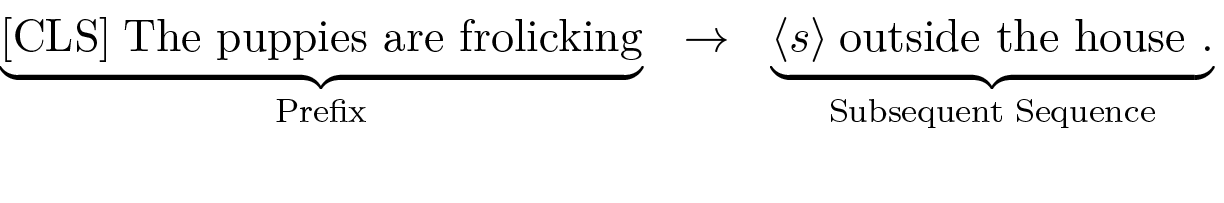

In prefix language modeling, an input text is split into two parts: a prefix and the sequence that follows it. The encoder processes the whole prefix as context, and the decoder autoregressively generates the subsequent sequence as the target.

In this format, the prefix begins with a special token such as [CLS], and the target sequence begins with a start-of-sequence token.
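The partition described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `make_prefix_lm_example`, the whitespace-based word splitting, and the `<s>` start-of-sequence string are all assumptions made for the example (the source names only [CLS] explicitly), and a real model would use its own tokenizer and special tokens.

```python
def make_prefix_lm_example(text, prefix_len, cls_token="[CLS]", sos_token="<s>"):
    """Split text into an encoder prefix and a decoder target.

    Hypothetical helper: whitespace splitting stands in for a real
    tokenizer, and "<s>" is an assumed start-of-sequence token.
    """
    words = text.split()
    if not 0 < prefix_len < len(words):
        raise ValueError("prefix_len must leave a non-empty prefix and target")
    # The encoder sees the prefix, led by the special token.
    encoder_input = [cls_token] + words[:prefix_len]
    # The decoder generates the remainder, led by the start token.
    decoder_sequence = [sos_token] + words[prefix_len:]
    return encoder_input, decoder_sequence


encoder_input, decoder_sequence = make_prefix_lm_example(
    "The sun is shining brightly in the sky.", prefix_len=3
)
print(encoder_input)     # ['[CLS]', 'The', 'sun', 'is']
print(decoder_sequence)  # ['<s>', 'shining', 'brightly', 'in', 'the', 'sky.']
```

Here the first three words form the prefix that the encoder reads in full, while the remaining words form the sequence the decoder is trained to produce one token at a time.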

Tags

Ch.1 Pre-training - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

Comparison of Prefix and Causal Language Modeling

Training Encoder-Decoder Models with Prefix Language Modeling

Consider a model architecture composed of an encoder and a decoder, trained with a self-supervised objective to complete a text sequence given an initial prefix. Which statement best analyzes the distinct processing methods of the encoder and decoder for this task?

Processing a Text Sequence

In a self-supervised text generation task, a model is given an initial sequence of words (a prefix) and trained to produce the words that follow. For an architecture that uses two distinct components to accomplish this, match each component or data piece with its primary role or characteristic.

Example of Prefix Language Modeling

Learn After

A team is tasked with adapting a large, pre-trained language model to summarize legal documents. One developer designs a method where each summarization request includes a detailed set of instructions and examples of high-quality summaries, which are provided to the original, unchanged model. Another developer uses a large dataset of legal documents and their corresponding summaries to make small, permanent adjustments to the model's internal configuration before deploying it. What is the most significant difference between these two approaches regarding the pre-trained model itself?

Analysis of Activation Function Choice in Transformer Architectures

A researcher is preparing a training example for a language model that uses a prefix-based objective. The goal is for the model to learn to complete the sentence 'The sun is shining brightly in the sky.' after being given the first three words as context. Which of the following options correctly partitions the sentence into a prefix and a subsequent sequence for this task?