Learn Before

Denoising Autoencoders

- Traditionally, autoencoders minimize some function $L(x, g(f(x)))$, where $f$ is the encoder, $g$ is the decoder, and $L$ is a loss function penalizing $g(f(x))$ for being dissimilar from $x$, such as the $L^2$ norm of their difference.

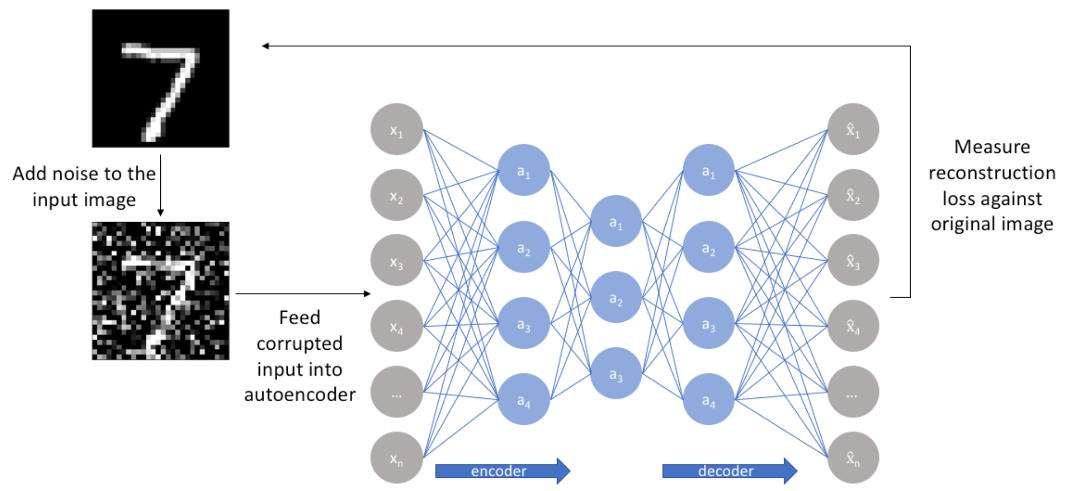

- A denoising autoencoder (DAE) instead minimizes $L(x, g(f(\tilde{x})))$, where $\tilde{x}$ is a copy of $x$ that has been corrupted by some form of noise. Denoising autoencoders must therefore undo this corruption rather than simply copying their input.

- Two assumptions are inherent to this approach: 1) higher-level representations are relatively stable and robust to corruption of the input; 2) to perform denoising well, the model needs to extract features that capture useful structure in the input distribution.

- In other words, denoising is advocated as a training criterion for learning to extract useful features that will constitute better higher-level representations of the input.
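
The difference between the two objectives can be illustrated with a minimal sketch. It is not taken from the source: the function names (`corrupt`, `squared_l2`, `autoencoder_loss`, `denoising_loss`), the choice of masking noise, and the use of the squared $L^2$ distance as the loss are all assumptions made for illustration. The encoder `f` and decoder `g` are passed in as plain Python functions.

```python
import random

def corrupt(x, drop_prob, rng):
    """Masking noise (an assumed corruption): zero each component with probability drop_prob."""
    return [0.0 if rng.random() < drop_prob else v for v in x]

def squared_l2(a, b):
    """Squared L2 distance between two equal-length vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def autoencoder_loss(x, f, g):
    """Standard objective: compare x with its reconstruction g(f(x))."""
    return squared_l2(x, g(f(x)))

def denoising_loss(x, f, g, drop_prob, rng):
    """Denoising objective: reconstruct from a corrupted copy, compare with the clean x."""
    x_tilde = corrupt(x, drop_prob, rng)
    return squared_l2(x, g(f(x_tilde)))

def identity(v):
    """A trivial 'copy the input' encoder/decoder."""
    return v

rng = random.Random(0)
x = [1.0, 2.0, 3.0]

# Copying the input is a perfect solution for the standard objective...
print(autoencoder_loss(x, identity, identity))           # 0.0
# ...but not for the denoising objective once the input is corrupted.
print(denoising_loss(x, identity, identity, 1.0, rng))   # 14.0
```

With `drop_prob=1.0` every component is zeroed, so the identity mapping incurs the full squared norm of `x` as its loss. A model can only lower the denoising loss by mapping corrupted inputs back toward clean ones.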

Tags

Data Science

Foundations of Large Language Models Course

Computing Sciences

Related

What does the Autoencoder try to do?

Putting it all together - AutoEncoder Code

Problems With Autoencoders

Reference to the AutoEncoder Code

Autoencoder Decoder Code

Autoencoder Encoder Sample Code

Denoising Autoencoders

Sparse Autoencoders

Undercomplete Autoencoders

Overcomplete Autoencoders

Regularizing Autoencoder

Autoencoder Depth

Learning Manifolds Using Autoencoder

Drawing Samples From Autoencoders

Learn After

Introduction of Denoising Autoencoders

Vector Field of Denoising Autoencoders

History of MLPs for Denoising Dates

Training Encoder-Decoder Models with a Denoising Autoencoding Objective

An engineer trains two autoencoder models on a large dataset of clean, high-resolution images. Model A is a standard autoencoder, trained to reconstruct the original images perfectly. Model B is a denoising autoencoder, trained to reconstruct the original clean images from input images that have been intentionally corrupted with random noise (e.g., salt-and-pepper noise). After training, both models are evaluated on their ability to reconstruct a new set of images that have a different, unseen type of corruption (e.g., a slight blur). Based on their training objectives, which model is expected to perform better on this new task, and why?

A key modification to the standard autoencoder training process is the introduction of a 'corruption' step to create a more robust model. Arrange the following steps to accurately describe a single training iteration for this modified approach, which aims to reconstruct an original data point from a noisy version of it.

An autoencoder model is trained on a large dataset of facial images. During each training step, a clean image ($x$) is taken, a random rectangular section of it is completely blacked out to create a corrupted version ($\tilde{x}$), and the model is tasked with reconstructing the original, clean image ($x$) from the corrupted input ($\tilde{x}$). Which of the following best explains what the model must learn about the data distribution to succeed at this specific task?
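
The corruption step described in this scenario can be sketched as follows. This is a rough illustration under stated assumptions, not a reference implementation: the helper name `black_out_rectangle`, the representation of an image as a list of rows of floats, and the fixed rectangle size are all hypothetical choices.

```python
import random

def black_out_rectangle(image, rect_h, rect_w, rng):
    """Return a copy of `image` with one randomly placed rect_h x rect_w block set to 0.0.

    `image` is assumed to be a non-empty list of equal-length rows of floats.
    """
    height, width = len(image), len(image[0])
    # Pick the top-left corner so the rectangle fits entirely inside the image.
    top = rng.randrange(height - rect_h + 1)
    left = rng.randrange(width - rect_w + 1)
    corrupted = [row[:] for row in image]  # copy rows; the clean image stays untouched
    for r in range(top, top + rect_h):
        for c in range(left, left + rect_w):
            corrupted[r][c] = 0.0
    return corrupted

rng = random.Random(0)
x = [[1.0] * 6 for _ in range(4)]             # a toy 4x6 "clean image"
x_tilde = black_out_rectangle(x, 2, 3, rng)   # corrupted input for the model

for row in x_tilde:
    print(row)
```

During training, `x_tilde` would be fed to the model while the clean `x` remains the reconstruction target.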