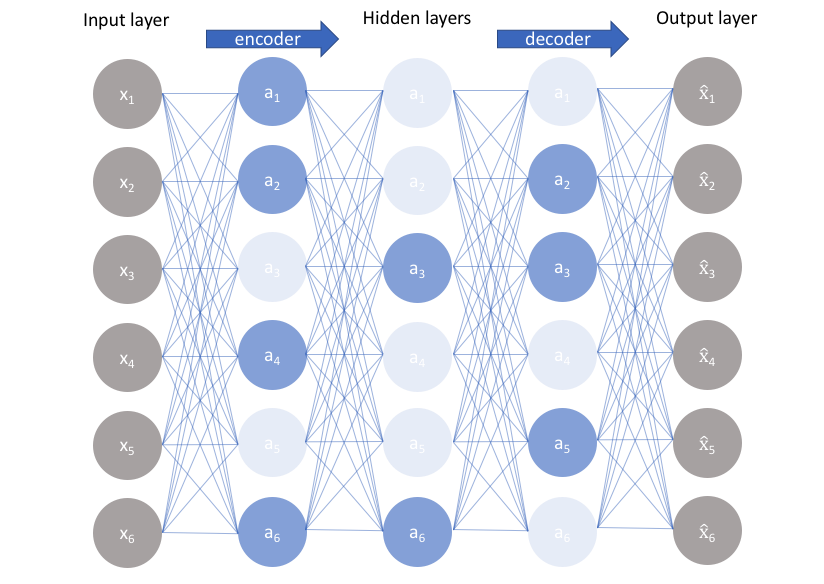

Sparse Autoencoders

- It is an autoencoder whose training criterion adds a sparsity penalty $\Omega(h)$ on the code layer $h$ to the reconstruction error: $L(x, g(f(x))) + \Omega(h)$, where $g(h)$ is the decoder output and $h = f(x)$ is the encoder output.

- It can be viewed as a framework that approximates maximum likelihood training of a generative model that has hidden (latent) variables.

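- Consider a model with visible variables $x$ and hidden variables $h$, with an explicit joint distribution $p_{\text{model}}(x, h) = p_{\text{model}}(h)\, p_{\text{model}}(x \mid h)$. The log-likelihood can be decomposed as $\log p_{\text{model}}(x) = \log \sum_h p_{\text{model}}(h, x)$. We can think of the autoencoder as approximating this sum with a point estimate for just one highly likely value of $h$. With this chosen $h$, we are maximizing $\log p_{\text{model}}(h, x) = \log p_{\text{model}}(h) + \log p_{\text{model}}(x \mid h)$. Taking a Laplace prior $p_{\text{model}}(h_i) = \frac{\lambda}{2} e^{-\lambda |h_i|}$ and expressing the log-prior as an absolute value penalty, we obtain $\Omega(h) = \lambda \sum_i |h_i|$ and $-\log p_{\text{model}}(h) = \sum_i \left(\lambda |h_i| - \log \frac{\lambda}{2}\right) = \Omega(h) + \text{const}$, where the constant depends only on $\lambda$ and not on $h$.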
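The points above can be made concrete with a small numerical sketch. It is only an illustration under stated assumptions: the ReLU encoder, the linear decoder, the squared-error reconstruction loss, the toy weights, and every function name here are hypothetical choices, not something the notes prescribe. It computes the loss "reconstruction error plus absolute value penalty" and checks that the negative log of a factorized Laplace prior differs from the penalty only by a constant.

```python
import math

def encode(x, W, b):
    """Hypothetical encoder f(x): affine map followed by ReLU."""
    return [max(0.0, sum(w * x_j for w, x_j in zip(row, x)) + b_i)
            for row, b_i in zip(W, b)]

def decode(h, V, c):
    """Hypothetical linear decoder g(h)."""
    return [sum(v * h_j for v, h_j in zip(row, h)) + c_i
            for row, c_i in zip(V, c)]

def reconstruction_error(x, r):
    """Squared error, used here as an assumed choice for L(x, g(f(x)))."""
    return sum((x_i - r_i) ** 2 for x_i, r_i in zip(x, r))

def sparsity_penalty(h, lam):
    """Absolute value penalty: Omega(h) = lam * sum_i |h_i|."""
    return lam * sum(abs(h_i) for h_i in h)

def sparse_autoencoder_loss(x, W, b, V, c, lam):
    """Reconstruction error plus sparsity penalty on the code h = f(x)."""
    h = encode(x, W, b)
    r = decode(h, V, c)
    return reconstruction_error(x, r) + sparsity_penalty(h, lam)

def neg_log_laplace_prior(h, lam):
    """-log p(h) for the prior p(h_i) = (lam / 2) * exp(-lam * |h_i|)."""
    return -sum(math.log(lam / 2.0) - lam * abs(h_i) for h_i in h)

# Toy values, chosen arbitrarily for illustration.
x = [1.0, -2.0, 0.5]
W = [[0.5, -0.1, 0.3], [0.2, 0.4, -0.6]]    # 2 code units, 3 inputs
b = [0.0, 0.1]
V = [[1.0, 0.0], [0.0, 1.0], [0.5, -0.5]]   # 3 outputs, 2 code units
c = [0.0, 0.0, 0.0]
lam = 0.1

h = encode(x, W, b)
loss = sparse_autoencoder_loss(x, W, b, V, c, lam)

# The constant depends only on lam and the code size, not on the values in h.
const = -len(h) * math.log(lam / 2.0)
gap = neg_log_laplace_prior(h, lam) - (sparsity_penalty(h, lam) + const)
print("code h:", h)
print("loss:", loss)
print("gap between -log p(h) and Omega(h) + const:", gap)
```

Because the constant does not depend on $h$, minimizing the penalized loss and maximizing the log-prior term pull the code in the same direction. In a real setting the weights would be learned by gradient descent rather than fixed by hand as they are here.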


Updated 2021-07-23

Tags

Data Science

Related

What does the Autoencoder try to do ?

Putting it all together - AutoEncoder Code

Problems With Autoencoders

Reference to the AutoEncoder Code

Autoencoder Decoder Code

Autoencoder Encoder Sample Code

Denoising Autoencoders

Sparse Autoencoders

Undercomplete Autoencoders

Overcomplete Autoencoders

Regularizing Autoencoder

Autoencoder Depth

Learning Manifolds Using Autoencoder

Drawing Samples From Autoencoders