Learn Before

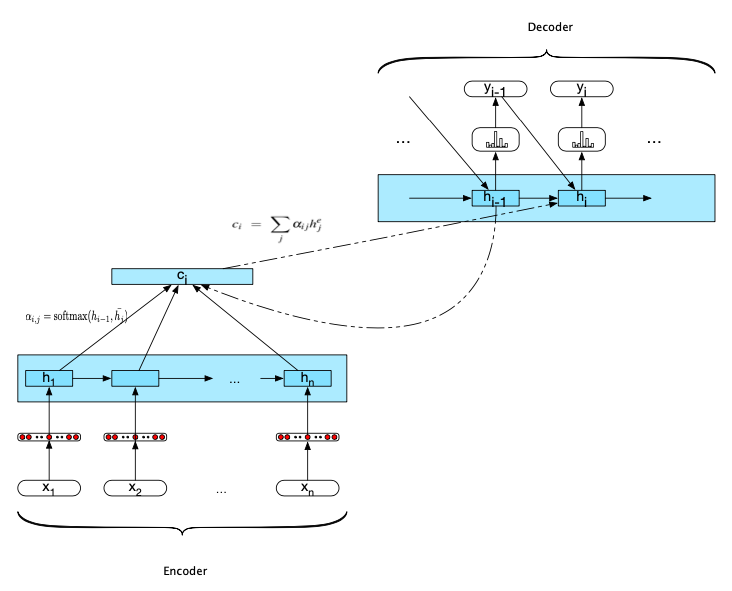

Encoder-decoder network with attention

At each decoding step, the decoder's current hidden state is computed from three inputs: the previous decoder hidden state, the previously generated word, and the current context vector. The context vector comes from the attention computation, which compares the previous decoder hidden state with every encoder hidden state and uses the resulting scores to weight the encoder states.
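The step described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the text does not say how the comparison is scored, so dot-product scoring with a softmax is assumed here, and the `tanh` update with the weight matrix `W` is a hypothetical stand-in for whatever recurrent cell (for example a GRU or LSTM) the real decoder uses. All function and variable names are made up for this example.

```python
import math

def dot(u, v):
    """Dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_context(prev_decoder_state, encoder_states):
    """Compare the previous decoder state to every encoder state
    (assumed: dot-product scoring) and return the weighted sum of
    encoder states together with the attention weights."""
    scores = [dot(prev_decoder_state, h) for h in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    context = [
        sum(w * h[i] for w, h in zip(weights, encoder_states))
        for i in range(dim)
    ]
    return context, weights

def decoder_step(prev_decoder_state, prev_word_embedding, context, W):
    """Toy decoder update (assumption, not a real RNN cell): apply a
    linear map W to the concatenated inputs, then tanh."""
    x = prev_decoder_state + prev_word_embedding + context
    return [math.tanh(dot(row, x)) for row in W]

# Tiny example with 3 encoder states of dimension 2.
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
prev_state = [1.0, 0.0]
prev_word = [0.5, -0.5]           # hypothetical embedding of the last word
W = [[0.1] * 6, [-0.1] * 6]       # hypothetical 2 x 6 weight matrix

context, weights = attention_context(prev_state, encoder_states)
new_state = decoder_step(prev_state, prev_word, context, W)
```

In this example the first and third encoder states score equally against `prev_state` and receive the largest weights, so the context vector is pulled toward them. A fresh context vector is computed this way at every decoding step.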

Tags

Data Science

D2L

Dive into Deep Learning @ D2L

Learn After

Neural Machine Translation by Jointly Learning to Align and Translate

Effective Approaches to Attention-based Neural Machine Translation

Attention Motivation

Example of how Attention is used in Machine Translation

The Illustrated Transformer

Attention Is All You Need

Attention is all you need; Attentional Neural Network Models | Łukasz Kaiser | Masterclass

Tensor2Tensor Intro

Transformer model

Transformer

Efficient Transformers: A Survey

Evaluation of Efficient Transformers