Transformer model

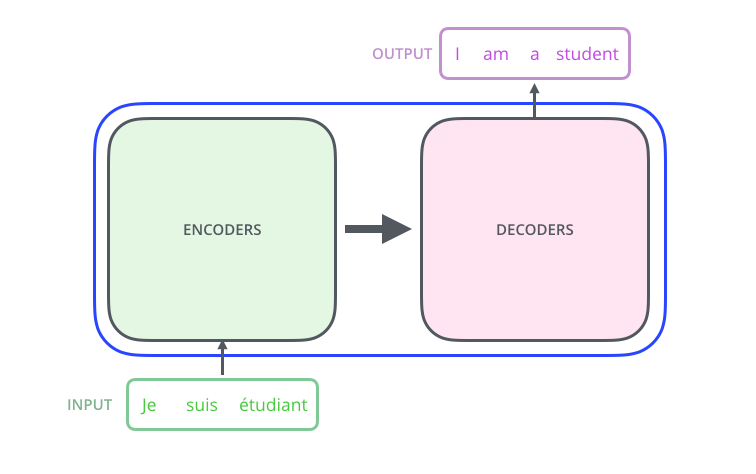

The concept of attention dramatically improved the sequence-to-sequence (seq2seq) model, and the idea has been developed much further since the two main papers on attention were released. One result of that work is the paper “Attention Is All You Need.” It introduced a model called the “Transformer” that handles sequential data using only attention mechanisms, with no RNNs. The model lends itself to parallelization and runs very well on GPUs.

One of the main problems with an RNN is that it is hard to parallelize: before we can evaluate one time step in the encoder, we need the result of the previous one. The Transformer has no such step-to-step dependency, so you will see that it is easy to parallelize. As before, let’s consider the example of machine translation. As before, the model consists of two parts:

- Encoders

- Decoders
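To make the parallelization argument concrete, here is a minimal sketch in plain Python. It is a toy, not the architecture from the paper: the inputs are scalars, the names `rnn_states` and `attend` and the weights `w_x` and `w_h` are made up for illustration, and the attention uses the inputs directly as queries, keys, and values (no learned projections, no multiple heads). The point is only the dependency structure: the RNN loop must carry `h` from one time step to the next, while each attention output depends only on the inputs and can be computed in any order.

```python
import math


def rnn_states(xs, w_x=0.5, w_h=0.9):
    """Toy scalar RNN: every state needs the previous one, so the loop is sequential."""
    h = 0.0
    states = []
    for x in xs:
        h = math.tanh(w_x * x + w_h * h)  # depends on h from the previous time step
        states.append(h)
    return states


def attend(i, xs):
    """Toy self-attention output for position i (queries = keys = values = xs)."""
    scores = [xs[i] * x_j for x_j in xs]         # similarity of position i to every position
    top = max(scores)                            # subtract the max for a stable softmax
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return sum(w / total * x_j for w, x_j in zip(weights, xs))


xs = [0.1, -0.4, 0.7, 0.2]

# The RNN has to walk the sequence from left to right.
states = rnn_states(xs)

# Attention outputs do not depend on each other, so the evaluation order is free.
in_order = [attend(i, xs) for i in range(len(xs))]
shuffled = {i: attend(i, xs) for i in [2, 0, 3, 1]}
assert all(shuffled[i] == in_order[i] for i in range(len(xs)))
```

Because no call to `attend` needs the result of another, all positions could be handed to separate workers at once, which is what a GPU does when the same computation is written as matrix multiplications.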

Tags

Data Science

Related

Neural Machine Translation by Jointly Learning to Align and Translate

Effective Approaches to Attention-based Neural Machine Translation

Attention Motivation

Example of how Attention is used in Machine Translation

The Illustrated Transformer

Attention Is All You Need

Attention is all you need; Attentional Neural Network Models | Łukasz Kaiser | Masterclass

Tensor2Tensor Intro

Transformer model

Transformer

Efficient Transformers: A Survey

Evaluation of Efficient Transformers