Learn Before

Transformer Decoder

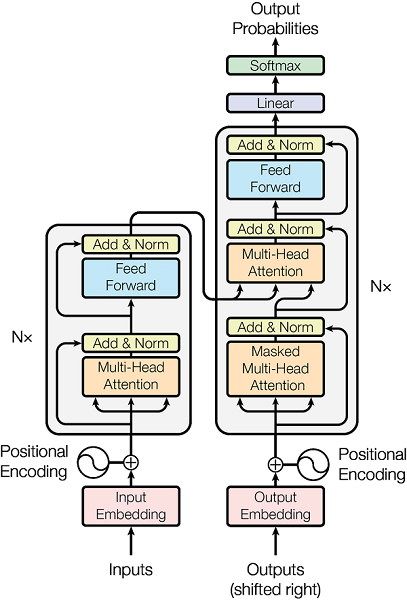

The image shows the structure of the decoder, which is very similar to the encoder described earlier. The main difference is the encoder-decoder attention layer in each decoder block: the keys (K) and values (V) are computed from the encoder's representation of the input sequence, while the queries come from the previous decoder layer. Decoder queries are therefore compared with encoder keys, just as in a standard seq2seq model with attention. The second difference is a layer of so-called masked self-attention. It is ordinary self-attention, except that at each time step the query is not compared with keys from future positions.
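The two decoder attention layers described above can be sketched in a few lines of NumPy. This is a minimal illustration, not a full decoder block: the learned query/key/value projections, multiple heads, residual connections, layer normalization, and the feed-forward sub-layer are all omitted, and the names `attention`, `causal_mask`, `enc_out`, and `dec_in` are hypothetical, chosen only for this example.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax; exp(-inf) evaluates to 0 for masked entries.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(q, k, v, mask=None):
    # Scaled dot-product attention for a single sequence (no batch, one head).
    d_k = q.shape[-1]
    scores = q @ k.T / np.sqrt(d_k)
    if mask is not None:
        # Positions where mask is False are excluded from the softmax.
        scores = np.where(mask, scores, -np.inf)
    weights = softmax(scores, axis=-1)
    return weights @ v, weights

def causal_mask(n):
    # Lower-triangular mask: position i may attend only to positions <= i.
    return np.tril(np.ones((n, n), dtype=bool))

rng = np.random.default_rng(0)
d_model = 8                                # assumed toy dimension
enc_out = rng.normal(size=(5, d_model))    # stand-in for encoder output, 5 source tokens
dec_in = rng.normal(size=(3, d_model))     # stand-in for decoder states, 3 target tokens

# 1) Masked self-attention: queries, keys, and values all come from the decoder,
#    and future positions are masked out.
self_out, self_w = attention(dec_in, dec_in, dec_in, mask=causal_mask(3))

# 2) Encoder-decoder attention: queries come from the decoder,
#    keys and values come from the encoder output.
cross_out, cross_w = attention(self_out, enc_out, enc_out)
```

In this sketch, `self_w` is a 3×3 matrix whose entries above the diagonal are zero, so no target position receives weight from a later one, while `cross_w` is a 3×5 matrix in which every target position can attend to every source position.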

Tags

Data Science

Foundations of Large Language Models Course

Computing Sciences

Learn After

Core Components of a Transformer Decoding Network

Masked Self-Attention in Transformer Decoders

A developer is building a model designed to generate text sequentially, where each new word is predicted based on the words that came before it. They consider modifying the model by removing the specific constraint that prevents a position in the sequence from attending to subsequent positions. What is the most likely consequence of this change on the model's training and generation capabilities?

A standard Transformer decoder block contains two distinct attention sub-layers. Which statement accurately differentiates the roles and data sources for these two sub-layers?

Within a single decoder block of a standard Transformer architecture, information is processed through three main computational sub-layers. Arrange these sub-layers in the correct operational sequence.