Learn Before

Encoder-Decoder with Transformers

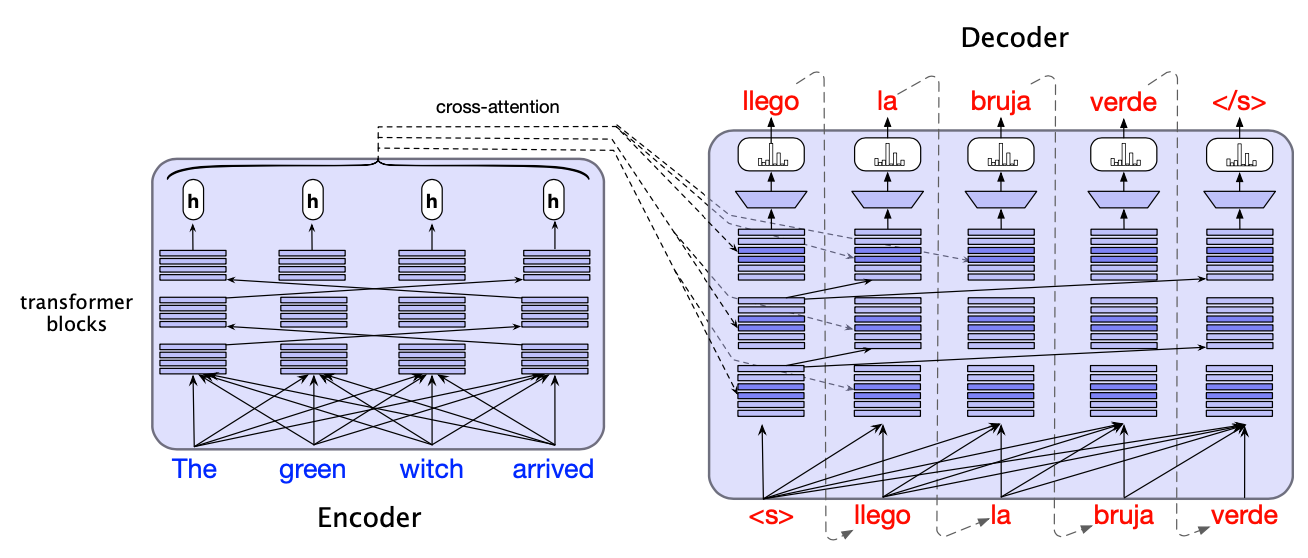

The encoder-decoder architecture can also be implemented using transformers. It consists of:

- An encoder that takes the source-language input words and maps them to an output representation, usually via stacked encoder blocks.
- A decoder that is similar to the one in the encoder-decoder RNN. However, each decoder transformer block includes an extra cross-attention layer, which lets the decoder attend to the encoder's output for the source-language input.
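To make the cross-attention step concrete, here is a minimal sketch in plain Python. It assumes a single attention head and omits the learned query, key, and value projections that a real transformer block would apply, so it shows only the core idea: queries come from the decoder, while keys and values come from the encoder output. The function name `cross_attention` and the toy vectors are illustrative, not taken from any particular library.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def cross_attention(decoder_states, encoder_outputs):
    """Scaled dot-product cross-attention (simplified, hypothetical helper).

    Queries are the decoder states; keys and values are both the encoder
    outputs. Learned projections and multiple heads are left out.
    """
    d = len(encoder_outputs[0])
    contexts, all_weights = [], []
    for query in decoder_states:
        # Score this target position against every source position.
        scores = [dot(query, key) / math.sqrt(d) for key in encoder_outputs]
        weights = softmax(scores)
        # Weighted average of the encoder outputs (the values).
        context = [
            sum(w * value[i] for w, value in zip(weights, encoder_outputs))
            for i in range(d)
        ]
        contexts.append(context)
        all_weights.append(weights)
    return contexts, all_weights


# Toy example: 3 source positions, 2 target positions, dimension 2.
encoder_outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
decoder_states = [[2.0, 0.0], [0.0, 2.0]]
contexts, weights = cross_attention(decoder_states, encoder_outputs)
```

Each row of `weights` is a distribution over the source positions for one target position, and each row of `contexts` is the corresponding mixture of encoder outputs that the decoder block would pass on to its next sub-layer.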

Tags

Data Science

Related

Encoder

Decoder

Context vector

Encoder-Decoder with Transformers

Multi-lingual Pre-training for Encoder-Decoder Models

Mathematical Formulation of an Encoder-Decoder Model

Seq2seq Models for Text Generation

Auto-Regressive Decoding in Machine Translation

Applying Encoder-Decoder Architectures to NLP via the Text-to-Text Framework

A sequence-to-sequence model is designed to translate English sentences into French. When given the English input, 'The quick brown fox jumps over the lazy dog,' the model produces the French output, 'Où est la bibliothèque?' ('Where is the library?'). The generated French sentence is grammatically perfect and fluent, but it is completely unrelated to the meaning of the English input. Based on this specific failure, which component of the underlying architecture is most likely the primary source of the error?

Diagnosing an Architectural Flaw in a Summarization Model

Arrange the following events to accurately describe the flow of information in a standard encoder-decoder architecture for a sequence-to-sequence task.

Your team is pretraining an internal T5-style enco...

Your company wants one internal model to support m...

Your team is pretraining an internal T5-style mode...

Your team is building a single internal T5-style t...

Diagnosing a T5-Style Model That Ignores Task Prefixes After Span-Denoising Pretraining

Choosing Between Span-Denoising Pretraining and Task-Specific Fine-Tuning in a T5-Style Text-to-Text System

Designing a Unified Text-to-Text Model and Pretraining Objective for Multiple NLP Features

Root-Cause Analysis of a T5-Style Model Producing Fluent but Unfaithful Outputs

Selecting an Architecture and Pretraining Objective for a Unified Internal NLP Service

Post-Pretraining Data Formatting Bug in a T5-Style Text-to-Text Service

Pre-training Encoder-Decoder Models via Masked Language Modeling