Root-Cause Analysis of a T5-Style Model Producing Fluent but Unfaithful Outputs

You are rolling out a single internal NLP service based on an encoder–decoder model in a T5-style text-to-text setup. Every request is formatted as plain text with an instruction prefix (e.g., "summarize:", "translate en->de:", "extract entities:") followed by the user content, and the model always generates a text output.
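The request convention can be sketched as a small formatter. This is a minimal sketch that assumes a fixed table holding the three prefixes quoted above. `TASK_PREFIXES` and `format_request` are hypothetical names, not part of any described service API.

```python
# Hypothetical prefix table built from the examples in the text.
TASK_PREFIXES = {
    "summarize": "summarize:",
    "translate_en_de": "translate en->de:",
    "extract_entities": "extract entities:",
}


def format_request(task: str, content: str) -> str:
    """Build the plain-text encoder input: instruction prefix, a space, then the user content."""
    if task not in TASK_PREFIXES:
        raise ValueError(f"unknown task: {task!r}")
    return f"{TASK_PREFIXES[task]} {content.strip()}"


print(format_request("extract_entities", "Contract signed by Acme Corp on 2024-01-12 for $2.3M."))
# prints: extract entities: Contract signed by Acme Corp on 2024-01-12 for $2.3M.
```

Every task shares one input string format and one output type (text), so a single model and decoding loop can serve all of them.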

After pretraining, the team reports a consistent failure mode across multiple downstream tasks: outputs are fluent and on-topic for the instruction, but they often ignore key facts from the provided input. For example:

- Input: "summarize: The incident report states the outage lasted 17 minutes and affected only EU customers." Output: "A brief outage impacted customers for about an hour across multiple regions."

- Input: "extract entities: Contract signed by Acme Corp on 2024-01-12 for $2.3M." Output: "Acme Corp; 2023-12-01; $3.0M"
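A crude way to make "ignores key facts" measurable is to check whether fact-like tokens in the output actually occur in the input. The sketch below is a hypothetical lexical heuristic, not a standard faithfulness metric. It assumes that ISO dates, dollar amounts, and bare numbers stand in for the key facts, and `FACT_PATTERN` and `unsupported_facts` are illustrative names.

```python
import re

# Hypothetical heuristic: ISO dates, dollar amounts (optionally suffixed M/K),
# and bare numbers stand in for "key facts". Alternatives are tried in order,
# so a date is matched whole before its digits could match as plain numbers.
FACT_PATTERN = re.compile(r"\d{4}-\d{2}-\d{2}|\$\d+(?:\.\d+)?[MK]?|\d+(?:\.\d+)?")


def unsupported_facts(source: str, output: str) -> list[str]:
    """Return fact-like tokens in `output` that never appear among the source's facts."""
    source_facts = set(FACT_PATTERN.findall(source))
    return [fact for fact in FACT_PATTERN.findall(output) if fact not in source_facts]


entity_src = "extract entities: Contract signed by Acme Corp on 2024-01-12 for $2.3M."
entity_out = "Acme Corp; 2023-12-01; $3.0M"
summary_src = ("summarize: The incident report states the outage lasted 17 minutes "
               "and affected only EU customers.")
summary_out = "A brief outage impacted customers for about an hour across multiple regions."

print(unsupported_facts(entity_src, entity_out))    # ['2023-12-01', '$3.0M']
print(unsupported_facts(summary_src, summary_out))  # []
```

The check flags both fabricated values in the entity example. It returns nothing for the summary example, because "about an hour" and "multiple regions" contain no digits. Paraphrased distortions like these need a stronger comparison than token matching.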

You inspect the pretraining pipeline and find that it uses span-based denoising with sentinel tokens. However, the data engineer implemented the decoder target as the entire original, uncorrupted text. In other words, the decoder is trained to reproduce the full original sequence, including every token the encoder already sees unmasked. The standard T5-style target instead concatenates only the missing spans, each preceded by its sentinel token.
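The two target formats can be contrasted with a short sketch. This is a minimal illustration, not the team's pipeline. It assumes whitespace tokenization and hand-picked spans, and it uses `<extra_id_N>` sentinel names after the convention of common T5 tokenizers (treat the names as an assumption). `corrupt_spans` and `buggy_target` are hypothetical helpers. The example sentence is borrowed from the T5 paper's span-corruption illustration.

```python
def corrupt_spans(tokens: list[str], spans: list[tuple[int, int]]) -> tuple[list[str], list[str]]:
    """Span corruption with sentinels.

    Each half-open (start, end) span in `tokens` is replaced by one sentinel in the
    encoder input. The standard T5-style target lists each sentinel followed by the
    tokens it replaced, closed by one extra sentinel.
    """
    enc: list[str] = []
    tgt: list[str] = []
    prev = 0
    for i, (start, end) in enumerate(spans):
        if not prev <= start < end <= len(tokens):
            raise ValueError("spans must be sorted, non-empty, non-overlapping, and in range")
        sentinel = f"<extra_id_{i}>"     # assumed sentinel naming
        enc.extend(tokens[prev:start])   # keep the unmasked tokens before this span
        enc.append(sentinel)             # one sentinel per dropped span
        tgt.append(sentinel)
        tgt.extend(tokens[start:end])    # target holds only the dropped tokens
        prev = end
    enc.extend(tokens[prev:])
    tgt.append(f"<extra_id_{len(spans)}>")  # final sentinel closes the target
    return enc, tgt


def buggy_target(tokens: list[str]) -> list[str]:
    """The pipeline bug: the decoder target is the whole uncorrupted text."""
    return list(tokens)


tokens = "Thank you for inviting me to your party last week".split()
enc, tgt = corrupt_spans(tokens, [(2, 4), (8, 9)])
print(" ".join(enc))  # Thank you <extra_id_0> me to your party <extra_id_1> week
print(" ".join(tgt))  # <extra_id_0> for inviting <extra_id_1> last <extra_id_2>

# How many buggy-target tokens the encoder can already see unmasked.
bug = buggy_target(tokens)
visible = sum(1 for t in enc if not t.startswith("<extra_id_"))
print(f"buggy target: {visible}/{len(bug)} tokens already visible to the encoder")
# prints: buggy target: 7/10 tokens already visible to the encoder
```

In the standard target, the decoder emits only the three dropped tokens plus sentinels. In the buggy target, 7 of the 10 target tokens are already present verbatim in the encoder input.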

As the model owner, analyze how this specific pretraining-target mistake would change what the encoder and decoder learn in an encoder–decoder network, and explain why that would plausibly lead to the observed "instruction-following but input-unfaithful" behavior in a text-to-text system. Provide one concrete correction to the pretraining objective/format that would directly address the issue.


Tags

Ch.1 Pre-training - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Data Science

Related

T5 Sample Format

Critique of the T5 Text-to-Text Approach

A developer is using a unified model that frames all natural language processing problems as a text-to-text task. The goal is to build a feature that extracts the main subjects from a sentence. Given the input text 'Instruction: Identify the subjects. Text: The cat and the dog played in the yard.', which of the following outputs best demonstrates the model's core operational principle?

A key principle of a unified text-to-text model is its ability to handle diverse natural language processing tasks by framing them as a transformation from an input text to an output text. Match each traditional NLP task with the most appropriate input/output text pair that represents how this type of model would process it.

Designing a Unified Text-to-Text Model and Pretraining Objective for Multiple NLP Features

Diagnosing a T5-Style Model That Ignores Task Prefixes After Span-Denoising Pretraining

Choosing Between Span-Denoising Pretraining and Task-Specific Fine-Tuning in a T5-Style Text-to-Text System

Selecting an Architecture and Pretraining Objective for a Unified Internal NLP Service

Post-Pretraining Data Formatting Bug in a T5-Style Text-to-Text Service

Root-Cause Analysis of a T5-Style Model Producing Fluent but Unfaithful Outputs

Your team is building a single internal T5-style t...

Your company wants one internal model to support m...

Your team is pretraining an internal T5-style mode...

Your team is pretraining an internal T5-style enco...

Training Process for Text-to-Text Models

T5 Model as a Text-to-Text System

A developer is using a single, unified model that processes all tasks by mapping an input text string to an output text string. The developer wants to perform a summarization task on the following article: 'Jupiter is the fifth planet from the Sun and the largest in the Solar System. It is a gas giant with a mass more than two and a half times that of all the other planets in the Solar System combined.' Which of the following input/output pairs correctly frames this task for such a model?

Evaluating a Unified NLP Approach

A key advantage of the text-to-text framework is its ability to represent a wide variety of Natural Language Processing (NLP) tasks using a single, unified format. Match each traditional NLP task with its corresponding text-to-text formulation.

An encoder-decoder model is being trained with a span-based denoising objective. The encoder is given the following corrupted input text: 'To learn about the solar system, we first study <mask_0> and then move on to <mask_1> planets.' The original, uncorrupted text for the masked spans is '<mask_0>' = 'the Sun' and '<mask_1>' = 'the other'. What should the target output sequence for the decoder be in this training step?

Analysis of Denoising Training Objectives

Debugging a Span-Based Denoising Training Pipeline

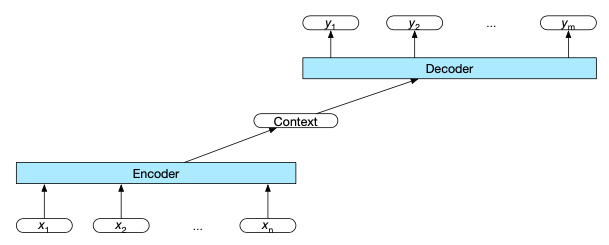

Encoder

Decoder

Context vector

Encoder-Decoder with Transformers

Multi-lingual Pre-training for Encoder-Decoder Models

Mathematical Formulation of an Encoder-Decoder Model

Seq2seq Models for Text Generation

Auto-Regressive Decoding in Machine Translation

Applying Encoder-Decoder Architectures to NLP via the Text-to-Text Framework

A sequence-to-sequence model is designed to translate English sentences into French. When given the English input, 'The quick brown fox jumps over the lazy dog,' the model produces the French output, 'Où est la bibliothèque?' ('Where is the library?'). The generated French sentence is grammatically perfect and fluent, but it is completely unrelated to the meaning of the English input. Based on this specific failure, which component of the underlying architecture is most likely the primary source of the error?

Diagnosing an Architectural Flaw in a Summarization Model

Arrange the following events to accurately describe the flow of information in a standard encoder-decoder architecture for a sequence-to-sequence task.

Pre-training Encoder-Decoder Models via Masked Language Modeling