F1 Score

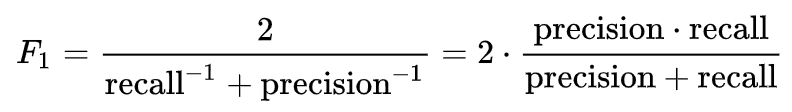

In statistical analysis of binary classification, the F1 score is a measure of a test's accuracy, defined as the harmonic mean of precision and recall (not their arithmetic average). Its highest possible value is 1.0, indicating perfect precision and recall; its lowest possible value is 0, reached when either precision or recall is zero.
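As a concrete illustration of the harmonic mean of precision and recall, the following is a minimal sketch that computes the F1 score from raw counts. The function name `f1_from_counts` and the example counts are assumptions chosen for illustration; returning 0 when a denominator is zero is one common convention, not the only one.

```python
def f1_from_counts(tp: int, fp: int, fn: int) -> float:
    """Return the F1 score given true positives, false positives, false negatives."""
    # Precision: fraction of predicted positives that are correct.
    precision = tp / (tp + fp) if (tp + fp) > 0 else 0.0
    # Recall: fraction of actual positives that were found.
    recall = tp / (tp + fn) if (tp + fn) > 0 else 0.0
    # Assumed convention: F1 is 0 when both precision and recall are 0.
    if precision + recall == 0:
        return 0.0
    # Harmonic mean of precision and recall.
    return 2 * precision * recall / (precision + recall)


# Illustrative counts (made up): precision = 0.8, recall = 0.5.
example_f1 = f1_from_counts(tp=8, fp=2, fn=8)
print(round(example_f1, 4))
```

With precision 0.8 and recall 0.5, the harmonic mean is about 0.615, lower than the arithmetic mean of 0.65, because the harmonic mean is pulled toward the smaller of the two values.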

Applications of the F1 score include evaluating search, document classification, and query classification performance. The F-score is also widely used in the natural language processing literature, for example in the evaluation of named entity recognition and word segmentation.

Common criticisms of the F1 score are that it gives equal importance to precision and recall, and that it does not take true negatives into account, which makes it susceptible to bias when the classes are imbalanced.
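The point about true negatives can be checked numerically. The sketch below uses hypothetical helper names (`f1_ignoring_tn`, `accuracy`) and made-up counts: it holds the true positives, false positives, and false negatives fixed while varying only the number of true negatives.

```python
def f1_ignoring_tn(tp: int, fp: int, fn: int, tn: int) -> float:
    """F1 from a full confusion matrix; tn is accepted but never used."""
    denominator = 2 * tp + fp + fn
    # Equivalent closed form of the harmonic mean of precision and recall.
    return 2 * tp / denominator if denominator > 0 else 0.0


def accuracy(tp: int, fp: int, fn: int, tn: int) -> float:
    """Plain accuracy, which does depend on true negatives."""
    total = tp + fp + fn + tn
    return (tp + tn) / total if total > 0 else 0.0


# Same positive-class behaviour, very different numbers of true negatives.
few_negatives = dict(tp=8, fp=2, fn=8, tn=10)
many_negatives = dict(tp=8, fp=2, fn=8, tn=10_000)

f1_few = f1_ignoring_tn(**few_negatives)
f1_many = f1_ignoring_tn(**many_negatives)
acc_few = accuracy(**few_negatives)
acc_many = accuracy(**many_negatives)

print(f1_few == f1_many)   # F1 is unchanged by the extra true negatives
print(acc_few < acc_many)  # accuracy rises as true negatives are added
```

In this sketch the F1 score is identical for both cases, while accuracy changes substantially, which is why the F1 score alone says nothing about how well the negative class is handled.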
