Generalized Formula for Pre-Norm Architecture

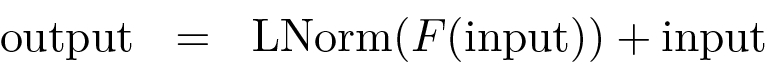

The operation within a sub-layer of a Transformer block using the pre-norm architecture is generalized by the formula:

$$\text{output} = \text{LNorm}(F(\text{input})) + \text{input}$$

In this equation, $F(\cdot)$ is the sub-layer's function (e.g., self-attention or FFN), and $\text{LNorm}(\cdot)$ is Layer Normalization. The input and output are both matrices of size $m \times d$, where $m$ is the sequence length and $d$ is the representation dimension. Each row in these matrices corresponds to the contextual representation of a specific token in the sequence. This structure applies normalization to the function's output before the residual connection adds the original input back.
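The sub-layer described above (apply F, normalize its output, then add the input back through the residual connection) can be sketched in a few lines of dependency-free Python. This is only an illustration under stated assumptions: `toy_f` is a hypothetical stand-in for self-attention or an FFN, and `layer_norm` omits the learnable gain and bias that practical Layer Normalization layers usually carry.

```python
import math


def layer_norm(row, eps=1e-5):
    """Normalize one token's d-dimensional vector to zero mean, unit variance.

    Assumption: no learnable gain/bias, to keep the sketch short.
    """
    mean = sum(row) / len(row)
    var = sum((v - mean) ** 2 for v in row) / len(row)
    return [(v - mean) / math.sqrt(var + eps) for v in row]


def toy_f(x):
    """Hypothetical stand-in for F (self-attention or FFN): doubles every entry."""
    return [[2.0 * v for v in row] for row in x]


def sublayer(x, f):
    """Compute output = LNorm(F(input)) + input for an m x d matrix x."""
    fx = f(x)                                  # sub-layer function F
    normed = [layer_norm(row) for row in fx]   # normalize each token's row
    # Residual connection: add the original input back, element by element.
    return [[n + v for n, v in zip(n_row, x_row)]
            for n_row, x_row in zip(normed, x)]


# m = 3 tokens (rows), d = 4 representation dimensions (columns)
x = [[1.0, 2.0, 3.0, 4.0],
     [0.0, 0.0, 1.0, 1.0],
     [2.0, 4.0, 6.0, 8.0]]
y = sublayer(x, toy_f)
print(len(y), len(y[0]))  # the output keeps the input's m x d shape
```

Because the residual term is added after normalization, subtracting the input from the output recovers exactly the normalized result of F, one row per token.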

Tags

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

Generalized Formula for Pre-Norm Architecture

A single sub-layer within a deep neural network processes an input matrix. To improve training stability, a specific architectural pattern is used where a normalization operation is applied to the output of the sub-layer's main function before it is combined with the original input via a residual connection. Arrange the following operations in the correct sequence to reflect this design.

An engineer is training a very deep sequence-processing model and observes that the gradients are becoming unstable, causing the training to fail. The current architecture of each sub-layer in the model computes its output using the formula: output = Normalize(input + Function(input)). Which of the following modifications to the sub-layer's computational flow is most likely to resolve the instability issue by ensuring a cleaner information flow through the residual connections?

Architectural Analysis for Training Stability

You’re debugging a Transformer block in an interna...

You are reviewing a teammate’s implementation of a...

You’re implementing a single Transformer block in ...

Design a Transformer Block Spec for a New Internal LLM Library (Shapes + Norm Placement)

Diagnosing a Transformer Block Refactor: Attention/FFN Shapes and Norm Placement

Choosing Pre-Norm vs Post-Norm for a Deep Transformer: Stability, Shapes, and Sub-layer Semantics

Root-Cause Analysis of Training Instability After a “Minor” Transformer Block Change

Production Bug Triage: Transformer Block Norm Placement vs Attention/FFN Interface Contracts

Post-Norm vs Pre-Norm Migration: Verifying Tensor Shapes and Correct Sub-layer Wiring

Incident Review: Silent Performance Regression After “Optimization” of a Transformer Block

Core Function in Transformer Sub-layers

Prevalence of Pre-Norm Architecture in LLMs

Vision Transformer Encoder Block

Learn After

A sub-layer within a neural network block is designed to process an input tensor, X. The computational flow is as follows: first, a primary function F (such as a self-attention mechanism) is applied to X. Second, a normalization operation is applied to the result of the function F. Finally, the original input tensor X is added to the normalized result via a residual connection to produce the final output, Y. Which of the following expressions correctly models this specific sequence of operations?

Analysis of Sub-Layer Computational Flow

Debugging a Sub-Layer Implementation