Design a Transformer Block Spec for a New Internal LLM Library (Shapes + Norm Placement)

You are writing a one-page implementation spec for a new internal Transformer-block API that must be unambiguous enough for two different teams (training + inference) to implement independently and still produce identical tensor shapes and computation order.

Constraints:

- The block input is H ∈ R^{m×d} (m = sequence length, d = model width).

- The block contains exactly two sub-layers in this order: (1) multi-head self-attention, (2) a 2-layer position-wise FFN.

- Multi-head attention uses n_head heads with per-head dimension d_k such that concatenation returns to width d.

- The FFN must expand to hidden width d_h and return to width d using the standard formula FFN(h)=σ(hW_h+b_h)W_f+b_f.

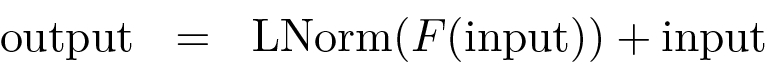

- You must choose either a pre-norm or post-norm scheme and specify precisely where LayerNorm is applied relative to F(·) and the residual addition for BOTH sub-layers.
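For reference, the two schemes named in the last constraint are usually written as follows. This is a sketch in generic notation: x is the sub-layer input, F(·) is the sub-layer function (self-attention or FFN), LN is LayerNorm, and the name "out" is only a label introduced here.

```latex
\begin{aligned}
\text{Pre-norm:}  \quad & \mathrm{out} = x + F(\mathrm{LN}(x)) \\
\text{Post-norm:} \quad & \mathrm{out} = \mathrm{LN}(x + F(x))
\end{aligned}
```

In both cases F must map R^{m×d} to R^{m×d}, otherwise the residual addition is not shape-compatible.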

Create the spec by writing:

- A step-by-step computation graph (as numbered equations) for the full block from input H to output H_out, including residual connections and LayerNorm placement.

- The required matrix dimensions for W_q, W_k, W_v, the output projection W_o, and the FFN matrices W_h and W_f (use d, d_h, n_head, d_k; you may assume d = n_head·d_k).
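One shape assignment consistent with the constraints above is sketched below. It assumes d = n_head·d_k and per-head projection matrices; the superscript (i) indexing heads is notation introduced here, not part of the prompt.

```latex
\begin{aligned}
W_q^{(i)},\, W_k^{(i)},\, W_v^{(i)} &\in \mathbb{R}^{d \times d_k}, \quad i = 1, \dots, n_{\text{head}} \\
[\mathrm{head}_1, \dots, \mathrm{head}_{n_{\text{head}}}] &\in \mathbb{R}^{m \times n_{\text{head}} d_k} = \mathbb{R}^{m \times d} \\
W_o &\in \mathbb{R}^{d \times d} \\
W_h &\in \mathbb{R}^{d \times d_h}, \quad b_h \in \mathbb{R}^{d_h} \\
W_f &\in \mathbb{R}^{d_h \times d}, \quad b_f \in \mathbb{R}^{d}
\end{aligned}
```

Stacking the per-head matrices column-wise gives single d × d matrices for W_q, W_k, and W_v, which is how many implementations store them.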

Your answer must be internally consistent: every addition must be shape-compatible, and your norm placement must match the scheme you chose.
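A consistency check of this kind can be sketched in NumPy. The example below is one possible answer, not the reference solution: it assumes the pre-norm scheme, ReLU for σ, no attention mask, LayerNorm without learned gain and bias, and per-head projections stored as stacked d × d matrices. All function and parameter names (`init_params`, `pre_norm_block`, and so on) are hypothetical.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    # Normalize each token (row) over the feature axis; gain/bias omitted (assumption).
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def softmax(x):
    # Numerically stable softmax over the last axis.
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)

def init_params(d, d_h, n_head, seed=0):
    assert d % n_head == 0, "d must equal n_head * d_k"
    rng = np.random.default_rng(seed)
    w = lambda *shape: rng.normal(0.0, 0.02, shape)
    return {
        "n_head": n_head,
        # Stacked per-head projections: n_head blocks of shape (d, d_k) -> (d, d).
        "W_q": w(d, d), "W_k": w(d, d), "W_v": w(d, d),
        "W_o": w(d, d),
        "W_h": w(d, d_h), "b_h": np.zeros(d_h),
        "W_f": w(d_h, d), "b_f": np.zeros(d),
    }

def self_attention(x, p):
    m, d = x.shape
    n_head = p["n_head"]
    d_k = d // n_head
    # (m, d) -> (n_head, m, d_k)
    split = lambda t: t.reshape(m, n_head, d_k).transpose(1, 0, 2)
    q, k, v = split(x @ p["W_q"]), split(x @ p["W_k"]), split(x @ p["W_v"])
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_k)    # (n_head, m, m)
    heads = softmax(scores) @ v                         # (n_head, m, d_k)
    concat = heads.transpose(1, 0, 2).reshape(m, d)     # back to width d
    return concat @ p["W_o"]                            # (m, d)

def ffn(x, p):
    # FFN(h) = sigma(h W_h + b_h) W_f + b_f with sigma = ReLU (assumption).
    return np.maximum(x @ p["W_h"] + p["b_h"], 0.0) @ p["W_f"] + p["b_f"]

def pre_norm_block(H, p):
    A = H + self_attention(layer_norm(H), p)   # sub-layer 1: residual around F(LN(H))
    H_out = A + ffn(layer_norm(A), p)          # sub-layer 2: residual around F(LN(A))
    assert H_out.shape == H.shape, "residual additions must preserve (m, d)"
    return H_out

if __name__ == "__main__":
    m, d, d_h, n_head = 5, 8, 32, 2
    H = np.random.default_rng(1).normal(size=(m, d))
    print(pre_norm_block(H, init_params(d, d_h, n_head)).shape)
```

A post-norm variant would instead compute `A = layer_norm(H + self_attention(H, p))` and `H_out = layer_norm(A + ffn(A, p))`; the weight shapes are identical in both schemes.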

Tags

Data Science

Ch.1 Pre-training - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Ch.2 Generative Models - Foundations of Large Language Models

Transformer

Related

Self-Attention layer understanding - Step 5 - Adding the time

Query, Key, and Value Projections in Multi-Head Attention

Scalar per Head in Multi-Head Attention

In a multi-head self-attention mechanism, what is the primary advantage of using multiple parallel attention 'heads'—each with its own unique set of learnable weight matrices—compared to using a single attention mechanism with the same total dimensionality?

Analysis of a Modified Attention Mechanism

Arrange the following computational steps of a multi-head self-attention layer in the correct chronological order, starting from the point where the layer receives its input representation matrix.

Diagnosing a Transformer Block Refactor: Attention/FFN Shapes and Norm Placement

Choosing Pre-Norm vs Post-Norm for a Deep Transformer: Stability, Shapes, and Sub-layer Semantics

Root-Cause Analysis of Training Instability After a “Minor” Transformer Block Change

Production Bug Triage: Transformer Block Norm Placement vs Attention/FFN Interface Contracts

Post-Norm vs Pre-Norm Migration: Verifying Tensor Shapes and Correct Sub-layer Wiring

Incident Review: Silent Performance Regression After “Optimization” of a Transformer Block

Design a Transformer Block Spec for a New Internal LLM Library (Shapes + Norm Placement)

You are reviewing a teammate’s implementation of a...

You’re debugging a Transformer block in an interna...

You’re implementing a single Transformer block in ...

Number of Attention Heads

Reducing KV Cache Complexity via Head Sharing

Tensor Manipulation for Parallel Attention Heads

ReLU (Rectified Linear Unit)

Importance of Activation Function Design in Wide FFNs

In a standard two-layer feed-forward network (FFN) within a Transformer, an input vector h has a dimension of d = 512. The network's hidden layer has a dimension of d_h = 2048. The FFN is defined by the operation: Output = σ(h * W_h + b_h) * W_f + b_f, where σ is a non-linear activation function. What must be the dimensions of the weight matrix W_f for the output vector to have the same dimension as the input vector h?

Troubleshooting FFN Dimension Mismatch

A standard Feed-Forward Network (FFN) in a Transformer model processes an input vector h of dimension d using the formula: FFN(h) = σ(h * W_h + b_h) * W_f + b_f. The intermediate hidden layer has a dimension d_h. Match each component from the formula to its correct description.

Placement of Layer Normalization in transformers

Substitutes of Layer Normalization in transformers

Normalization-free transformer

Layer Normalization Formula

Root Mean Square (RMS) Layer Normalization

An engineer is training a deep neural network for a language task. They observe that during training, the distribution of the outputs of intermediate layers changes drastically from one step to the next, causing the training process to become very slow and unstable. To mitigate this, they insert an operation that, for each individual data point, computes the mean and variance of all the features in its intermediate representation. It then uses these statistics to standardize the representation before passing it to the next layer. What fundamental problem in deep network training is this operation designed to address?

Restoring Representational Power in Normalization

Applying Layer Normalization

Reduction of Covariate Shift via Layer Normalization

Comparison of Layer Normalization and Batch Normalization in NLP

Generalized Formula for Pre-Norm Architecture

A single sub-layer within a deep neural network processes an input matrix. To improve training stability, a specific architectural pattern is used where a normalization operation is applied to the output of the sub-layer's main function before it is combined with the original input via a residual connection. Arrange the following operations in the correct sequence to reflect this design.

An engineer is training a very deep sequence-processing model and observes that the gradients are becoming unstable, causing the training to fail. The current architecture of each sub-layer in the model computes its output using the formula: output = Normalize(input + Function(input)). Which of the following modifications to the sub-layer's computational flow is most likely to resolve the instability issue by ensuring a cleaner information flow through the residual connections?

Architectural Analysis for Training Stability

Core Function in Transformer Sub-layers

Prevalence of Pre-Norm Architecture in LLMs

Vision Transformer Encoder Block

A single sub-layer within a neural network block receives an input tensor x and applies a function F to it. The block's architecture specifies that a residual connection and layer normalization are used. Which of the following sequences of operations correctly implements the post-normalization scheme for this sub-layer?

Generalized Formula for Post-Norm Architecture

A standard processing block in a neural network consists of two main sub-layers: a self-attention module and a feed-forward network (FFN). This block uses a post-normalization architecture, where a residual connection is followed by a normalization step for each sub-layer. Arrange the following computational steps in the correct sequence for a single input passing through one complete block.

Debugging a Transformer Block Implementation

In a Transformer block sub-layer that uses a post-normalization architecture, the layer normalization operation is applied to the input before the sub-layer's primary function (e.g., self-attention or feed-forward network) is executed.

Contextual Token Representation in Sub-layers
