
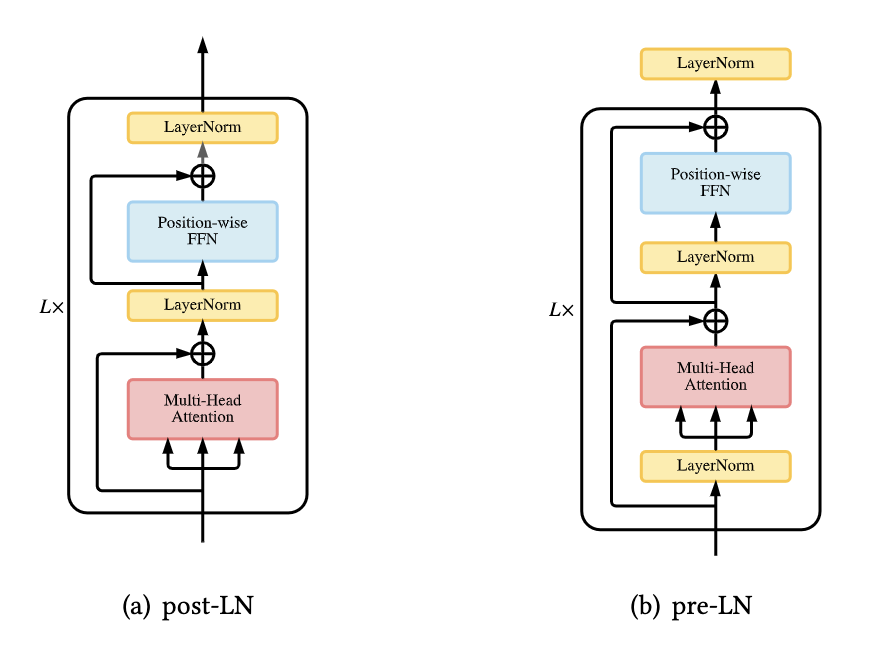

Placement of Layer Normalization in transformers

In the original (vanilla) Transformer, the layer normalization (LN) layer is applied after each residual connection, that is, to the sum of a sub-layer's input and output. This arrangement is called post-LN. A common improvement, called pre-LN, moves the LN layer inside the residual branch so that it normalizes the input to the attention or feed-forward network (FFN) sub-layer. Pre-LN also adds one final LN after the last layer to control the magnitude of the final outputs. This placement has been shown to remove the need for a learning-rate warm-up stage.
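The difference between the two placements can be sketched in a few lines. Post-LN computes LN(x + F(x)), while pre-LN computes x + F(LN(x)) and applies one extra LN at the end of the stack. This is a minimal pure-Python sketch, assuming a single token vector, LN without its learned gain and bias, and a hypothetical `toy_sublayer` standing in for attention or the FFN. The helper names (`layer_norm`, `post_ln_block`, `pre_ln_block`, `run_stack`) are illustrative and do not come from any library.

```python
import math
from typing import Callable, List

Vector = List[float]
Sublayer = Callable[[Vector], Vector]


def layer_norm(x: Vector, eps: float = 1e-5) -> Vector:
    """Standardize one token vector over its features (learned gain/bias omitted)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def add(a: Vector, b: Vector) -> Vector:
    """Element-wise sum used for the residual connection."""
    return [p + q for p, q in zip(a, b)]


def post_ln_block(x: Vector, sublayer: Sublayer) -> Vector:
    # Post-LN: run the sub-layer, add the residual, then normalize the sum.
    return layer_norm(add(x, sublayer(x)))


def pre_ln_block(x: Vector, sublayer: Sublayer) -> Vector:
    # Pre-LN: normalize only the sub-layer input; the residual path is left untouched.
    return add(x, sublayer(layer_norm(x)))


def run_stack(x: Vector, sublayers: List[Sublayer], pre_ln: bool) -> Vector:
    """Apply the sub-layers in order using either pre-LN or post-LN wiring."""
    for f in sublayers:
        x = pre_ln_block(x, f) if pre_ln else post_ln_block(x, f)
    if pre_ln:
        # Pre-LN adds one final LN after the last layer to bound the output scale.
        x = layer_norm(x)
    return x


def toy_sublayer(v: Vector) -> Vector:
    # Hypothetical stand-in for attention or the FFN: just scales its input.
    return [2.0 * t for t in v]


x = [1.0, 2.0, 3.0, 4.0]
print(run_stack(x, [toy_sublayer] * 3, pre_ln=False))
print(run_stack(x, [toy_sublayer] * 3, pre_ln=True))
```

Both stacks end in an LN, so their final outputs are standardized. A single `pre_ln_block`, by contrast, leaves the residual stream unnormalized: for this toy input its output keeps the input's mean of 2.5, because only the sub-layer branch sees normalized values. That is why pre-LN needs the extra LN after the final layer.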


Tags

Data Science

Foundations of Large Language Models

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

Placement of Layer Normalization in transformers

Substitutes of Layer Normalization in transformers

Normalization-free transformer

Layer Normalization Formula

Root Mean Square (RMS) Layer Normalization

An engineer is training a deep neural network for a language task. They observe that during training, the distribution of the outputs of intermediate layers changes drastically from one step to the next, causing the training process to become very slow and unstable. To mitigate this, they insert an operation that, for each individual data point, computes the mean and variance of all the features in its intermediate representation. It then uses these statistics to standardize the representation before passing it to the next layer. What fundamental problem in deep network training is this operation designed to address?

Restoring Representational Power in Normalization

Applying Layer Normalization

You’re debugging a Transformer block in an interna...

You are reviewing a teammate’s implementation of a...

You’re implementing a single Transformer block in ...

Design a Transformer Block Spec for a New Internal LLM Library (Shapes + Norm Placement)

Diagnosing a Transformer Block Refactor: Attention/FFN Shapes and Norm Placement

Choosing Pre-Norm vs Post-Norm for a Deep Transformer: Stability, Shapes, and Sub-layer Semantics

Root-Cause Analysis of Training Instability After a “Minor” Transformer Block Change

Production Bug Triage: Transformer Block Norm Placement vs Attention/FFN Interface Contracts

Post-Norm vs Pre-Norm Migration: Verifying Tensor Shapes and Correct Sub-layer Wiring

Incident Review: Silent Performance Regression After “Optimization” of a Transformer Block

Reduction of Covariate Shift via Layer Normalization

Comparison of Layer Normalization and Batch Normalization in NLP