Self-Attention layer understanding - Step 5 - Adding the time

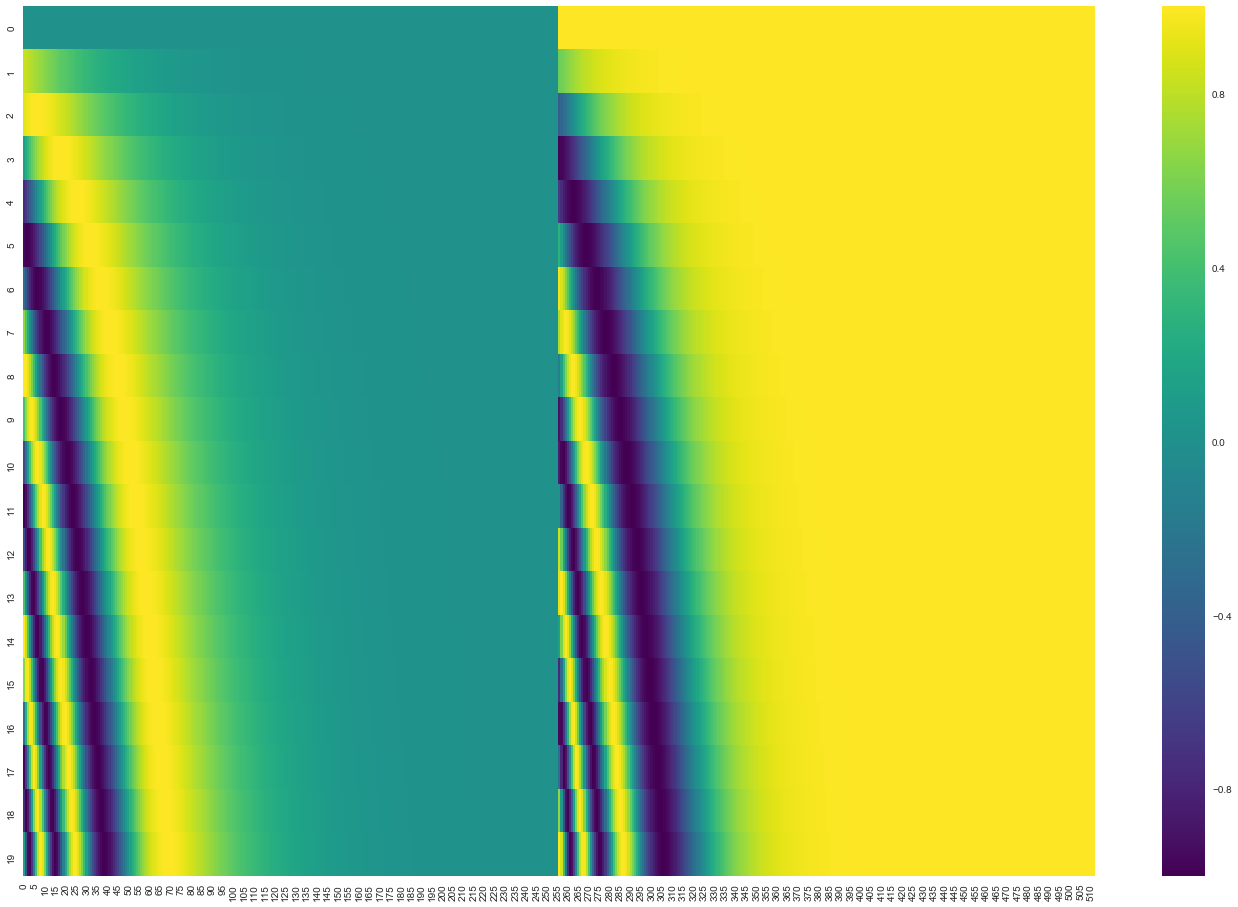

As you may have noticed, in its current state the layer ignores word order entirely. We can permute the words of the sentence and each word still gets the same output representation. Instead of using an RNN to account for order, we can calculate a positional encoding for each time step and simply add it to the word embeddings (note that we do this only once, right after the embedding layer). The positional encoding is calculated so that the vectors projected into Q/K/V have a meaningful distance between them. Here is an example of how it is calculated for 20 words (rows) with an embedding size of 512 (columns).
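The text does not spell out the formula, so the following is only a minimal sketch that assumes the common sinusoidal scheme (sine on even columns, cosine on odd columns, base 10000). The names `sinusoidal_positional_encoding` and `add_positions` are hypothetical helpers introduced for illustration, and the embedding size is assumed to be even.

```python
import math

def sinusoidal_positional_encoding(num_positions, d_model, base=10000.0):
    """Build a num_positions x d_model table as a list of rows.

    Assumption: the sinusoidal scheme, with d_model even.
    """
    table = []
    for pos in range(num_positions):
        row = [0.0] * d_model
        for i in range(0, d_model, 2):
            # Each pair of columns (i, i + 1) shares one wavelength.
            angle = pos / (base ** (i / d_model))
            row[i] = math.sin(angle)      # even column
            row[i + 1] = math.cos(angle)  # odd column
        table.append(row)
    return table

def add_positions(word_embeddings, positional_encoding):
    """Element-wise sum, applied once right after the embedding layer."""
    return [
        [w + p for w, p in zip(w_row, p_row)]
        for w_row, p_row in zip(word_embeddings, positional_encoding)
    ]

# 20 words (rows) with an embedding size of 512 (columns).
pe = sinusoidal_positional_encoding(20, 512)

# Stand-in embeddings: the same word repeated at every position.
same_word = [0.5] * 512
inputs = add_positions([same_word] * 20, pe)

print(len(pe), len(pe[0]))     # 20 512
print(inputs[0] == inputs[1])  # False
```

Even though the same word vector is used at every position, the summed inputs differ from row to row, so the Q/K/V projections can now tell positions apart.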
