ReLU (Rectified Linear Unit)

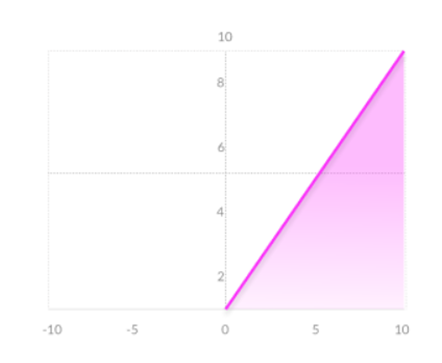

The Rectified Linear Unit (ReLU) is a common choice of activation function for the hidden layers of neural networks. It outputs the positive part of its argument and zero otherwise. Applied element-wise to an input vector $h$, the ReLU function is given by $\mathrm{ReLU}(h) = \max(0, h)$.
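As a minimal sketch of the element-wise definition, the Python function below applies ReLU to a vector stored as a plain list. The function name `relu` and the example values are illustrative assumptions, not part of the original material.

```python
from typing import List


def relu(h: List[float]) -> List[float]:
    """Apply ReLU element-wise: keep positive entries, replace the rest with 0.0."""
    return [max(0.0, x) for x in h]


# Hypothetical input vector chosen only for illustration
h = [1.5, -2.0, 0.0, 3.2, -0.7]
print(relu(h))  # [1.5, 0.0, 0.0, 3.2, 0.0]
```

Passing `0.0` rather than `0` as the first argument to `max` keeps every output a float, so negative entries come back as `0.0` instead of the integer `0`.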

Tags

Data Science

Foundations of Large Language Models Course

Computing Sciences

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Related

Linear vs. Non-Linear Activation Functions

Sigmoid/Logistic Function

TanH/Hyperbolic Tangent Function

Swish Function

ReLU (Rectified Linear Unit)

ELU (Exponential Linear Unit)

Which activation function is represented by each of these plots?

Which of the following introduces nonlinearity into neural networks?

Softmax Function

Importance of Activation Function Design in Wide FFNs

In a standard two-layer feed-forward network (FFN) within a Transformer, an input vector h has a dimension of d = 512. The network's hidden layer has a dimension of d_h = 2048. The FFN is defined by the operation Output = σ(h * W_h + b_h) * W_f + b_f, where σ is a non-linear activation function. What must be the dimensions of the weight matrix W_f for the output vector to have the same dimension as the input vector h?

Troubleshooting FFN Dimension Mismatch

A standard Feed-Forward Network (FFN) in a Transformer model processes an input vector h of dimension d using the formula FFN(h) = σ(h * W_h + b_h) * W_f + b_f. The intermediate hidden layer has a dimension d_h. Match each component from the formula to its correct description.

You’re debugging a Transformer block in an interna...

You are reviewing a teammate’s implementation of a...

You’re implementing a single Transformer block in ...

Design a Transformer Block Spec for a New Internal LLM Library (Shapes + Norm Placement)

Diagnosing a Transformer Block Refactor: Attention/FFN Shapes and Norm Placement

Choosing Pre-Norm vs Post-Norm for a Deep Transformer: Stability, Shapes, and Sub-layer Semantics

Root-Cause Analysis of Training Instability After a “Minor” Transformer Block Change

Production Bug Triage: Transformer Block Norm Placement vs Attention/FFN Interface Contracts

Post-Norm vs Pre-Norm Migration: Verifying Tensor Shapes and Correct Sub-layer Wiring

Incident Review: Silent Performance Regression After “Optimization” of a Transformer Block

Learn After

Pros and Cons of ReLU

Leaky ReLU

Parametric ReLU

Derivative of ReLU (Rectified Linear Unit) function

A common non-linear activation function is defined by the operation f(x) = max(0, x). If this function is applied element-wise to the input vector h = [2.7, -1.3, 0, -4.5, 8.1], what is the resulting output vector?

A neuron in a neural network computes a pre-activation value (the weighted sum of its inputs plus bias) of -2.8. The neuron then applies an activation function defined by the formula f(z) = max(0, z). Based on this, what will be the neuron's output, and what is the direct consequence for this neuron's learning process during backpropagation for this specific input?

A hidden layer in a neural network produces the following vector of pre-activation values for a single neuron across five different training examples: [-3.1, -0.5, 0.8, 2.4, 5.0]. An activation function defined as f(x) = max(0, x) is then applied to this vector. Which statement best analyzes the effect of this function on the information passed to the next layer?

Gaussian Error Linear Unit (GELU)