Learn Before

Concept

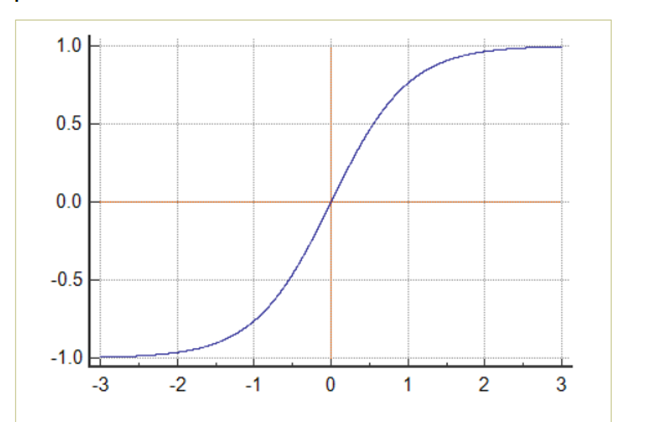

TanH/Hyperbolic Tangent Function

Updated 2021-11-18
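The page names the function but does not state its formula. The hyperbolic tangent is tanh(x) = (e^x - e^(-x)) / (e^x + e^(-x)). It maps any real input into the open interval (-1, 1) and is related to the Sigmoid/Logistic function by tanh(x) = 2*sigmoid(2x) - 1. The sketch below is a plain-Python illustration under that definition. The helper names `tanh` and `sigmoid` are hypothetical, not a library API. In practice you would call `math.tanh` or `numpy.tanh`.

```python
import math


def tanh(x: float) -> float:
    """Hyperbolic tangent (e^x - e^-x) / (e^x + e^-x), output in (-1, 1).

    Illustrative helper (hypothetical name). It is rewritten with
    e^(-2|x|) so large |x| cannot overflow math.exp.
    """
    t = math.exp(-2.0 * abs(x))
    y = (1.0 - t) / (1.0 + t)
    return y if x >= 0 else -y  # tanh is odd: tanh(-x) = -tanh(x)


def sigmoid(x: float) -> float:
    """Numerically stable logistic function 1 / (1 + e^-x) (hypothetical helper)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


for x in (-3.0, 0.0, 0.5, 3.0):
    # Compare the hand-written version, the library version and the sigmoid identity.
    print(x, tanh(x), math.tanh(x), 2.0 * sigmoid(2.0 * x) - 1.0)
```

Unlike the sigmoid, whose outputs lie in (0, 1), tanh is zero-centred: tanh(0) = 0.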

Tags

Data Science

Related

Linear vs. Non-Linear Activation Functions

Sigmoid/Logistic Function

TanH/Hyperbolic Tangent Function

Swish Function

ReLU (Rectified Linear Unit)

ELU (Exponential Linear Unit)

Which activation function is represented by each of these plots?

Which of the following introduces nonlinearity into neural networks?

Softmax Function

Learn After

Pros and Cons of Hyperbolic Tangent Function

Derivative of TanH/Hyperbolic Tangent Function

More on the Tanh function

Sigmoid/Logistic vs. TanH/Hyperbolic Tangent functions

You have built a network using the tanh activation for all the hidden units. You initialize the weights to relatively large values, using np.random.randn(..,..)*1000. What will happen?
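The last question concerns what tanh does to very large pre-activations. The sketch below uses NumPy and rests on a few assumptions. It models a single hypothetical layer with one random input vector. The layer sizes `n_in` and `n_units` are illustrative. The 0.999 cutoff for "saturated" is an arbitrary threshold. The sketch compares a small weight scale with the `*1000` scale from the question, and it uses the derivative tanh'(z) = 1 - tanh(z)^2 to measure how much gradient can flow back through each unit.

```python
import numpy as np


def tanh_grad(z):
    """Derivative of tanh: d/dz tanh(z) = 1 - tanh(z)**2."""
    return 1.0 - np.tanh(z) ** 2


def saturation_demo(scale, n_in=100, n_units=50, seed=0):
    """Return (fraction of saturated units, mean tanh'(z)) for one tanh layer.

    Hypothetical setup: one input example with n_in standard-normal features,
    and weights drawn as np.random.randn(n_units, n_in) * scale.
    """
    np.random.seed(seed)
    x = np.random.randn(n_in, 1)               # illustrative input column vector
    W = np.random.randn(n_units, n_in) * scale  # mirrors randn(..,..) * scale
    z = W @ x                                   # pre-activations, shape (n_units, 1)
    a = np.tanh(z)
    saturated = np.mean(np.abs(a) > 0.999)      # units stuck near -1 or +1
    mean_grad = np.mean(tanh_grad(z))           # average local gradient
    return float(saturated), float(mean_grad)


for scale in (0.01, 1000):
    frac, g = saturation_demo(scale)
    print(f"scale={scale}: saturated fraction={frac:.2f}, mean tanh'(z)={g:.2e}")
```

Under these assumptions, the `*1000` scale pushes almost every |z| into the flat tails of tanh. There tanh'(z) is close to zero, so backpropagated gradients through those units become tiny and gradient-based training slows down. With the small scale, tanh'(z) stays close to 1.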