Learn Before

Swish Function

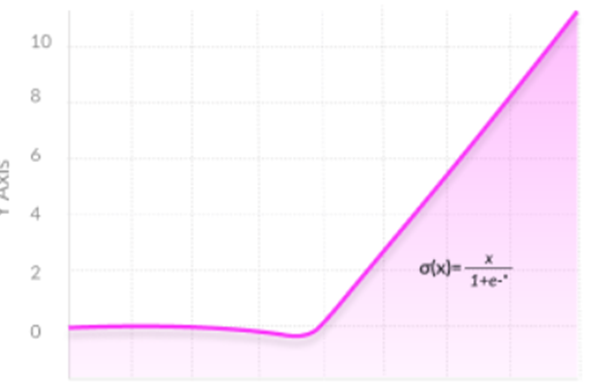

The Swish function, introduced by Ramachandran et al. in 2017, is a mathematical function defined as the product of its input and the sigmoid function applied to a scaled version of the input. For a scalar input $x$, it is expressed as:

$$\text{Swish}(x) = x \cdot \sigma(\beta x) = \frac{x}{1 + e^{-\beta x}}$$

where $\beta$ is a constant or a trainable parameter. When applied element-wise to a vector $\mathbf{h}$ in neural networks, the formula is written as:

$$\text{Swish}(\mathbf{h}) = \mathbf{h} \odot \sigma(\beta \mathbf{h})$$

where $\odot$ denotes the element-wise product.
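
The definition above can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the names `sigmoid`, `swish`, and `swish_vector` and the parameter name `beta` (standing for the scaling parameter) are assumptions chosen for this example, and a plain list stands in for a vector.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic sigmoid, written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)  # z < 0, so exp(z) cannot overflow
    return ez / (1.0 + ez)


def swish(x: float, beta: float = 1.0) -> float:
    """Scalar Swish: the input times the sigmoid of the scaled input."""
    return x * sigmoid(beta * x)


def swish_vector(h, beta: float = 1.0):
    """Element-wise Swish over a sequence of floats (illustrative helper)."""
    return [swish(v, beta) for v in h]


if __name__ == "__main__":
    inputs = [-2.0, -1.0, 0.0, 1.0, 2.0]
    # Compare a few values of the scaling parameter on the same inputs.
    for beta in (0.0, 1.0, 10.0):
        print(beta, swish_vector(inputs, beta))
```

With `beta = 0` the sigmoid term is constant at 0.5, so the function reduces to half the input; as `beta` grows, the output for positive inputs approaches the input itself and the output for negative inputs approaches zero.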

Tags

Data Science

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

Linear vs. Non-Linear Activation Functions

Sigmoid/Logistic Function

TanH/Hyperbolic Tangent Function

Swish Function

ReLU (Rectified Linear Unit)

ELU (Exponential Linear Unit)

Which activation function is represented by each of these plots?

Which of the following introduces nonlinearity into neural networks?

Softmax Function

Learn After

Relationship between Swish Function and other Activation Functions

Consider the function defined as f(x) = x / (1 + e^(-βx)), where β is a positive parameter. Analyze the behavior of this function as the parameter β becomes extremely large (i.e., approaches infinity). Which of the following statements best describes the resulting function's behavior?

Analysis of Swish Function Behavior

Evaluating Activation Function Properties

Swish Function Formula (Ramachandran et al., 2017)