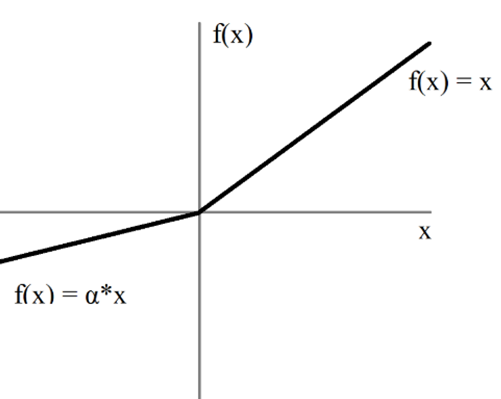

Parametric ReLU

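Parametric ReLU is defined as f(x) = x for x > 0 and f(x) = ax otherwise, where a is a learnable parameter. For a ≤ 1, this is equivalent to f(x) = max(x, ax).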
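The equivalence between the piecewise form of Parametric ReLU and f(x) = max(x, ax) for a ≤ 1 can be checked numerically. The following is a minimal sketch in plain Python. The function names, the sample inputs, and the slope a = 0.25 are illustrative assumptions, not part of the original page.

```python
def prelu(x: float, a: float) -> float:
    """Piecewise form: x for positive inputs, a * x otherwise."""
    return x if x > 0 else a * x


def prelu_max_form(x: float, a: float) -> float:
    """max(x, a * x) form; agrees with prelu only when a <= 1."""
    return max(x, a * x)


# Illustrative inputs and slope (assumed values).
inputs = [2.7, -1.3, 0.0, -4.5, 8.1]
a = 0.25

piecewise = [prelu(x, a) for x in inputs]
max_form = [prelu_max_form(x, a) for x in inputs]

# With a <= 1 the two forms agree on every input.
print(piecewise == max_form)

# With a > 1 they diverge on negative inputs: a * x is below x,
# so max(x, a * x) picks x instead of a * x.
print(prelu(-1.0, 2.0), prelu_max_form(-1.0, 2.0))
```

In a trained network, a would be learned alongside the weights rather than fixed as it is in this sketch.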

Tags

Data Science

Related

Pros and Cons of ReLU

Leaky ReLU

Parametric ReLU

Derivative of ReLU (Rectified Linear Unit) function

A common non-linear activation function is defined by the operation f(x) = max(0, x). If this function is applied element-wise to the input vector h = [2.7, -1.3, 0, -4.5, 8.1], what is the resulting output vector?

A neuron in a neural network computes a pre-activation value (the weighted sum of its inputs plus bias) of -2.8. The neuron then applies an activation function defined by the formula f(z) = max(0, z). Based on this, what will be the neuron's output, and what is the direct consequence for this neuron's learning process during backpropagation for this specific input?

A hidden layer in a neural network produces the following vector of pre-activation values for a single neuron across five different training examples: [-3.1, -0.5, 0.8, 2.4, 5.0]. An activation function defined as f(x) = max(0, x) is then applied to this vector. Which statement best analyzes the effect of this function on the information passed to the next layer?

Gaussian Error Linear Unit (GELU)