Gaussian Error Linear Unit (GELU)

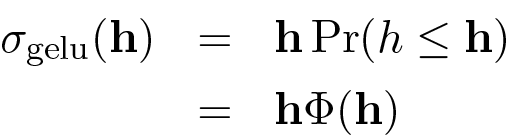

The Gaussian Error Linear Unit (GELU) is a widely used alternative to the ReLU activation function in Large Language Models (LLMs) and can be viewed as a smoothed version of it. Instead of gating outputs strictly by the sign of the input, GELU weights its input $h$ by the percentile $\Pr(g \le h)$. Here $g$ is a $d$-dimensional vector whose entries are each sampled from the standard normal distribution, denoted $\mathcal{N}(0, 1)$, so $\Pr(g \le h)$ is a vector of percentiles, one for each element of the input $h$.
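As a rough illustration of the weighting described above, the following sketch computes GELU element-wise as the input times the standard normal CDF and compares it with ReLU. The function names `gelu` and `relu` and the sample vector `h` are chosen here for illustration only and are not taken from the source; the CDF is evaluated with the error function from Python's standard library.

```python
import math


def gelu(x: float) -> float:
    """GELU as x * Phi(x), where Phi is the standard normal CDF.

    Illustrative helper (name is an assumption, not from the source).
    """
    # Phi(x) = 0.5 * (1 + erf(x / sqrt(2))) for a standard normal variable.
    phi = 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))
    return x * phi


def relu(x: float) -> float:
    """ReLU gates strictly by sign: negative inputs become zero."""
    return max(0.0, x)


# Hypothetical sample inputs, applied element-wise.
h = [-3.0, -0.1, 0.0, 0.1, 3.0]
gelu_out = [gelu(v) for v in h]
relu_out = [relu(v) for v in h]

for v, g, r in zip(h, gelu_out, relu_out):
    print(f"h={v:+.1f}  gelu={g:+.4f}  relu={r:+.4f}")
```

For large positive inputs the percentile is close to 1, so GELU behaves almost like the identity. For large negative inputs it is close to 0, so the output is nearly zero. Small negative inputs yield small negative outputs, where ReLU would output exactly zero.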

Tags

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

Gaussian Error Linear Unit (GELU)

Gated Linear Unit (GLU)

A machine learning engineer is analyzing the feed-forward network (FFN) component of a transformer model. They want to replace the standard Rectified Linear Unit (ReLU) activation function with a more modern alternative to potentially improve model performance. Which of the following statements best analyzes the rationale for using a function like the Gaussian Error Linear Unit (GELU) or Swish over ReLU in this context?

Match each activation function, which can be used in the feed-forward network of a transformer model, with its corresponding description.

Evaluating an Activation Function Change in a Transformer FFN

Pros and Cons of ReLU

Leaky ReLU

Parametric ReLU

Derivative of ReLU (Rectified Linear Unit) function

A common non-linear activation function is defined by the operation f(x) = max(0, x). If this function is applied element-wise to the input vector h = [2.7, -1.3, 0, -4.5, 8.1], what is the resulting output vector?

A neuron in a neural network computes a pre-activation value (the weighted sum of its inputs plus bias) of -2.8. The neuron then applies an activation function defined by the formula f(z) = max(0, z). Based on this, what will be the neuron's output, and what is the direct consequence for this neuron's learning process during backpropagation for this specific input?

A hidden layer in a neural network produces the following vector of pre-activation values for a single neuron across five different training examples: [-3.1, -0.5, 0.8, 2.4, 5.0]. An activation function defined as f(x) = max(0, x) is then applied to this vector. Which statement best analyzes the effect of this function on the information passed to the next layer?

Gaussian Error Linear Unit (GELU)

Learn After

GELU (Gaussian Error Linear Unit) Formula

Applications of GELU in Large Language Models

An activation function is defined by its behavior of weighting an input value by that value's corresponding cumulative probability from a standard normal distribution (mean=0, variance=1). Given two inputs, x = -3 and y = 3, which statement best describes their respective outputs, f(x) and f(y)?

Hendrycks and Gimpel [2016] on GELU

An activation function is designed to scale its input value by the probability that a randomly drawn value from a standard normal distribution (mean=0, variance=1) is less than or equal to that input. How does this function's output for a small negative input (e.g., -0.1) compare to the output of a function that simply sets all negative inputs to zero?

Activation Function Selection for a Language Model

Diagnosing Training Instability When Changing Normalization and FFN Activations

Choosing an FFN Activation and Normalization Pair Under Deployment Constraints

Explaining a Distribution Shift Caused by Swapping LayerNorm for RMSNorm and GELU for SwiGLU

Root-Cause Analysis of FFN Output Drift After Swapping Normalization and Activation

Selecting a Normalization + FFN Activation Change After Quantization Regressions

Interpreting Activation/Normalization Interactions from FFN Telemetry

You are reviewing a teammate’s proposed Transforme...

In a transformer feed-forward block, your team is ...

You’re debugging a transformer FFN refactor where ...

You’re reviewing a PR that changes a transformer b...