
K-Fold Cross-Validation

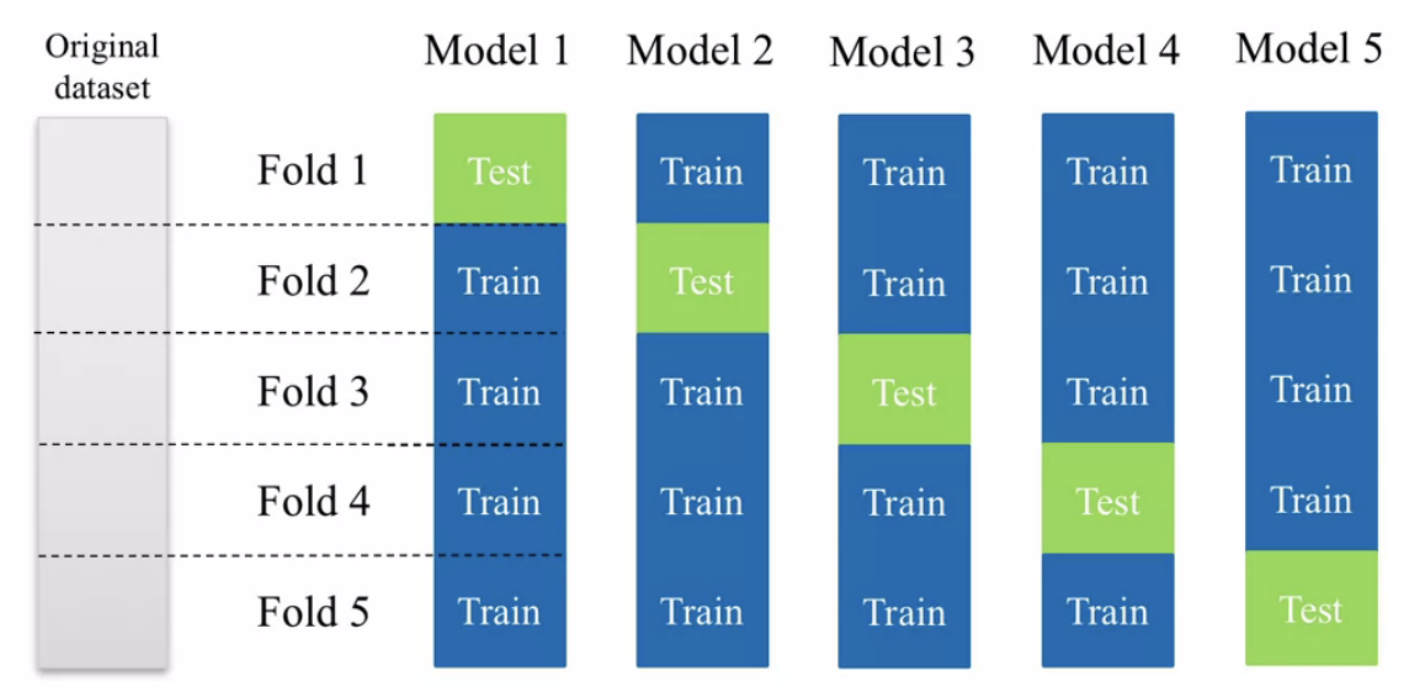

To perform $k$-fold cross-validation on a dataset with $n$ observations, the training set is split into $k$ non-overlapping folds of roughly equal size (about $n/k$ observations each) by randomly assigning each observation to exactly one fold. The model of interest is then trained on $k-1$ folds and validated on the held-out fold; that is, once the model is trained, we use it to make predictions on the group that was left out of training. This process repeats $k$ times, so that each fold serves as the validation set exactly once. To evaluate the model's overall performance, we average the validation error across all $k$ rounds. $k$-fold cross-validation gives a more stable estimate of a model's test error than a single train/validation split, and is particularly useful for selecting model designs and tuning hyperparameters.
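The procedure can be sketched in a few lines of NumPy. This is a minimal illustration rather than a reference implementation: the helper names (`k_fold_indices`, `cross_validate`, `fit_linear`, `predict_linear`), the synthetic data, the least-squares model, and the choice of mean squared error as the validation error are all assumptions made for the example.

```python
import numpy as np

def k_fold_indices(n, k, seed=0):
    """Shuffle the indices 0..n-1 and split them into k nearly equal folds."""
    rng = np.random.default_rng(seed)
    return np.array_split(rng.permutation(n), k)

def cross_validate(X, y, k, fit, predict, seed=0):
    """Return the per-fold validation MSE and its average over the k rounds."""
    folds = k_fold_indices(len(X), k, seed)
    errors = []
    for i in range(k):
        val_idx = folds[i]  # the held-out fold for this round
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        model = fit(X[train_idx], y[train_idx])   # train on the other k-1 folds
        preds = predict(model, X[val_idx])        # predict on the held-out fold
        errors.append(float(np.mean((preds - y[val_idx]) ** 2)))
    return errors, float(np.mean(errors))

# Hypothetical model for the demo: ordinary least squares with an intercept.
def fit_linear(X, y):
    Xb = np.column_stack([X, np.ones(len(X))])
    w, *_ = np.linalg.lstsq(Xb, y, rcond=None)
    return w

def predict_linear(w, X):
    return np.column_stack([X, np.ones(len(X))]) @ w

# Synthetic data (assumed for illustration): linear signal plus small noise.
data_rng = np.random.default_rng(42)
X = data_rng.normal(size=(100, 3))
y = X @ np.array([1.5, -2.0, 0.5]) + 0.1 * data_rng.normal(size=100)

fold_errors, mean_error = cross_validate(X, y, k=5, fit=fit_linear, predict=predict_linear)
print("validation MSE per fold:", fold_errors)
print("average validation MSE:", mean_error)
```

To compare model designs or hyperparameter settings, one would call `cross_validate` once per candidate with the same `seed`, so that every candidate is scored on identical folds, and keep the candidate with the lowest average validation error.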


Tags

Data Science

D2L

Dive into Deep Learning @ D2L