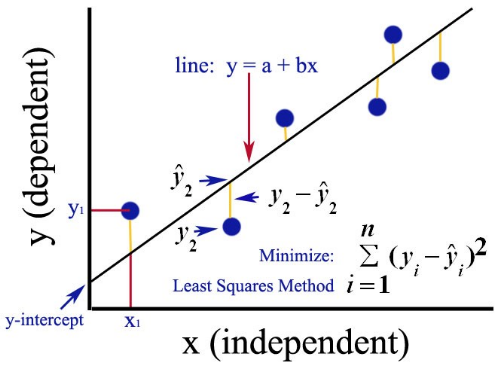

Least Squares Approach

Simple linear regression commonly uses a least squares approach, in which the fitted line is chosen to minimize the sum of squared residuals, that is, the squared vertical distances between the observed points and the line. The residual for the ith observation is defined as:

$$e_i = y_i - \hat{y}_i$$

where $y_i$ represents the actual ith response value and $\hat{y}_i$ represents the ith response value predicted by the linear model.
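As a rough illustration, the sketch below computes residuals for a small made-up dataset and fits a line with the standard closed-form least squares estimates for slope and intercept. The data values and the helper names `fit_line`, `residuals`, and `rss` are assumptions introduced here for illustration, not part of the original material.

```python
def fit_line(xs, ys):
    """Return (intercept, slope) of the least squares line for paired data."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Closed-form estimates: slope = S_xy / S_xx, intercept = y_mean - slope * x_mean
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    slope = s_xy / s_xx
    intercept = y_mean - slope * x_mean
    return intercept, slope


def residuals(xs, ys, intercept, slope):
    """Residual for each point: actual response minus predicted response."""
    return [y - (intercept + slope * x) for x, y in zip(xs, ys)]


def rss(xs, ys, intercept, slope):
    """Sum of squared residuals, the quantity least squares minimizes."""
    return sum(e ** 2 for e in residuals(xs, ys, intercept, slope))


# Hypothetical data, chosen only to make the example concrete.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.1, 5.9, 8.2]

intercept, slope = fit_line(xs, ys)
fitted_residuals = residuals(xs, ys, intercept, slope)
fitted_rss = rss(xs, ys, intercept, slope)

# Any other candidate line should give a sum of squared residuals at least as large.
other_rss = rss(xs, ys, 0.0, 2.0)

print("intercept:", intercept, "slope:", slope)
print("residuals:", fitted_residuals)
print("RSS (fitted):", fitted_rss, "RSS (other line):", other_rss)
```

Comparing `fitted_rss` with `other_rss` shows the idea behind the method: among candidate lines, the least squares line is the one with the smallest sum of squared residuals.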

Related

Python: Simple Linear Regression

Simple Linear Regression in R: lm()

Image Reference: Simple Linear Regression

Gauss-Markov Theorem (BLUE)

Linear Regression Analytic Solution

Objective Function in Machine Learning

Formal Definition of the Predicted Value (ŷ)

A real estate company uses a machine learning model to estimate the market value of houses. For a specific house with 3 bedrooms and 2,000 square feet of living space, the model calculates an estimated value of $450,000. The house later sells for an actual price of $465,000. In the context of this predictive model, what does the $450,000 figure represent?

Analyzing Model Predictions

Conditional Probability Pr^t(y|c, z)

Analyzing a Predictive Model's Performance

Learn After

Residual Sum of Squares (RSS)

A data scientist is building a simple linear model for a set of data points. Four potential lines are generated to fit the data. According to the least squares approach, which of the following lines would be considered the best fit for the data?

Calculating a Residual

Evaluating a Linear Model's Fit