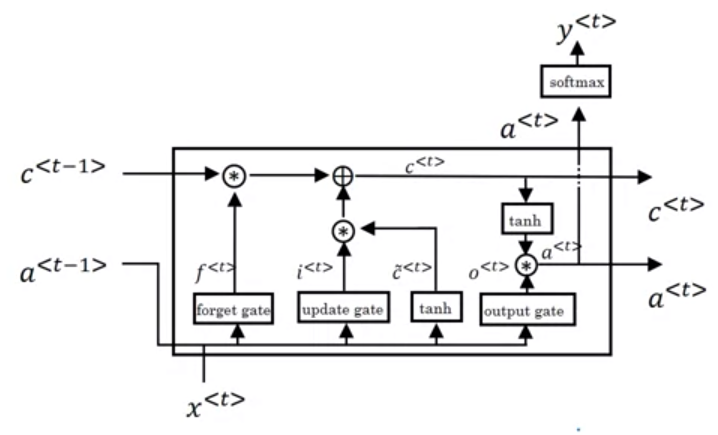

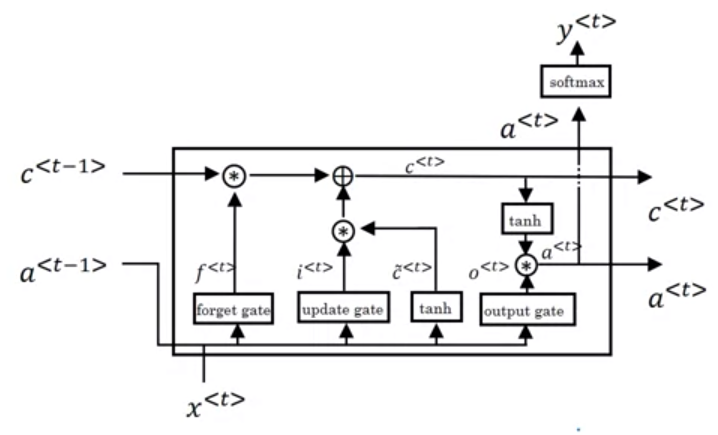

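The forget gate is

\Gamma_f=\sigma(W_f[a^{\langle t-1 \rangle}, x^{\langle t \rangle}]+b_f)

where \sigma denotes the sigmoid activation function, W_f is a weight matrix, b_f is a bias term, a^{\langle t-1 \rangle} is the hidden state from the previous time step, x^{\langle t \rangle} is the input at the current time step t, and [a^{\langle t-1 \rangle}, x^{\langle t \rangle}] means that a^{\langle t-1 \rangle} and x^{\langle t \rangle} are concatenated.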
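To make the concatenation concrete, here is a minimal NumPy sketch of the forget gate. The sizes `n_a` and `n_x`, the random values, and the column-vector layout are assumptions made only for illustration. It also checks that multiplying W_f by the concatenated vector gives the same result as splitting W_f into a block that acts on the hidden state and a block that acts on the input.

```python
import numpy as np

def sigmoid(z):
    """Logistic sigmoid, applied element-wise."""
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical sizes, chosen only for illustration.
n_a, n_x = 3, 2                      # hidden-state size, input size
rng = np.random.default_rng(0)

a_prev = rng.standard_normal((n_a, 1))       # previous hidden state
x_t = rng.standard_normal((n_x, 1))          # current input
W_f = rng.standard_normal((n_a, n_a + n_x))  # forget-gate weights
b_f = np.zeros((n_a, 1))                     # forget-gate bias

# Stack the previous hidden state on top of the current input.
concat = np.concatenate([a_prev, x_t], axis=0)  # shape (n_a + n_x, 1)
gamma_f = sigmoid(W_f @ concat + b_f)           # shape (n_a, 1), values in (0, 1)

# Equivalent form: split W_f into the columns acting on a_prev and on x_t.
W_fa, W_fx = W_f[:, :n_a], W_f[:, n_a:]
gamma_f_split = sigmoid(W_fa @ a_prev + W_fx @ x_t + b_f)

print(np.allclose(gamma_f, gamma_f_split))
```

Because the sigmoid maps every entry into (0, 1), each component of the gate acts as a soft switch on the matching component of the cell state.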

Next, the update step is computed in two parts. First, the update gate is

\Gamma_u=\sigma(W_u[a^{\langle t-1 \rangle}, x^{\langle t \rangle}]+b_u)

Second, the candidate cell state is

\tilde c^{\langle t \rangle}=\tanh(W_c[a^{\langle t-1 \rangle}, x^{\langle t \rangle}]+b_c)

where \tanh denotes the hyperbolic tangent activation function.

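Using the results of the formulas above, we can compute the current cell state:

c^{\langle t \rangle}=\Gamma_u*\tilde c^{\langle t \rangle}+\Gamma_f*c^{\langle t-1 \rangle}

Here * denotes element-wise multiplication, and c^{\langle t-1 \rangle} is the cell state from the previous time step.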
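A small worked example may help show how the two gates trade off old and new information. The gate and state values below are made up for illustration and do not come from a trained network.

```python
def update_cell_state(gamma_u, c_tilde, gamma_f, c_prev):
    """Element-wise cell update: gamma_u * c_tilde + gamma_f * c_prev."""
    return [u * ct + f * cp
            for u, ct, f, cp in zip(gamma_u, c_tilde, gamma_f, c_prev)]

# Illustrative (made-up) values for a cell state with two components.
gamma_u = [0.9, 0.1]   # update gate: mostly write component 0, barely write component 1
c_tilde = [0.5, -0.8]  # candidate cell state
gamma_f = [0.2, 1.0]   # forget gate: mostly forget component 0, fully keep component 1
c_prev = [1.0, 2.0]    # previous cell state

c_t = update_cell_state(gamma_u, c_tilde, gamma_f, c_prev)
print(c_t)  # approximately [0.65, 1.92]
```

Component 0 is largely overwritten by the candidate (0.9 * 0.5 + 0.2 * 1.0), while component 1 carries the old value forward almost unchanged (0.1 * -0.8 + 1.0 * 2.0).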

Finally, the third gate, the output gate, is

\Gamma_o=\sigma(W_o[a^{\langle t-1 \rangle}, x^{\langle t \rangle}]+b_o)

Using the output gate and the current cell state, we can compute the current hidden state:

a^{\langle t \rangle}=\Gamma_o*\tanh(c^{\langle t \rangle})
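Putting the equations together, one forward step of an LSTM cell can be sketched in NumPy as follows. This is a sketch under stated assumptions rather than a reference implementation: the parameter dictionary layout, the helper names `lstm_step` and `init_params`, the small random initialization, and the column-vector shapes are all hypothetical choices made for this example.

```python
import numpy as np

def sigmoid(z):
    """Logistic sigmoid, applied element-wise."""
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(a_prev, c_prev, x_t, params):
    """One LSTM forward step; returns the new hidden state and cell state."""
    concat = np.concatenate([a_prev, x_t], axis=0)             # [a_prev, x_t]
    gamma_f = sigmoid(params["W_f"] @ concat + params["b_f"])  # forget gate
    gamma_u = sigmoid(params["W_u"] @ concat + params["b_u"])  # update gate
    c_tilde = np.tanh(params["W_c"] @ concat + params["b_c"])  # candidate cell state
    c_t = gamma_u * c_tilde + gamma_f * c_prev                 # current cell state
    gamma_o = sigmoid(params["W_o"] @ concat + params["b_o"])  # output gate
    a_t = gamma_o * np.tanh(c_t)                               # current hidden state
    return a_t, c_t

def init_params(n_a, n_x, seed=0):
    """Hypothetical initializer: small random weights, zero biases."""
    rng = np.random.default_rng(seed)
    params = {}
    for gate in ("f", "u", "c", "o"):
        params[f"W_{gate}"] = 0.1 * rng.standard_normal((n_a, n_a + n_x))
        params[f"b_{gate}"] = np.zeros((n_a, 1))
    return params

# Usage: run the cell over a short, made-up input sequence.
n_a, n_x, T = 3, 2, 4
params = init_params(n_a, n_x)
a_t = np.zeros((n_a, 1))
c_t = np.zeros((n_a, 1))
inputs = np.random.default_rng(1).standard_normal((T, n_x, 1))
for x_t in inputs:
    a_t, c_t = lstm_step(a_t, c_t, x_t, params)
print(a_t.shape, c_t.shape)  # (3, 1) (3, 1)
```

The same `params` are reused at every time step. Only the hidden state and the cell state are carried from one step to the next.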