The Encapsulated Complexity of LSTMs

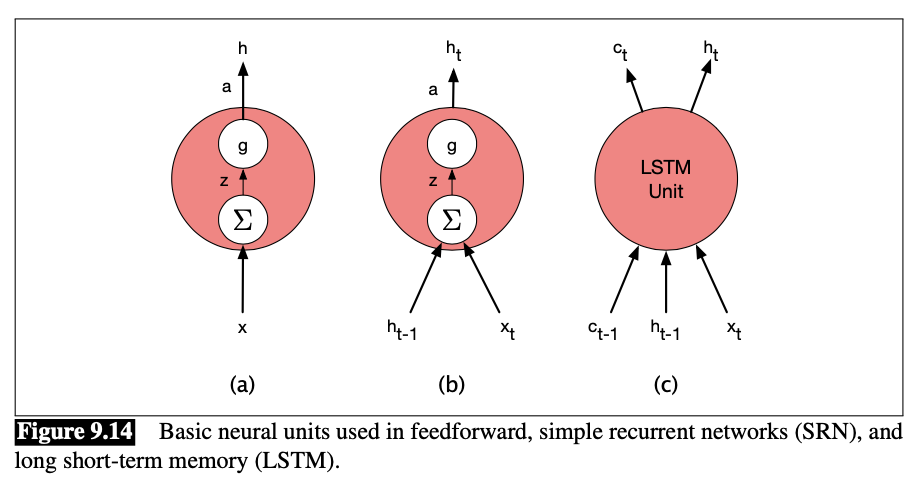

The neural units used in LSTMs are considerably more complex than those used in basic feedforward networks. Fortunately, this complexity is encapsulated within the basic processing units, which keeps the network modular and makes it easy to experiment with different architectures. The accompanying figure illustrates this by showing the inputs and outputs associated with each kind of unit: feedforward, basic recurrent, and LSTM.

Seen from the outside, the only added complexity of the LSTM unit over the basic recurrent unit is the additional context vector, which appears as both an input and an output. Everything else that makes the LSTM more complex stays inside the unit.
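The difference in interfaces can be made concrete with a minimal Python sketch using only the standard library. It is an illustration under stated assumptions, not the implementation behind the figure: the function names (`feedforward_unit`, `recurrent_unit`, `lstm_unit`), the helper `init_layer`, the dictionary keys for the gates, and the choice of `tanh` activations are all hypothetical, and the LSTM body follows the standard gate equations. The point to notice is the signatures: the LSTM unit takes and returns one extra vector, the context `c`.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def affine(W, b, v):
    """Compute W @ v + b, where W is a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) + bias for row, bias in zip(W, b)]

def init_layer(n_out, n_in, rng):
    """Hypothetical helper: small random weights and zero biases."""
    W = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return W, [0.0] * n_out

def feedforward_unit(x, layer):
    """Input: x. Output: h."""
    W, b = layer
    return [math.tanh(a) for a in affine(W, b, x)]

def recurrent_unit(x, h_prev, layer):
    """Inputs: x, h_prev. Output: h."""
    W, b = layer
    return [math.tanh(a) for a in affine(W, b, x + h_prev)]  # x + h_prev concatenates

def lstm_unit(x, h_prev, c_prev, layers):
    """Inputs: x, h_prev, c_prev. Outputs: h, c (the extra context vector)."""
    z = x + h_prev  # concatenated input shared by all gates
    f = [sigmoid(a) for a in affine(*layers["forget"], z)]       # forget gate
    i = [sigmoid(a) for a in affine(*layers["input"], z)]        # input gate
    o = [sigmoid(a) for a in affine(*layers["output"], z)]       # output gate
    g = [math.tanh(a) for a in affine(*layers["candidate"], z)]  # candidate values
    c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c_prev, i, g)]
    h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]
    return h, c

if __name__ == "__main__":
    rng = random.Random(0)
    n_in, n_hid = 3, 4
    gates = ("forget", "input", "output", "candidate")
    layers = {name: init_layer(n_hid, n_in + n_hid, rng) for name in gates}
    h, c = [0.0] * n_hid, [0.0] * n_hid
    # Callers only thread (h, c) from one step to the next; the gates stay hidden.
    for x in ([0.5, -1.0, 2.0], [0.1, 0.2, 0.3]):
        h, c = lstm_unit(x, h, c, layers)
    print(len(h), len(c))
```

Because the gating lives entirely inside `lstm_unit`, swapping it for `recurrent_unit` in a larger network only requires carrying or dropping the `c` vector at the call site.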

Updated 2021-11-14

Tags

Data Science