Learn Before

Related Work (DAS3H: Modeling Student Learning and Forgetting for Optimally Scheduling Distributed Practice of Skills)

Knowledge Tracing (Deep-IRT: Make Deep Learning Based Knowledge Tracing Explainable Using Item Response Theory)

Related Work (Knowledge Tracing Machines: Factorization Machines for Knowledge Tracing)

Knowledge Tracing Machines (Knowledge Tracing Machines: Factorization Machines for Knowledge Tracing)

Modeling Student Learning and Forgetting (DAS3H: Modeling Student Learning and Forgetting for Optimally Scheduling Distributed Practice of Skills)

As the paper states, there are two main approaches to modeling student learning: knowledge tracing and factor analysis.

Knowledge tracing, as its name suggests, traces a student's knowledge. It models how that knowledge evolves over time in order to predict the sequence of the student's answers. Bayesian Knowledge Tracing (BKT) is the best-known knowledge tracing model.
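The sketch below makes "tracing knowledge" concrete. It is a minimal Python version of the classic BKT update for one student and one skill. The parameter names `p_init`, `p_learn`, `p_guess` and `p_slip` are illustrative assumptions, and so are their default values; none of them come from the paper. Like standard BKT, the sketch has no forgetting.

```python
def bkt_trace(answers, p_init=0.2, p_learn=0.15, p_guess=0.25, p_slip=0.1):
    """Return P(correct) predicted before each answer in `answers` (1 = correct, 0 = wrong).

    Illustrative BKT parameters (assumptions, not fitted values):
      p_init:  probability the skill is known before any practice
      p_learn: probability of learning the skill after an attempt
      p_guess: probability of a correct answer without knowing the skill
      p_slip:  probability of a wrong answer despite knowing the skill
    """
    p_known = p_init
    predictions = []
    for correct in answers:
        # Predicted probability of a correct answer given the current belief.
        p_correct = p_known * (1 - p_slip) + (1 - p_known) * p_guess
        predictions.append(p_correct)
        # Bayesian posterior of "skill known" after observing the answer.
        if correct:
            posterior = p_known * (1 - p_slip) / p_correct
        else:
            posterior = p_known * p_slip / (1 - p_correct)
        # Learning transition (standard BKT has no forgetting).
        p_known = posterior + (1 - posterior) * p_learn
    return predictions


preds = bkt_trace([1, 1, 0, 1])
```

The predictions rise after correct answers and drop after the wrong one. This happens because the model updates its belief about whether the student knows the skill.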

Factor analysis, unlike knowledge tracing, ignores the order of observations.

- Item Response Theory (IRT) is a factor analysis model:

$$\mathbb{P}(Y_{s,j} = 1) = \sigma(\alpha_s - \delta_j)$$

Here $\alpha_s$ denotes the ability of student $s$, $\delta_j$ the difficulty of item $j$, and $\sigma$ the logistic function. IRT assumes that a student's ability is fixed and does not change over time. At first glance this may seem a very simple model, but it has been reported to outperform knowledge tracing architectures such as Deep Knowledge Tracing (DKT).
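The IRT prediction fits in a single line of Python. The function names below are hypothetical.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic function sigma(z) = 1 / (1 + exp(-z))."""
    return 1.0 / (1.0 + math.exp(-z))


def irt_probability(ability: float, difficulty: float) -> float:
    """IRT: P(Y_sj = 1) = sigma(alpha_s - delta_j); ability is fixed over time."""
    return sigmoid(ability - difficulty)


# A student whose ability equals the item difficulty has a 50% chance of success.
print(irt_probability(1.0, 1.0))  # 0.5
```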

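- Multidimensional Item Response Theory (MIRT) extends IRT:

$$\mathbb{P}(Y_{s,j} = 1) = \sigma(\langle \boldsymbol{\alpha}_s, \boldsymbol{\delta}_j \rangle + d_j)$$

Here $\boldsymbol{\alpha}_s$ and $\boldsymbol{\delta}_j$ play the same roles as in IRT, with one difference: they are now multidimensional vectors. The scalar $d_j$ captures the easiness of item $j$.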
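MIRT can be sketched in the same way. The student vector is named `ability`, the item vector is `item_vector` and the item easiness $d_j$ is `easiness`. These names are our own.

```python
import math


def mirt_probability(ability, item_vector, easiness):
    """MIRT: P(Y_sj = 1) = sigma(<alpha_s, delta_j> + d_j) with d-dimensional vectors."""
    if len(ability) != len(item_vector):
        raise ValueError("ability and item_vector must have the same dimension")
    # Dot product between the student and item vectors, plus the item easiness.
    z = sum(a * b for a, b in zip(ability, item_vector)) + easiness
    return 1.0 / (1.0 + math.exp(-z))


print(mirt_probability([1.0, 2.0], [0.5, 0.5], -1.5))  # 0.5
```

With one dimension and $d_j = 0$, this reduces to a scaled version of the IRT score.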

More recent work also uses the student's history in factor analysis. This led to the Additive Factor Model (AFM) and Performance Factor Analysis (PFA).

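The formula for AFM is:

$$\mathbb{P}(Y_{s,j} = 1) = \sigma\left(\sum_{k \in KC(j)} \left(\beta_k + \gamma_k a_{s,k}\right)\right)$$

Here $KC(j)$ is the set of skills tagged on item $j$, and $\beta_k$ denotes the bias for skill $k$. $a_{s,k}$ is the number of prior attempts of student $s$ on skill $k$, and $\gamma_k$ is the bias for each such attempt.

PFA replaces the attempt count with separate counts of correct and wrong answers:

$$\mathbb{P}(Y_{s,j} = 1) = \sigma\left(\sum_{k \in KC(j)} \left(\beta_k + \gamma_k c_{s,k} + \rho_k f_{s,k}\right)\right)$$

Here $c_{s,k}$ ($f_{s,k}$) is the number of correct (wrong) answers of student $s$ on skill $k$ so far, and $\gamma_k$ ($\rho_k$) is the corresponding bias.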
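Both AFM and PFA are logistic models over the skills tagged on an item. The sketch below assumes these skill-only forms:

- AFM sums $\beta_k + \gamma_k a_{s,k}$ over the skills $k$ of item $j$.
- PFA sums $\beta_k + \gamma_k c_{s,k} + \rho_k f_{s,k}$ over the same skills.

It uses plain dictionaries keyed by skill id, which is a hypothetical data layout.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def afm_probability(skills, beta, gamma, attempts):
    """AFM: sigma(sum over skills k of item j of beta_k + gamma_k * a_sk).

    skills:   skill ids tagged on the item, KC(j)
    beta:     dict skill -> bias beta_k
    gamma:    dict skill -> effect of each prior attempt, gamma_k
    attempts: dict skill -> number of prior attempts a_sk of this student
    """
    z = sum(beta[k] + gamma[k] * attempts.get(k, 0) for k in skills)
    return sigmoid(z)


def pfa_probability(skills, beta, gamma, rho, correct, wrong):
    """PFA: like AFM, but prior correct (c_sk) and wrong (f_sk) answers get separate weights."""
    z = sum(
        beta[k] + gamma[k] * correct.get(k, 0) + rho[k] * wrong.get(k, 0)
        for k in skills
    )
    return sigmoid(z)


# Two prior attempts at +0.5 each exactly offset a skill bias of -1.0.
print(afm_probability(["fractions"], {"fractions": -1.0}, {"fractions": 0.5}, {"fractions": 2}))  # 0.5
```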

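The Knowledge Tracing Machine (KTM) subsumes IRT, MIRT, AFM and PFA as special cases. It expresses the probability of a correct answer as:

$$\psi\left(\mathbb{P}(y = 1 \mid \boldsymbol{x})\right) = \mu + \sum_{i=1}^{N} w_i x_i + \sum_{1 \le i < l \le N} x_i x_l \langle \boldsymbol{v}_i, \boldsymbol{v}_l \rangle$$

where $\psi$ is a link function such as the logit or the probit.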

(Note: this model was proposed in the paper Knowledge Tracing Machines: Factorization Machines for Knowledge Tracing.)

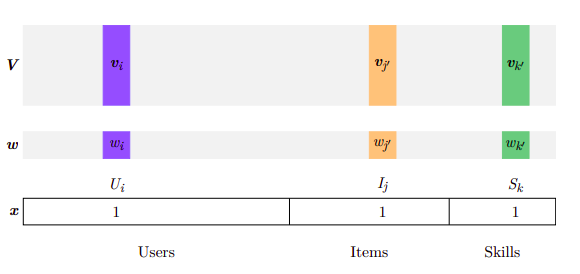

Here $\mu$ is a global bias, and $N$ is the number of abstract features (for instance, item parameters).

$\boldsymbol{x}$ is a sample that gathers all features collected at time $t$: which student answers which item, and information about prior attempts. It is a sparse vector.

$w_i$ is the bias of feature $i$ ($\boldsymbol{w}$ is the vector of biases), and $\boldsymbol{v}_i$ is its embedding of dimension $d$.

Only the features with $x_i > 0$ contribute to the prediction. A sample typically involves only a few features, so this probability can be computed efficiently.
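The efficiency claim rests on a factorization-machine identity:

$$\sum_{i<l} x_i x_l \langle v_i, v_l\rangle = \frac{1}{2}\sum_{f=1}^{d}\left[\left(\sum_i v_{i,f} x_i\right)^2 - \sum_i v_{i,f}^2 x_i^2\right]$$

With this identity, the pairwise term costs $O(nd)$ for $n$ active features. The sketch below assumes a logit link; the paper's actual link function may differ. It stores $\boldsymbol{x}$ as a dict of its nonzero entries, and all names are hypothetical.

```python
import math


def ktm_logit(x, mu, w, V):
    """Factorization-machine score mu + sum_i w_i x_i + sum_{i<l} x_i x_l <v_i, v_l>.

    x:  dict feature index -> value, holding only the non-zero entries of the sparse sample
    mu: global bias
    w:  list of feature biases w_i
    V:  list of feature embeddings v_i, each a list of length d
    """
    active = [(i, xi) for i, xi in x.items() if xi != 0]
    linear = sum(w[i] * xi for i, xi in active)
    d = len(V[0]) if V else 0
    pairwise = 0.0
    for f in range(d):
        # Pairwise term via the squared-sum identity: O(n * d) for n active features.
        s = sum(V[i][f] * xi for i, xi in active)
        s_sq = sum((V[i][f] * xi) ** 2 for i, xi in active)
        pairwise += 0.5 * (s * s - s_sq)
    return mu + linear + pairwise


def ktm_probability(x, mu, w, V):
    """Assumes a logit link, i.e. P(correct) = sigma(score)."""
    return 1.0 / (1.0 + math.exp(-ktm_logit(x, mu, w, V)))


# Features 0 and 2 are active (e.g. a student and an item); feature 1 is ignored.
w = [0.5, 9.0, -0.5]
V = [[1.0, 2.0], [5.0, 5.0], [3.0, 1.0]]
print(ktm_logit({0: 1, 2: 1}, mu=-5.0, w=w, V=V))  # 0.0
```

Suppose only one student indicator and one item indicator are active and $d = 0$. The score then reduces to $\mu + w_{\text{student}} + w_{\text{item}}$, which has the same shape as the IRT score.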

DASH (Difficulty, Ability, and Student History) bridges the gap between factor analysis and memory models. It takes both the learning and the forgetting processes into account.
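As we understand the formulation in the DAS3H paper, DASH adds a history term to IRT, computed over time windows $w$:

$$\sigma\Big(\alpha_s - \delta_j + \sum_{w} \theta_{2w+1}\log(1 + c_{s,j,w}) - \theta_{2w+2}\log(1 + a_{s,j,w})\Big)$$

Here $c_{s,j,w}$ and $a_{s,j,w}$ count the correct answers and the attempts of student $s$ on item $j$ within window $w$. The sketch below follows that form under a few assumptions:

- Windows are measured back from the current time, in days.
- The windows are a hypothetical choice: last hour, last day, last week, last month, and all time.
- Two separate weight lists, `theta_correct` and `theta_attempt`, stand in for the odd- and even-indexed $\theta$s.

```python
import math

# Hypothetical time windows, in days, measured back from the current time:
# last hour, last day, last week, last month, and all time (an assumption).
WINDOWS = (1 / 24, 1, 7, 30, math.inf)


def window_counts(history, now, windows=WINDOWS):
    """Count correct answers and attempts inside each window.

    history: list of (timestamp_in_days, correct) pairs for one student on one item,
             all strictly before `now`; correct is 1 or 0
    """
    correct_counts, attempt_counts = [], []
    for tau in windows:
        recent = [c for t, c in history if now - t < tau]
        attempt_counts.append(len(recent))
        correct_counts.append(sum(recent))
    return correct_counts, attempt_counts


def dash_probability(ability, difficulty, history, now, theta_correct, theta_attempt,
                     windows=WINDOWS):
    """DASH-style prediction: IRT plus log-scaled, windowed practice history."""
    c, a = window_counts(history, now, windows)
    z = ability - difficulty
    for w in range(len(windows)):
        # theta_correct weighs log(1 + c_w); theta_attempt weighs log(1 + a_w) with a minus sign.
        z += theta_correct[w] * math.log1p(c[w]) - theta_attempt[w] * math.log1p(a[w])
    return 1.0 / (1.0 + math.exp(-z))


history = [(0.0, 1), (9.5, 0), (9.99, 1)]
print(window_counts(history, now=10.0))  # ([1, 1, 1, 2, 2], [1, 2, 2, 3, 3])
```

Old practice only appears in the wide windows. Each window has its own weight, so the model can learn to discount old practice, and this is how forgetting enters the prediction.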

The authors extend DASH so that it accounts for both multiple skill tagging and memory decay. The resulting model is DAS3H.
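With several skills per item, DASH becomes, roughly, the DAS3H form:

$$\sigma\Big(\alpha_s - \delta_j + \sum_{k \in KC(j)} \beta_k + \sum_{k \in KC(j)} \sum_w \theta_{k,2w+1}\log(1 + c_{s,k,w}) - \theta_{k,2w+2}\log(1 + a_{s,k,w})\Big)$$

The counts are now per skill, and the weights $\theta$ are skill-specific. This minimal sketch assumes the per-skill, per-window counts have already been computed. All names are hypothetical.

```python
import math


def das3h_probability(ability, difficulty, skills, skill_bias, counts,
                      theta_correct, theta_attempt):
    """DAS3H-style prediction for student s on item j (hypothetical names).

    skills:        skill ids tagged on item j, KC(j)
    skill_bias:    dict skill -> beta_k
    counts:        dict skill -> (correct_per_window, attempts_per_window), precomputed
                   for this student over a shared list of time windows
    theta_correct: dict skill -> per-window weights on log(1 + correct count)
    theta_attempt: dict skill -> per-window weights on log(1 + attempt count)
    """
    z = ability - difficulty
    for k in skills:
        z += skill_bias[k]
        n_windows = len(theta_correct[k])
        # Skills never practiced by this student contribute only their bias.
        correct, attempts = counts.get(k, ([0] * n_windows, [0] * n_windows))
        for w in range(n_windows):
            z += (theta_correct[k][w] * math.log1p(correct[w])
                  - theta_attempt[k][w] * math.log1p(attempts[w]))
    return 1.0 / (1.0 + math.exp(-z))


# One skill, one window: three correct answers out of three attempts.
p = das3h_probability(0.0, 0.0, ["fractions"], {"fractions": 0.0},
                      {"fractions": ([3], [3])},
                      {"fractions": [2.0]}, {"fractions": [1.0]})
print(round(p, 6))  # 0.8
```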

This is an example of the activation of a knowledge tracing machine:

[Figure: example activation of a knowledge tracing machine]
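The activation of a KTM is determined by which entries of the sparse sample $\boldsymbol{x}$ are nonzero. The sketch below shows one hypothetical way to build such a sample from four parts:

- a one-hot student;
- a one-hot item;
- indicators for the item's skills;
- per-skill counts of prior correct answers ("wins") and prior wrong answers ("fails").

The feature layout `[students | items | skills | wins | fails]` is our assumption and is not necessarily the one used in the paper.

```python
def encode_sample(student, item, skills, wins, fails, n_students, n_items, n_skills):
    """Build the non-zero entries of a KTM sample x as a dict index -> value.

    Hypothetical layout: [students | items | skills | wins | fails], where
    wins/fails hold the student's prior correct/wrong counts on each tagged skill.
    """
    skill_offset = n_students + n_items
    wins_offset = skill_offset + n_skills
    fails_offset = wins_offset + n_skills
    x = {student: 1, n_students + item: 1}  # one-hot student and item
    for k in skills:
        x[skill_offset + k] = 1  # skill indicator
        if wins.get(k, 0):
            x[wins_offset + k] = wins[k]
        if fails.get(k, 0):
            x[fails_offset + k] = fails[k]
    return x


# Student 2 answers item 1, tagged with skills 0 and 2 (N = 4 + 3 + 3 * 3 = 16 features).
x = encode_sample(2, 1, [0, 2], wins={0: 3}, fails={2: 1}, n_students=4, n_items=3, n_skills=3)
print(sorted(x.items()))  # [(2, 1), (5, 1), (7, 1), (9, 1), (10, 3), (15, 1)]
```

Only these six of the 16 entries are active, so only their biases and embeddings enter the KTM score.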

Tags

Data Science

Related

General (DAS3H: Modeling Student Learning and Forgetting for Optimally Scheduling Distributed Practice of Skills)

Adaptive Spacing Algorithms (DAS3H: Modeling Student Learning and Forgetting for Optimally Scheduling Distributed Practice of Skills)

Bayesian Based Knowledge Tracing (Deep-IRT: Make Deep Learning Based Knowledge Tracing Explainable Using Item Response Theory)

Factor Analysis Based Knowledge Tracing (Deep-IRT: Make Deep Learning Based Knowledge Tracing Explainable Using Item Response Theory)

Deep Learning Based Knowledge Tracing (Deep-IRT: Make Deep Learning Based Knowledge Tracing Explainable Using Item Response Theory)

Factorization Machines

Data and encoding of side information (Knowledge Tracing Machines: Factorization Machines for Knowledge Tracing)

Relation to existing models (Knowledge Tracing Machines: Factorization Machines for Knowledge Tracing)

Training (Knowledge Tracing Machines: Factorization Machines for Knowledge Tracing)