Overfitting/Underfitting vs. Bias/Variance in Supervised Machine Learning

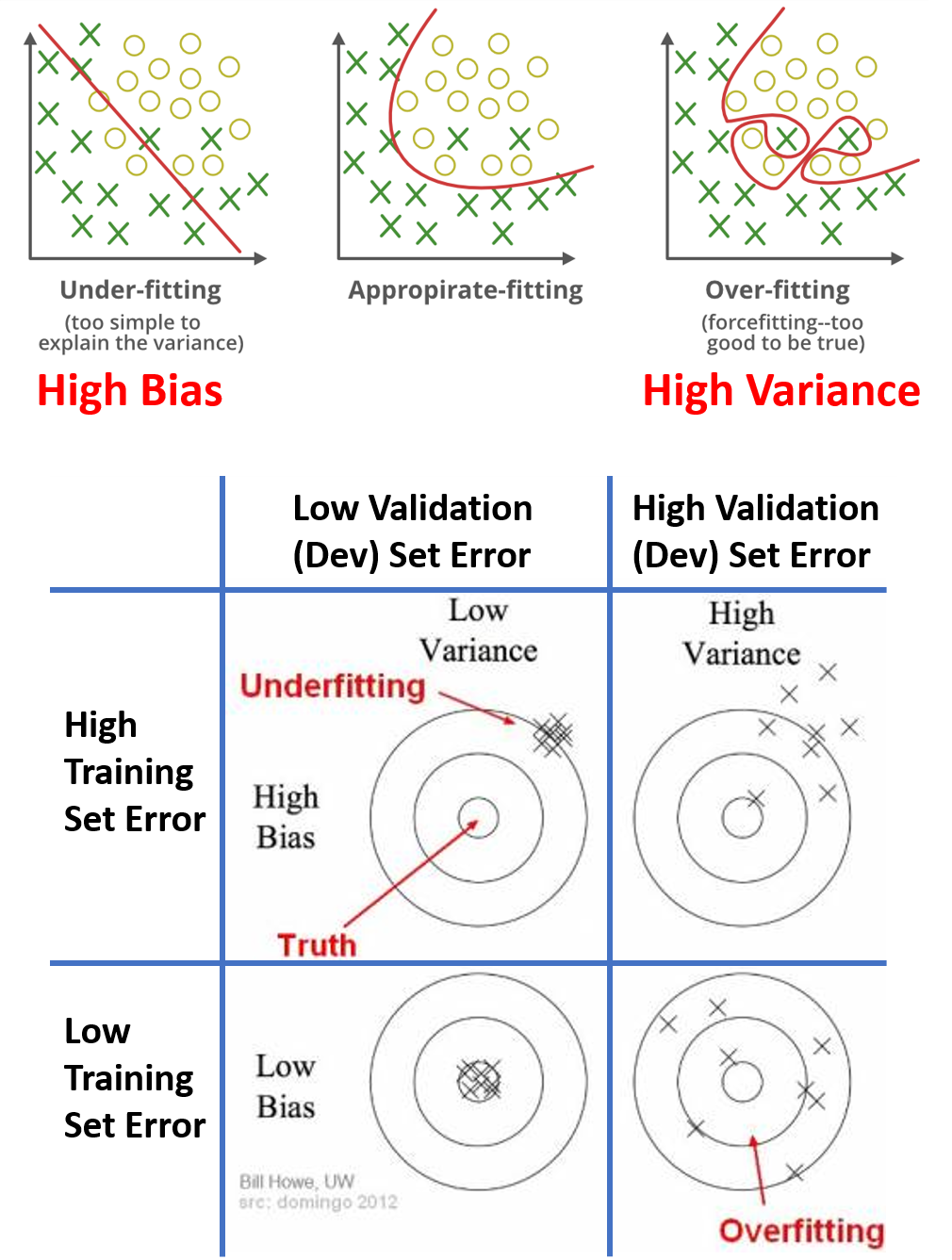

The left plot shows a straight line fitted to the data. The line is too simple to follow the pattern, so the model underfits the data; we say it has high bias. At the opposite extreme, an extremely complex classifier may fit the training data perfectly, yet that is not a good fit either: it follows the noise in the training set and is unlikely to generalize well. Such a classifier has high variance and overfits the data. In between, a classifier of medium complexity may fit the data just right.
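The same three regimes can be reproduced numerically. The sketch below is illustrative only and is not taken from the figure: it assumes a regression setting rather than a classifier, a synthetic noisy sine curve as the data, and polynomial degree as the measure of model complexity. The helper `fit_and_score`, the degrees 1, 3, and 12, and the noise level are all hypothetical choices.

```python
import numpy as np
from numpy.polynomial import Polynomial


def fit_and_score(degree, x_train, y_train, x_test, y_test):
    """Least-squares polynomial fit; returns (train_mse, test_mse)."""
    model = Polynomial.fit(x_train, y_train, degree)
    train_mse = float(np.mean((model(x_train) - y_train) ** 2))
    test_mse = float(np.mean((model(x_test) - y_test) ** 2))
    return train_mse, test_mse


# Assumed synthetic data: a sine curve plus Gaussian noise.
rng = np.random.default_rng(0)


def true_fn(x):
    return np.sin(2 * np.pi * x)


x_train = np.sort(rng.uniform(0.0, 1.0, 15))
y_train = true_fn(x_train) + rng.normal(0.0, 0.2, x_train.size)
x_test = np.linspace(0.0, 1.0, 200)
y_test = true_fn(x_test) + rng.normal(0.0, 0.2, x_test.size)

# Degree 1: straight line (high bias). Degree 12: very flexible (high variance).
results = {
    degree: fit_and_score(degree, x_train, y_train, x_test, y_test)
    for degree in (1, 3, 12)
}

for degree, (train_mse, test_mse) in results.items():
    print(f"degree={degree:2d}  train MSE={train_mse:.4f}  test MSE={test_mse:.4f}")
```

Training error can only shrink as the degree grows, because each higher-degree model contains the lower-degree ones. A large gap between a very low training error and a much higher test error is the usual sign of overfitting, while a high error on both sets points to underfitting.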

Tags

Data Science

Related

Which of the following would be the best choice for the next ridge regression model you train?

How to tell when your model is overfitting

How to avoid overfitting

Generalization Paradox in Deep Learning

towardsdatascience.com: Understanding the Bias-Variance Tradeoff

Learn After

Chain of Assumptions in Supervised Statistical Learning

Understanding the Bias-Variance Tradeoff

Relationship between Capacity, Training and Test Errors, Bias-Variance Tradeoff in Machine learning

What is the issue with higher-order polynomials (high model complexity) in regard to fitting the training data and test data?

Under which circumstances will getting more training data help a learning algorithm to perform better?