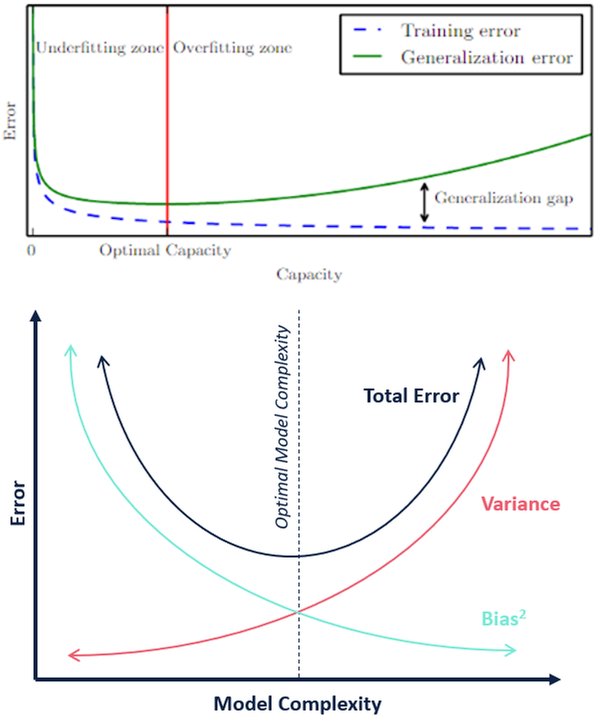

Relationship between Capacity, Training and Test Errors, Bias-Variance Tradeoff in Machine Learning

Training error and test error behave differently as model capacity grows. At the left end of the graph, capacity is too low and both training error and test error are high; this is the underfitting regime. As capacity increases, training error decreases, but the gap between training error and generalization (test) error widens. Eventually the growth of this gap outweighs the decrease in training error, so test error starts to rise again. This is the overfitting regime, where capacity exceeds the optimal capacity.
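The behaviour described above can be reproduced with a small experiment. The following is a minimal sketch, not taken from the original figure: it assumes polynomial degree as a stand-in for capacity, a synthetic target `sin(pi * x)` with Gaussian noise, and arbitrarily chosen sample sizes. The names `make_data`, `mse`, and `results` are illustrative only.

```python
import numpy as np

# Assumption: synthetic data; the original figure does not specify a dataset.
rng = np.random.default_rng(0)


def make_data(n):
    """Draw n noisy samples of sin(pi * x) on [-1, 1]."""
    x = rng.uniform(-1.0, 1.0, size=n)
    y = np.sin(np.pi * x) + rng.normal(scale=0.3, size=n)
    return x, y


def mse(coeffs, x, y):
    """Mean squared error of the polynomial with the given coefficients."""
    return float(np.mean((np.polyval(coeffs, x) - y) ** 2))


# A small training set makes the effect of excess capacity visible;
# a large test set gives a stable estimate of generalization error.
x_train, y_train = make_data(20)
x_test, y_test = make_data(1000)

results = []  # (degree, training error, test error)
for degree in range(0, 11):
    # Least-squares polynomial fit; a higher degree means higher capacity.
    coeffs = np.polyfit(x_train, y_train, deg=degree)
    results.append(
        (degree, mse(coeffs, x_train, y_train), mse(coeffs, x_test, y_test))
    )

for degree, train_err, test_err in results:
    gap = test_err - train_err
    print(f"degree={degree:2d}  train={train_err:.3f}  "
          f"test={test_err:.3f}  gap={gap:.3f}")
```

Because each polynomial family contains the lower-degree ones, the least-squares training error cannot increase with degree. The test error, in contrast, is typically lowest at some intermediate degree; where exactly that optimum falls depends on the noise level and the training set size assumed here.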

Tags

Data Science
