Probability Distribution and Generative Learning

- On its forward pass, an RBM uses its inputs to predict the activations of the hidden nodes; that is, it estimates the probability of the activations given the inputs and the weights.

- On its backward pass, activations are fed in and reconstructions (guesses about the original data) come out. Here the RBM is estimating the probability of the inputs given the hidden-layer activations, which are weighted with the same coefficients as those used on the forward pass.

- Reconstruction amounts to guessing the probability distribution of the original input, i.e. the values of many varied points at once. This is known as generative learning.
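The two passes described above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: it assumes binary units with sigmoid activations, and the names `forward_pass`, `backward_pass`, `W`, `b_hidden`, and `b_visible`, along with the layer sizes and random weights, are hypothetical choices made for the example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward_pass(v, W, b_hidden):
    """Probability that each hidden unit is on, given the visible inputs."""
    return sigmoid(v @ W + b_hidden)

def backward_pass(h, W, b_visible):
    """Probability that each visible unit is on, given hidden activations.

    The same weight matrix W is reused (transposed); only the bias differs.
    """
    return sigmoid(h @ W.T + b_visible)

# Assumed toy setup: 6 visible units, 3 hidden units, small random weights.
rng = np.random.default_rng(0)
n_visible, n_hidden = 6, 3
W = rng.normal(scale=0.1, size=(n_visible, n_hidden))
b_hidden = np.zeros(n_hidden)
b_visible = np.zeros(n_visible)

v = np.array([[1, 0, 1, 1, 0, 0]], dtype=float)  # one binary input row

p_h = forward_pass(v, W, b_hidden)               # forward: inputs -> activations
h = (rng.random(p_h.shape) < p_h).astype(float)  # sample binary hidden states
v_recon = backward_pass(h, W, b_visible)         # backward: activations -> reconstruction
```

With untrained weights, `v_recon` is only a rough guess at `v`; training adjusts `W` and the biases so that reconstructions move closer to the data.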

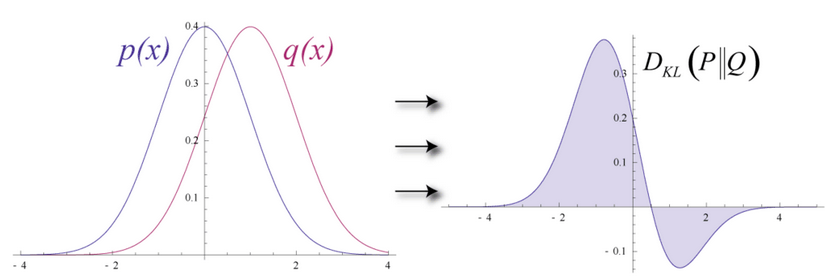

- To measure the distance between its estimated probability distribution and the ground-truth distribution of the input, RBMs use Kullback-Leibler (KL) divergence.

- Intuitively, KL divergence measures the non-overlapping, or diverging, areas under the two curves. An RBM’s optimization algorithm attempts to minimize those areas so that the shared weights, when multiplied by the hidden-layer activations, produce a close approximation of the original input.
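To make the measure concrete, here is a small sketch of KL divergence for discrete distributions, computed as the sum of `p * log(p / q)` over all outcomes. The function name `kl_divergence`, the `eps` guard, and the example distributions are assumptions made for illustration; the sketch shows only the measure itself, not how an RBM is trained.

```python
import numpy as np

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions over the same outcomes."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    p = p / p.sum()  # normalize so each vector sums to 1
    q = q / q.sum()
    mask = p > 0     # outcomes with p == 0 contribute nothing to the sum
    # eps guards against division by zero where q is 0 but p is not
    return float(np.sum(p[mask] * np.log(p[mask] / np.maximum(q[mask], eps))))

# Hypothetical "ground-truth" distribution and two candidate estimates.
p_data = [0.1, 0.4, 0.4, 0.1]
q_close = [0.15, 0.35, 0.4, 0.1]
q_far = [0.7, 0.1, 0.1, 0.1]

kl_close = kl_divergence(p_data, q_close)
kl_far = kl_divergence(p_data, q_far)
```

Here `kl_close` comes out smaller than `kl_far`, matching the intuition that a better estimate diverges less from the data. Note that KL divergence is not symmetric: `kl_divergence(p, q)` generally differs from `kl_divergence(q, p)`, so it is not a distance in the strict mathematical sense.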

Updated 2021-07-14

Tags

Data Science