Structure of Restricted Boltzmann Machine

- Restricted Boltzmann Machines (RBMs) are shallow, two-layer neural nets that constitute the building blocks of deep-belief networks. The first layer of the RBM is called the visible, or input, layer, and the second is the hidden layer.

- Forward pass: The value of each visible node is multiplied by a separate weight, the products are summed and added to a bias, and the result is passed through an activation function to produce the output of a hidden node. Repeating this for every hidden node, each with its own weights and bias, gives the activations of the hidden layer.

- Because every visible node passes its input to every hidden node, and no two nodes within the same layer are connected, an RBM can be described as a symmetrical bipartite graph.
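The forward pass described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the sigmoid activation, the function names, and the example weights and biases are all assumptions chosen for the sketch, since the text only says "an activation function".

```python
import math

def sigmoid(x):
    # Logistic activation; assumed here, the text does not name one.
    return 1.0 / (1.0 + math.exp(-x))

def forward_pass(visible, weights, hidden_bias):
    """Compute hidden activations from visible values.

    weights[i][j] is the weight on the edge between visible node i
    and hidden node j (hypothetical layout chosen for this sketch).
    """
    hidden = []
    for j in range(len(hidden_bias)):
        # Multiply each visible value by its weight and sum the products.
        total = sum(visible[i] * weights[i][j] for i in range(len(visible)))
        # Add the hidden node's bias, then apply the activation function.
        hidden.append(sigmoid(total + hidden_bias[j]))
    return hidden

# Illustrative values only: 3 visible nodes, 2 hidden nodes.
visible = [1.0, 0.0, 1.0]
weights = [[0.5, -0.2],
           [0.1, 0.4],
           [-0.3, 0.8]]
hidden_bias = [0.0, -0.1]

hidden = forward_pass(visible, weights, hidden_bias)
print(hidden)
```

Every visible node contributes to every hidden node, which is the full bipartite connectivity noted in the last bullet.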

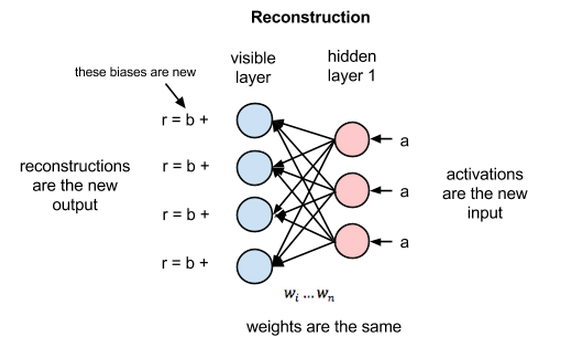

- Reconstruction phase: The activations of the hidden layer become the input in a backward pass. Each activation is multiplied by the same weight that was used on that edge in the forward pass, one per internode edge. At each visible node, the sum of those products is added to a visible-layer bias, and the result is a reconstruction, i.e., an approximation of the original input. This can be represented by the following diagram:
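The same backward pass can also be sketched in plain Python. As before, this is a hedged illustration: the function names and numbers are hypothetical, and passing the biased sum through a sigmoid is an assumption (common for binary visible units) that the text does not state.

```python
import math

def sigmoid(x):
    # Logistic activation; an assumption of this sketch.
    return 1.0 / (1.0 + math.exp(-x))

def reconstruct(hidden, weights, visible_bias):
    """Compute a reconstruction of the visible layer from hidden activations.

    weights[i][j] is the weight on the edge between visible node i and
    hidden node j, i.e. the same weights a forward pass would use.
    """
    reconstruction = []
    for i in range(len(visible_bias)):
        # Reuse the forward-pass weights, now read from hidden to visible.
        total = sum(hidden[j] * weights[i][j] for j in range(len(hidden)))
        # Add the visible-layer bias for this node, then squash (assumed).
        reconstruction.append(sigmoid(total + visible_bias[i]))
    return reconstruction

# Illustrative values only: 2 hidden nodes, 3 visible nodes.
hidden = [1.0, 0.0]
weights = [[0.5, -0.2],
           [0.1, 0.4],
           [-0.3, 0.8]]
visible_bias = [0.0, 0.0, 0.0]

reconstruction = reconstruct(hidden, weights, visible_bias)
print(reconstruction)
```

Note that the weights are shared between the two directions, while the hidden-layer and visible-layer biases are separate sets of parameters.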

Updated 2021-07-29

Tags

Data Science