Concept

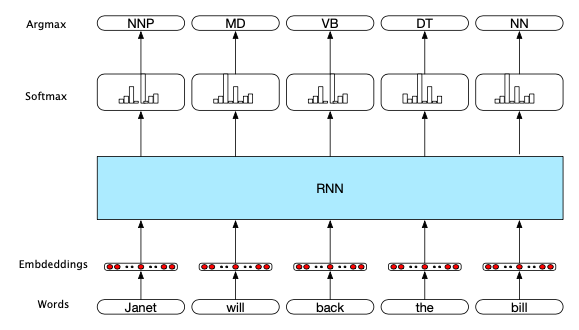

RNN's Approach to Sequence Labeling in NLP

In NLP, to assign a label to each word (token) of a sequence, each word is first mapped to its embedding, which becomes the RNN's input at that time step. The model is an unrolled simple recurrent network in which the same weight matrices are reused at every step. During the forward pass, a softmax layer produces a probability distribution over the candidate labels at each step, and the most likely label is selected from that distribution. Cross-entropy is used as the loss function during training. In POS tagging, for example, pre-trained word embeddings serve as the inputs.
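The steps above can be sketched in plain Python. This is a minimal, dependency-free sketch that assumes the common simple-RNN (Elman) formulation h_t = tanh(U h_{t-1} + W x_t) and y_t = softmax(V h_t), with no bias terms. The weight names `W`, `U`, `V`, the helper functions, and the random vectors standing in for pre-trained embeddings are illustrative assumptions, not part of the original description. Only the forward pass, the per-step choice of the most likely label, and the cross-entropy loss are shown; training by backpropagation through time is omitted.

```python
import math
import random

def softmax(z):
    # Subtract the max for numerical stability before exponentiating
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(M, v):
    # Multiply matrix M (list of rows) by vector v
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in M]

def rnn_tag(embeddings, W, U, V):
    """Forward pass of a simple RNN for sequence labeling (assumed formulation).

    embeddings: list of input vectors x_t, one per token
    W: input-to-hidden, U: hidden-to-hidden, V: hidden-to-output weights;
    the same three matrices are reused at every time step.
    Returns per-step label distributions and the argmax label indices.
    """
    h = [0.0] * len(U)  # h_0 initialized to zeros
    dists, labels = [], []
    for x in embeddings:
        # h_t = tanh(U h_{t-1} + W x_t)
        pre = [a + b for a, b in zip(matvec(U, h), matvec(W, x))]
        h = [math.tanh(v) for v in pre]
        # y_t = softmax(V h_t): distribution over the candidate labels
        y = softmax(matvec(V, h))
        dists.append(y)
        # Pick the most likely label at this step
        labels.append(max(range(len(y)), key=y.__getitem__))
    return dists, labels

def cross_entropy(dists, gold):
    # Mean negative log-probability assigned to the gold label at each step
    return -sum(math.log(y[g]) for y, g in zip(dists, gold)) / len(gold)

def rand_matrix(rows, cols):
    # Hypothetical random initialization, for illustration only
    return [[random.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

# Usage: a 3-token "sentence" with random vectors standing in for embeddings
random.seed(0)
emb_dim, hidden, n_tags = 4, 3, 5
W = rand_matrix(hidden, emb_dim)
U = rand_matrix(hidden, hidden)
V = rand_matrix(n_tags, hidden)
sentence = [[random.uniform(-1, 1) for _ in range(emb_dim)] for _ in range(3)]
dists, labels = rnn_tag(sentence, W, U, V)
loss = cross_entropy(dists, gold=[0, 1, 2])
```

Because `W`, `U`, and `V` are shared across time steps, the same function tags sentences of any length; only the number of loop iterations changes.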

Updated 2021-11-14

Tags

Data Science