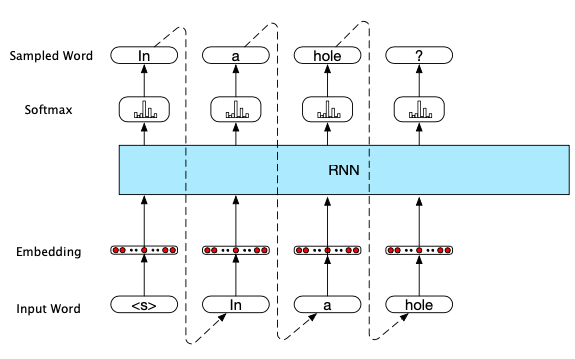

RNN's Approach to Text Generation in NLP (Autoregressive Generation)

Autoregressive generation (Shannon, 1951) produces a sequence one word at a time, with each word conditioned on the words generated before it. The model is trained with cross-entropy as the loss function and evaluated with perplexity. At each time step, a softmax layer over the vocabulary turns the RNN's current hidden state into a probability distribution from which the next word is chosen, and that word is fed back in as the next input. Generation stops once the sequence reaches a predefined length. The initial input token(s) depend on the nature of the NLP task (e.g. machine translation, question answering, summarization).
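The generation loop and the two metrics can be sketched in plain Python. This is a minimal illustration rather than a reference implementation: the toy vocabulary, the hidden size, the random untrained weights, and the helper names (`rnn_step`, `generate`, `cross_entropy`) are all assumptions made for the example.

```python
import math
import random

VOCAB = ["<s>", "the", "cat", "sat", "on", "mat"]  # toy vocabulary (assumption)
V, H = len(VOCAB), 8  # vocabulary size, hidden size (assumption)

rng = random.Random(0)  # fixed seed so the sketch is repeatable

def rand_matrix(rows, cols):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

# Untrained, randomly initialised parameters (illustration only).
W_xh = rand_matrix(H, V)  # one-hot input word -> hidden
W_hh = rand_matrix(H, H)  # previous hidden -> hidden
W_hy = rand_matrix(V, H)  # hidden -> vocabulary logits

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def rnn_step(token_id, h):
    """One RNN step: update the hidden state, return next-word probabilities."""
    new_h = [
        math.tanh(W_xh[i][token_id] + sum(W_hh[i][j] * h[j] for j in range(H)))
        for i in range(H)
    ]
    logits = [sum(W_hy[k][i] * new_h[i] for i in range(H)) for k in range(V)]
    return new_h, softmax(logits)

def generate(start_id, max_len):
    """Sample words one at a time, feeding each back in as the next input."""
    h, token, out = [0.0] * H, start_id, []
    for _ in range(max_len):  # stop at the predefined length
        h, probs = rnn_step(token, h)
        token = rng.choices(range(V), weights=probs)[0]
        out.append(token)
    return out

def cross_entropy(token_ids):
    """Mean negative log-likelihood of each token given the tokens before it."""
    h, nll = [0.0] * H, 0.0
    for prev, target in zip(token_ids, token_ids[1:]):
        h, probs = rnn_step(prev, h)
        nll -= math.log(probs[target])
    return nll / (len(token_ids) - 1)

sample = generate(start_id=0, max_len=5)
loss = cross_entropy([0] + sample)
perplexity = math.exp(loss)  # perplexity is the exponential of the cross-entropy
print([VOCAB[t] for t in sample], round(loss, 3), round(perplexity, 3))
```

Because the weights are untrained, the sampled words are essentially random; the sketch only shows how the hidden state, the softmax layer, the feedback of each sampled word, and the length-based stopping rule fit together. Here the start token `<s>` stands in for the task-dependent initial input.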

Tags

Data Science
