
Self-Attention layer understanding - Step 1 - Getting rid of the RNN

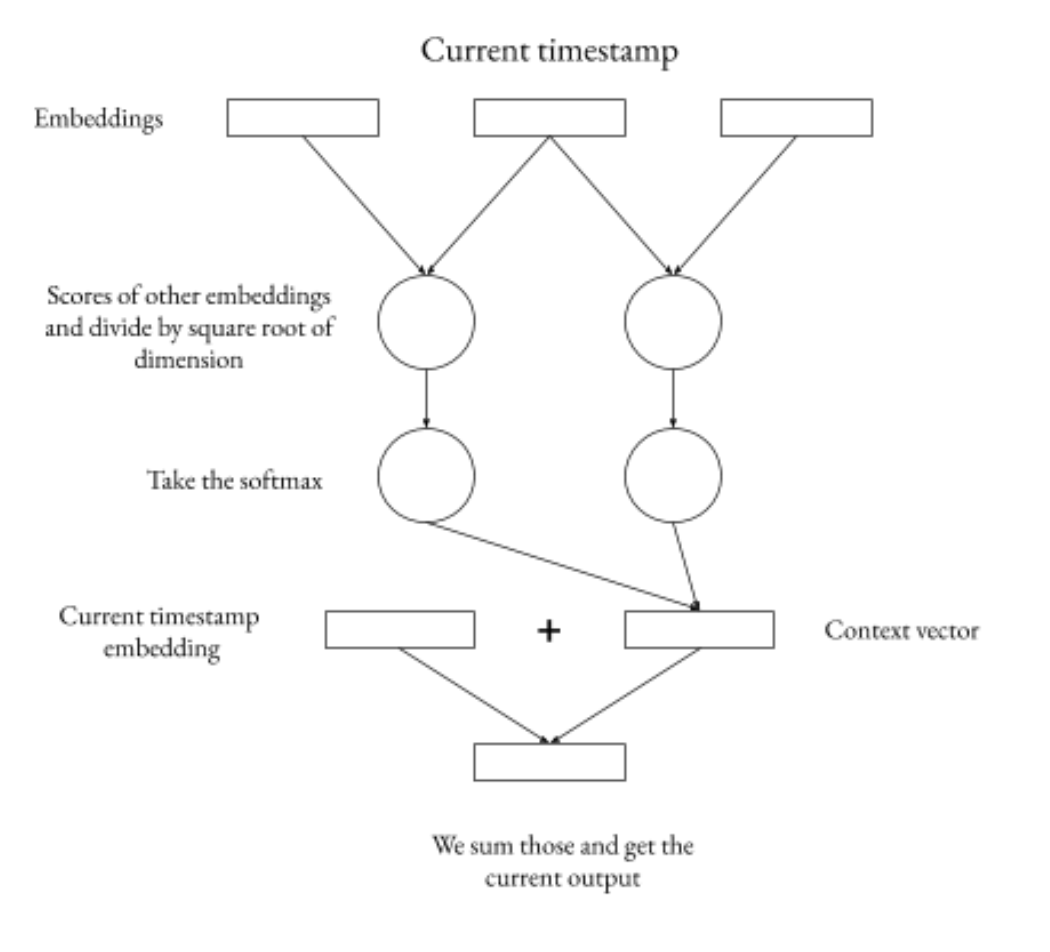

Note that this is not how the actual self-attention layer in the Transformer works; it is just a modification of the seq2seq encoder. As a first step, let's get rid of the RNNs used in seq2seq. For each word embedding, we score all the other embeddings using the dot-product attention score we saw before. Each of those scores is divided by the square root of the dimension of the input vectors to the score function, a trick that should help keep gradients stable. We then take a softmax over the scaled scores and compute the weighted sum of the other embeddings using the softmax weights. Adding this vector to the embedding of the current word gives the self-attention layer's output for the current timestep.
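The steps described here can be sketched in a few lines of NumPy. This is only a rough illustration, not the actual Transformer layer: the function name `simple_self_attention` and the variable names are made up for this example, and it assumes "all others" means a word does not attend to itself (the diagonal is masked out), so it needs at least two words in the sequence.

```python
import numpy as np

def simple_self_attention(X):
    """X: (n_words, d) array of word embeddings, with n_words >= 2 (assumption).

    Returns (outputs, weights): outputs has the same shape as X,
    weights is the (n_words, n_words) matrix of softmax scores.
    """
    n_words, d = X.shape

    # Dot-product score between every pair of embeddings,
    # divided by sqrt(d) to keep the scores in a stable range.
    scores = X @ X.T / np.sqrt(d)

    # Assumption: each word scores only the *other* words, so mask the diagonal.
    np.fill_diagonal(scores, -np.inf)

    # Row-wise softmax (subtracting the row max for numerical stability).
    scores = scores - scores.max(axis=1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)

    # Weighted sum of the other embeddings for each word.
    context = weights @ X

    # Add the context vector to the word's own embedding.
    return X + context, weights


# Usage: three 2-dimensional "embeddings" (toy values).
X = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
outputs, weights = simple_self_attention(X)
print(outputs.shape)         # one output vector per word
print(weights.sum(axis=1))   # each row of weights sums to 1
```

Note that there are no learned parameters here. Everything is computed directly from the embeddings, which is one reason this is only a stepping stone towards the real self-attention layer.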


Tags

Data Science