Self-Attention layer understanding - Step 2 - Keys, Queries

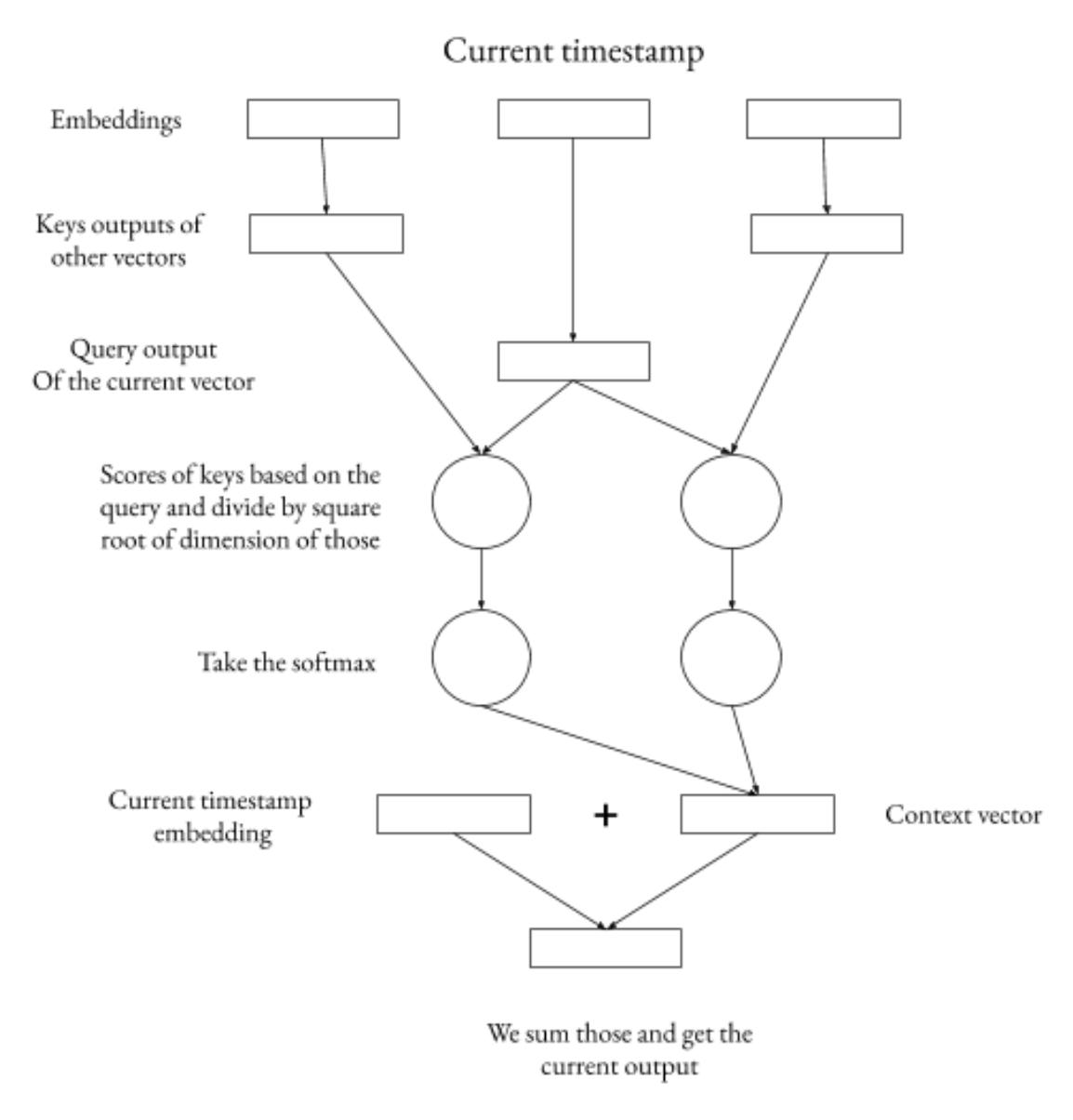

Now let's look back at the previous modification. There, the words that get the biggest scores are simply the ones most similar to the current word. We want the relevant words to have a big score, not just the similar ones. So at this step, instead of taking the dot product of the raw embeddings, we pass those embeddings through an ordinary Dense layer (no activation function) before calculating the scores. This matrix (MLP) is called the Key matrix. It would also be good, at each time step, to pass the current vector through a different matrix rather than through Keys (because if we passed it through Keys as well, the scores would again depend only on the similarity between the vectors). We call this one the Query matrix (MLP). So at each step we pass the current vector through the Query network and all the other vectors through the Keys network. The rest of the process then goes as before.
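To make this step concrete, here is a minimal NumPy sketch. It assumes that "as before" means a softmax over the scores followed by a weighted sum of the original embeddings. The names `attention_step2`, `W_q`, and `W_k` are made up for illustration, the Dense layers are written as plain matrix multiplications without a bias term, and the weights are random rather than learned.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention_step2(X, W_q, W_k):
    """X: (n_words, d) embeddings; W_q, W_k: (d, d_k) Dense weights, no activation."""
    Q = X @ W_q            # each word as the "current" word, passed through Queries
    K = X @ W_k            # each word passed through Keys
    # Assumption: every word gets a key, including the current one.
    scores = Q @ K.T       # scores[i, j]: relevance of word j to current word i
    weights = softmax(scores, axis=-1)
    # Assumption: as in the earlier step, the output is a weighted sum of the embeddings.
    return weights @ X, weights

rng = np.random.default_rng(0)
X = rng.normal(size=(4, 8))            # 4 words, embedding size 8
W_q = rng.normal(size=(8, 8)) * 0.1    # hypothetical Query weights
W_k = rng.normal(size=(8, 8)) * 0.1    # hypothetical Key weights
out, weights = attention_step2(X, W_q, W_k)
```

Because `W_q` and `W_k` are different matrices, `scores` is no longer symmetric: word j can be relevant to word i without the reverse being true, which plain embedding similarity cannot express.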
