Learn Before

Transformer Encoder Stack

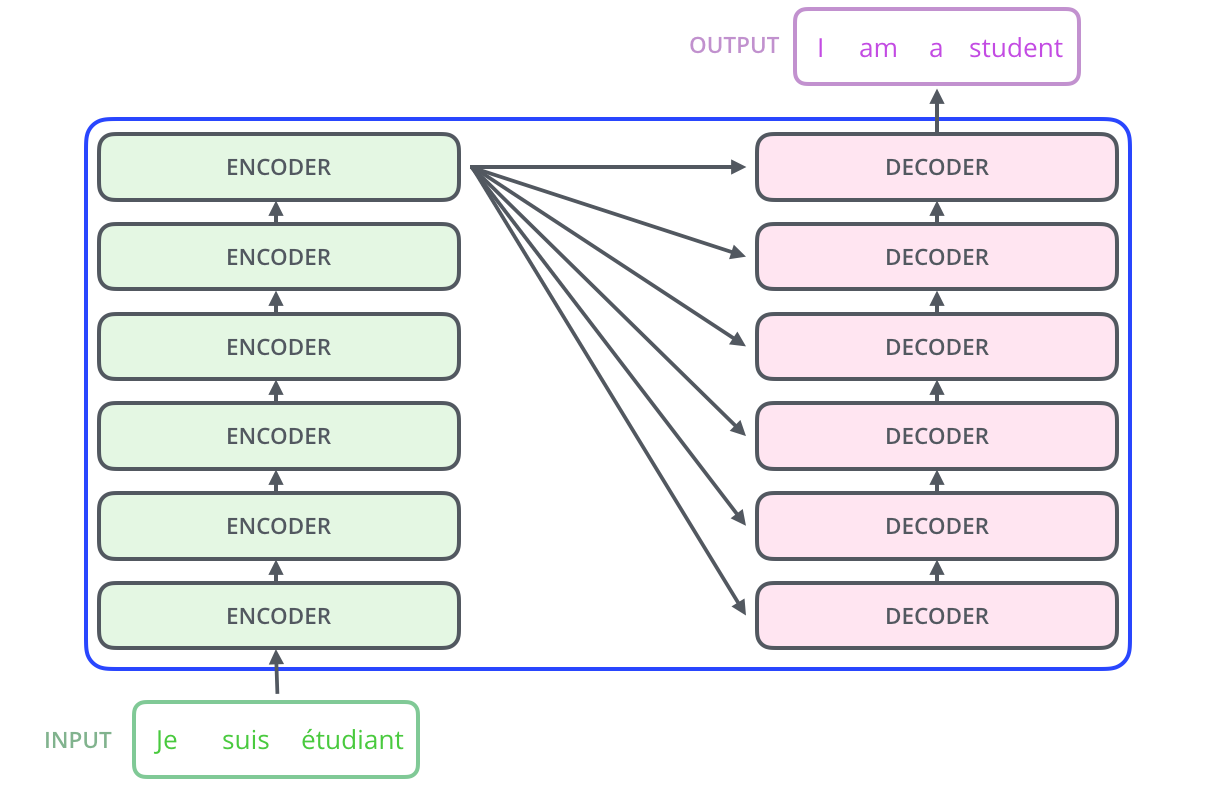

The Transformer encoder is a stack of identical layers. It takes an input sequence whose embeddings have been combined with positional encoding, and it outputs a $d$-dimensional vector representation for each position in that source sequence.
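The stacking idea can be illustrated with a minimal pure-Python sketch. Everything named here (`ToyEncoderLayer`, `encode`, the sizes `d = 8`, `seq_len = 5`, `num_layers = 6`) is a hypothetical choice for illustration, and the internals of each layer are deliberately simplified: attention uses no learned projections and the feed-forward part is a single linear map with ReLU. The point is only the shape of the computation: positional encoding is added once, the same layer structure is applied repeatedly, and every position ends with a d-dimensional vector.

```python
import math
import random


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def layer_norm(x, eps=1e-5):
    mean = sum(x) / len(x)
    var = sum((xi - mean) ** 2 for xi in x) / len(x)
    return [(xi - mean) / math.sqrt(var + eps) for xi in x]


class ToyEncoderLayer:
    """One simplified encoder layer (assumption: not a faithful implementation)."""

    def __init__(self, d, rng):
        self.d = d
        # Each layer has the same structure but its own weights.
        self.W_ffn = [[rng.gauss(0, 0.1) for _ in range(d)] for _ in range(d)]

    def __call__(self, X):
        d = self.d
        # Self-attention with queries = keys = values = X (simplification).
        attended = []
        for q in X:
            scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in X]
            weights = softmax(scores)
            attended.append(
                [sum(w * v[i] for w, v in zip(weights, X)) for i in range(d)]
            )
        # Residual connection followed by layer normalization.
        H = [layer_norm([x + a for x, a in zip(xr, ar)]) for xr, ar in zip(X, attended)]
        # Position-wise feed-forward step: one linear map plus ReLU (simplification).
        out = []
        for h in H:
            f = [max(0.0, sum(w * hi for w, hi in zip(row, h))) for row in self.W_ffn]
            out.append(layer_norm([hi + fi for hi, fi in zip(h, f)]))
        return out


def positional_encoding(num_positions, d):
    # Sinusoidal encoding: sine on even indices, cosine on odd indices.
    pe = []
    for pos in range(num_positions):
        row = []
        for i in range(d):
            angle = pos / (10000 ** (2 * (i // 2) / d))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        pe.append(row)
    return pe


def encode(embeddings, layers):
    d = len(embeddings[0])
    pe = positional_encoding(len(embeddings), d)
    # Combine the input embeddings with positional encoding once, up front.
    X = [[e + p for e, p in zip(er, pr)] for er, pr in zip(embeddings, pe)]
    # Pass the sequence through the stack; shape is preserved at every layer.
    for layer in layers:
        X = layer(X)
    return X


rng = random.Random(0)
d, seq_len, num_layers = 8, 5, 6  # hypothetical sizes
layers = [ToyEncoderLayer(d, rng) for _ in range(num_layers)]
embeddings = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(seq_len)]
outputs = encode(embeddings, layers)
print(len(outputs), len(outputs[0]))  # 5 8
```

Because every layer maps a sequence of d-dimensional vectors to a sequence of the same shape, layers can be stacked to any depth without changing the interface between them.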

Tags

Data Science

Foundations of Large Language Models Course

Computing Sciences

D2L

Dive into Deep Learning @ D2L

Learn After

Standard Transformer Encoding Procedure

Key Hyperparameters of a Transformer Encoder

Transformer Encoding of a Masked Bilingual Sentence Pair

Prefix Tuning

In a sequence-to-sequence model, the input is processed by a stack of six encoder layers that have identical structures. A proposal is made to modify this architecture so that all six encoder layers share the exact same set of weights, with the goal of reducing the total number of model parameters. Which statement best analyzes the primary consequence of this change on the model's ability to process information?

A sentence is fed into the encoder side of a Transformer model. Arrange the following steps in the correct sequence to describe how the initial input is processed by the stack of encoders.

Improving a Transformer's Contextual Understanding

Positional Encoding

Transformer Encoder Sublayers