Logistic Regression Gradient Descent Derivation

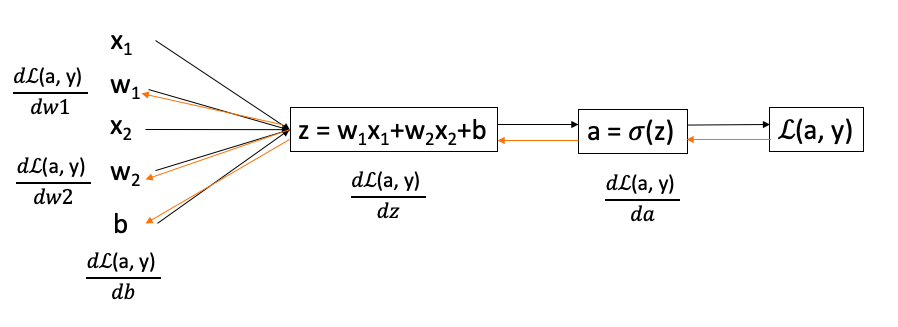

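If we have only two features, $x_1$ and $x_2$, we can minimize the loss function by applying gradient descent to update $w_1$, $w_2$, and $b$. To compute the derivatives of the loss $L$ with respect to $w_1$, $w_2$, and $b$, we first need the derivatives of $L$ with respect to $a$ and $z$, where $z = w_1 x_1 + w_2 x_2 + b$ is the linear combination and $a = \sigma(z)$ is the model's prediction.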
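The chain of derivatives can be sketched for a single training example. This is a minimal illustration, assuming the usual notation $z = w_1 x_1 + w_2 x_2 + b$, $a = \sigma(z)$, and the log loss $L(a, y) = -(y \log a + (1 - y) \log(1 - a))$. The function names `sigmoid`, `loss_and_gradients`, and `gradient_step`, the learning rate `alpha`, and the sample values are assumptions made for illustration and do not come from the original.

```python
import math


def sigmoid(z):
    """Logistic function, mapping any real z into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def loss_and_gradients(x1, x2, y, w1, w2, b):
    """Return the loss and its derivatives for one example with two features."""
    # Forward pass: linear combination, then sigmoid, then log loss.
    z = w1 * x1 + w2 * x2 + b
    a = sigmoid(z)
    loss = -(y * math.log(a) + (1 - y) * math.log(1 - a))

    # Backward pass: derivatives with respect to a and z come first.
    da = -y / a + (1 - y) / (1 - a)   # dL/da
    dz = da * a * (1 - a)             # dL/dz, algebraically equal to a - y

    # Derivatives with respect to the parameters follow from dz.
    dw1 = x1 * dz
    dw2 = x2 * dz
    db = dz
    return loss, {"da": da, "dz": dz, "dw1": dw1, "dw2": dw2, "db": db}


def gradient_step(x1, x2, y, w1, w2, b, alpha=0.1):
    """Apply one gradient descent update to w1, w2, and b."""
    _, grads = loss_and_gradients(x1, x2, y, w1, w2, b)
    return (
        w1 - alpha * grads["dw1"],
        w2 - alpha * grads["dw2"],
        b - alpha * grads["db"],
    )


# Hypothetical example values, chosen only to exercise the functions.
w1, w2, b = 0.1, -0.2, 0.0
loss_before, _ = loss_and_gradients(1.0, 2.0, 1, w1, w2, b)
w1, w2, b = gradient_step(1.0, 2.0, 1, w1, w2, b, alpha=0.1)
loss_after, _ = loss_and_gradients(1.0, 2.0, 1, w1, w2, b)
print(loss_before, loss_after)
```

Computing `dz` through `da` mirrors the order described above. In practice the simplified form `a - y` is often used directly, since it avoids dividing by values of `a` close to 0 or 1.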


Tags

Data Science

Related

Logistic regression loss function vs. cost function

Logistic Regression Cost Function

A machine learning model is trained for a binary classification task where the goal is to predict a label y (either 0 or 1). The model's prediction, ŷ, is a probability between 0 and 1. The performance on a single example is measured using the loss function: L(ŷ, y) = -(y*log(ŷ) + (1 - y)*log(1 - ŷ)). Consider two scenarios for an example where the true label y is 1:

- Scenario A: The model predicts ŷ = 0.9.
- Scenario B: The model predicts ŷ = 0.1.

Which scenario results in a higher loss value, and why?

When training a logistic regression model for binary classification, the standard approach is to use the logarithmic loss function: L(ŷ, y) = -(y*log(ŷ) + (1 - y)*log(1 - ŷ)). An alternative could be the squared error loss: L(ŷ, y) = (ŷ - y)². What is the primary reason the logarithmic loss is preferred for this task?

Calculating Loss for a Single Prediction

Derivation of the Gradient Descent Formula

Mini-Batch Gradient Descent

Epoch in Gradient Descent

Gradient Descent with Momentum

For logistic regression, the gradient is given by $\frac{\partial}{\partial \theta_j} J(\theta) = \frac{1}{m} \sum_{i=1}^{m} \left(h_\theta(x^{(i)}) - y^{(i)}\right) x_j^{(i)}$. Which of these is a correct gradient descent update for logistic regression with a learning rate of $\alpha$?

Suppose you have the following training set, and fit a logistic regression classifier .

Backpropagation

Batch vs Stochastic vs Mini-Batch Gradient Descent
